AI Ethics (vi): The Labor Trap

The Commodification of Thought and the Erosion of the Cognitive Middle Class

Preface: Co-written with Gemini.

For decades, the narrative of automation followed a predictable script: machines would replace "brawn" (physical labor), while humans would retain the monopoly on "brains" (cognitive and creative labor). We were told that as long as one stayed "up-skilled" in a STEM field or a creative craft, the silicon revolution would be a partner, not a predator. But as we move through 2026, that promise has been exposed as a historical fluke. Large Language Models and Generative AI have triggered the Great Cognitive Displacement.

The Great Cognitive Displacement is the 21st-century successor to the Industrial Revolution, but with a fundamental, unsettling difference. While the 19th century focused on automating "brawn" (physical output), the 2020s are focused on automating the synthesis of meaning. This isn't just about robots moving boxes; it’s about algorithms moving ideas, drafting contracts, and generating code. Unlike the Industrial Revolution, which targeted the factory floor, the AI revolution is aimed squarely at the "Cognitive Middle Class"—the paralegals, junior developers, graphic designers, copywriters, and middle managers who formed the backbone of the modern economy. We are no longer automating the movement of things; we are automating the meaning of things.

The most visible sign of this displacement is what economists call the "Junior Bottleneck." A landmark Stanford study in early 2026, analyzing payroll data from millions of workers, found a startling trend: while employment for senior professionals in AI-exposed fields remained stable or even grew, employment for workers aged 22 to 25 plummeted by nearly 6%. Historically, junior roles were the "training grounds" of a profession. A young paralegal learned the law by summarizing thousands of pages of discovery; a junior coder learned architecture by writing the boilerplate. Today, AI handles these "manageable bits" of work with 99% accuracy in seconds. For a corporation focused on quarterly efficiency, hiring a beginner is no longer seen as a long-term investment, but as an avoidable cost. We are effectively removing the first ten rungs of the career ladder, leaving a generation of "un-apprenticed" workers with no clear path to seniority.

This creates the Seniority Paradox: we have a surplus of high-level "Architects" who understand the big picture, but we have destroyed the mechanism that creates them. In five to ten years, as current seniors retire, who will replace them? You cannot become a master of a craft if you never spent your youth struggling with its basic, "automatable" components. Expertise is built through the very "grunt work" that AI has now commodified. For those who remain in the workforce, the nature of work has shifted from Creation to Curation. Professionals have increasingly become "AI Janitors": highly skilled individuals whose primary task is to "clean up" the hallucinations, biases, and "workslop" generated by LLMs. This work lacks the "flow state" and sense of agency that define human professional pride. Instead of solving a problem, the human is now a "verifier" for a machine that can produce "B+" work instantly. This leads to Cognitive Atrophy, a decline in critical thinking and original problem-solving as we become over-reliant on the machine’s first draft.
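The replacement question behind the Seniority Paradox can be framed as a simple stock-and-flow problem. The Python sketch below is only an illustration under loudly stated assumptions: the function name, the starting headcounts, the 20% yearly promotion rate, and the 10% yearly retirement rate are all hypothetical and do not come from any study.

```python
from typing import List


def simulate_seniors(
    years: int,
    seniors: float,
    juniors: float,
    junior_hires_per_year: float,
    promotion_rate: float = 0.2,   # hypothetical share of juniors promoted each year
    retirement_rate: float = 0.1,  # hypothetical share of seniors retiring each year
) -> List[float]:
    """Return the senior headcount at the end of each simulated year."""
    history = []
    for _ in range(years):
        promoted = juniors * promotion_rate   # juniors who reach seniority this year
        retired = seniors * retirement_rate   # seniors who leave this year
        juniors = juniors - promoted + junior_hires_per_year
        seniors = seniors - retired + promoted
        history.append(seniors)
    return history


# Hypothetical firm: 100 seniors and 50 juniors at the start.
with_hiring = simulate_seniors(10, seniors=100, juniors=50, junior_hires_per_year=10)
without_hiring = simulate_seniors(10, seniors=100, juniors=50, junior_hires_per_year=0)
```

In this toy model the senior pool holds steady while juniors keep being hired, and it shrinks year after year once junior hiring stops, because the only inflow of new seniors is the draining junior pool.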

Ultimately, the Great Cognitive Displacement is forcing a radical revaluation of human labor. If "Productivity" (the volume of output) is now the domain of the machine, then human value must shift toward Presence and Accountability. We are seeing the emergence of a "Human-Premium" market. In 2026, a "Human-Certified" legal defense or a "Human-Witnessed" journalistic report carries a higher moral and financial weight because a human is willing to stake their reputation—and their legal liability—on the result. The Great Cognitive Displacement is not just a threat; it is a filter, forcing us to strip away the "automatable" parts of our jobs to find the small, uncomputable core of what it actually means to be a professional.

In the early 20th century, Frederick Taylor revolutionized the factory by breaking down physical tasks into timed, optimized movements. In the AI era, we are witnessing the rise of Algorithmic Taylorism, the 21st-century evolution of the "Scientific Management" that Taylor set out in The Principles of Scientific Management (1911). While the original Taylorism used a stopwatch and a clipboard to break physical factory labor into timed, repetitive, and "optimized" motions, Algorithmic Taylorism applies that same cold logic to the human mind. In 2026, this is no longer just about tracking how many widgets a worker produces; it is about the granular, real-time quantification of thought, creativity, and professional judgment.

The core philosophy of Taylorism was that there is "one best way" to perform a task, and that workers should not be allowed to deviate from it (which I do not agree with). In the cognitive age, AI has become the ultimate "Efficiency Consultant." By analyzing the "digital exhaust" of millions of successful projects, AI defines the "optimal" way to write a line of code, draft a legal motion, or design a marketing campaign. The professional is no longer encouraged to find their own "flow" or unique methodology. Instead, they are nudged by the algorithm to follow a standardized, "statistically successful" path. This is the Commodification of Style: when every output must meet an algorithmic "optimization score," individual creativity is treated as "noise" to be filtered out.

Algorithmic Taylorism relies on a level of surveillance that would have been physically impossible in the 20th century. In 2026, white-collar "Performance Management" software uses AI to track three kinds of signal. Active Seconds record not just when you are logged in but the millisecond-by-millisecond rhythm of your keystrokes and mouse movements. Sentiment Analysis scans internal communications (Slack, Teams, email) to assign you a "collaboration score" or "burnout risk" based on the tone of your language. Attention Metrics use eye-tracking through webcams to ensure "focus" during deep-work blocks or meetings.
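To make the "active seconds" idea concrete, here is a minimal Python sketch of how such a metric might be computed from input-event timestamps. The function name, the five-second idle threshold, and both sample workers are hypothetical and chosen purely for illustration; this is not a description of how any particular monitoring product works.

```python
from typing import List


def active_seconds(event_times: List[float], idle_gap: float = 5.0) -> float:
    """Sum the time between consecutive input events.

    Any gap longer than idle_gap (a hypothetical threshold, in seconds)
    is treated as idle time and ignored.
    """
    total = 0.0
    for prev, curr in zip(event_times, event_times[1:]):
        gap = curr - prev
        if gap <= idle_gap:
            total += gap
    return total


# Hypothetical ten-minute window, timestamps in seconds.
# Worker A presses a key every 2 seconds without pausing.
rapid_fire = [float(t) for t in range(0, 600, 2)]
# Worker B types for a minute, thinks for eight minutes, then types for a minute.
deep_thinker = [float(t) for t in range(0, 60, 2)] + [float(t) for t in range(540, 600, 2)]

score_a = active_seconds(rapid_fire)
score_b = active_seconds(deep_thinker)
```

Under these assumptions the constant tapper scores 598 active seconds and the worker who paused to think scores 116, even though nothing in the metric measures what either of them actually produced.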

This creates a high-pressure environment where "busyness" is confused with "productivity." Workers begin to "perform for the algorithm," adopting behaviors that look good on a dashboard—like rapid-fire emailing—rather than engaging in the slow, deep thinking required for true innovation. The psychological cost of this management style is a profound sense of alienation. In traditional Taylorism, the worker was an extension of the machine. In Algorithmic Taylorism, the professional is an extension of the data set. When a junior doctor is forced to follow an AI’s diagnostic checklist rather than their own intuition—or when a writer is told to "pivot" their narrative because a predictive model says it will increase "engagement"—the "intrinsic joy" of the craft is hollowed out. The human becomes a "Compute Unit," a biological processor tasked with executing the machine's "B+" first draft.

This is the application of "optimization logic" to the human mind. Through Algorithmic Management, white-collar work is being "Uber-ized." AI systems now monitor "active seconds" on keyboards, analyze the "sentiment" of internal emails, and set "velocity targets" for creative output. The human is no longer a professional with autonomy; they are a "compute unit" in a larger system. Many high-level professionals now find their roles shifted from creation to curation. They have become "AI Janitors," spending their days cleaning up the "hallucinations" and "stochastic errors" of a model that was trained to replace them. This work is cognitively draining and emotionally unrewarding, leading to a profound crisis of Alienated Labor.

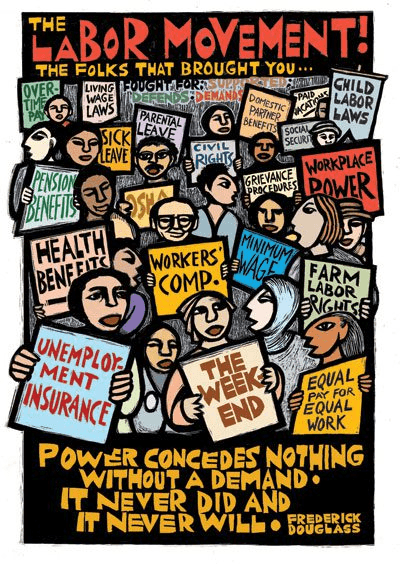

As we previously touched upon in our look at Data Sovereignty, there is a deeply parasitic relationship at the heart of the Labor Trap. AI models are trained on the "intellectual exhaust" of the very people they are designed to displace. A translator who spends twenty years mastering the nuances of Mandarin is inadvertently "donating" their life’s work to the model that will eventually make their role redundant. This is the ultimate extraction: the machine "eats" the human’s past brilliance to automate their future obsolescence. Without a new legal framework for Collective Data Rights, the "Cognitive Middle Class" is essentially being asked to build its own digital executioner. In 2026, the battle for labor rights has shifted from the "factory floor" to the "training set."

The Labor Trap is also an engine of extreme wealth inequality. In a traditional economy, a firm's growth required hiring more people, spreading wealth through wages. In an AI-driven economy, growth is decoupled from headcount. A company can scale its "output" by simply buying more "Compute" (processing power). This creates a Capital-Labor Imbalance. The rewards of AI-driven productivity are not flowing to the workers who provided the training data or the "Human-in-the-Loop" operators; they are flowing to the owners of the infrastructure—the "Compute Sovereigns." We are moving toward a "Winner-Take-All" economy where a few thousand engineers and server-farm owners can generate the economic output of a million-person workforce, leaving the rest of the cognitive middle class to compete for a shrinking pool of "uncomputable" service jobs.

Beneath the sleek interface of modern AI lies a massive, invisible workforce of "Ghost Workers." These are millions of low-paid contractors in the Global South who spend their days "labeling" data, filtering out toxic content, and "RLHF-ing" (Reinforcement Learning from Human Feedback) the models to make them sound human. The ethical trap here is twofold. First, these workers are often exposed to the most traumatic content the internet has to offer to ensure the AI remains "safe" for Western users. Second, they are the "mechanical heart" that gives the illusion of AI "intelligence." By hiding this human labor, tech companies maintain the myth of the "Autonomous Machine," allowing them to avoid the labor protections and minimum wage standards that a human workforce would require. The "Ghost in the Code" isn't just a metaphor; it's a person in a digital sweatshop.

To escape the Labor Trap, we must redefine what we value in work. If the machine can produce a "B+" legal brief or a "good enough" news article in seconds, the value of human labor must shift from Product to Process and Presence. We are seeing the rise of an "Artisanal" movement in cognitive labor. Much like the Arts and Crafts movement responded to the Industrial Revolution, 2026 is seeing a demand for "Human-Certified" work. This is labor that carries the "Moral Weight" of a human witness—work where a person’s reputation, empathy, and ethical judgment are on the line. The goal is not to "compete" with the machine’s speed, but to offer the one thing the machine cannot: Accountability.

The Labor Trap is a choice. We can choose to use AI as a tool that enhances human capability, or we can use it as a replacement that hollows out the human spirit. To live ethically in the age of the "Silicon Hand," we must demand more than just "job retraining." We need a new social contract that compensates people for the use of their intellectual labor in training sets, limits the "Algorithmic Taylorism" of the workplace, and ensures that in critical sectors like health, law, and education, citizens have a right to interact with a human being who is legally and morally accountable. AI is the most powerful "leverage" we have ever created. But if that leverage is used only to lift profits while crushing the "Cognitive Middle Class," we will find ourselves in a world that is perfectly optimized and utterly uninhabitable. We must ensure that as we build the machines of the future, we do not forget the people who taught them how to think. ☀️