Filmmaking in the Age of AI (31): Industrial Light and Magic

literally magic

Preface: Co-written with Claude.

Industrial Light and Magic

The Van Nuys warehouse where ILM was founded in 1975 was chaos from the beginning. Dykstra had assembled a team of young, largely self-taught technicians — people who had never worked on a feature film before, who were building technology nobody had built before, in a facility that was essentially a converted industrial space with no air conditioning in the San Fernando Valley heat. The culture was not what Lucas expected or wanted. Dykstra ran the place loosely — there were parties, there were dogs wandering the floor, there were people who showed up when they felt like it. Progress was slow. The Dykstraflex was being built and rebuilt as problems emerged. Months passed and the shot count wasn't materializing. Lucas visited periodically from England during production and was increasingly alarmed. He was paying for a facility that wasn't producing. By mid-1976 the relationship between Lucas and Dykstra was severely strained. Lucas felt Dykstra was more interested in developing the technology than in delivering the shots the film needed. Dykstra felt Lucas didn't understand how long it took to build something that had never existed before. Both were right. That didn't resolve the problem. When principal photography wrapped and Lucas returned to California, he essentially took over supervision of ILM directly. He pushed the team relentlessly through the final months of 1976 and into 1977, demanding shots, rejecting work that wasn't good enough, driving toward the May release date that Fox had locked in. The team delivered. Barely, and not without some shots being incomplete on opening day, but they delivered.

Beyond the Dykstraflex itself, the broader achievement was creating an entire compositing pipeline from scratch (bro). The optical printer work required to combine the separately filmed elements (spacecraft models, background starfields, laser blasts, explosions) was done at a level of precision and volume that hadn't been attempted before. Star Wars had 365 optical effects shots, far more composite work than any single feature film had previously attempted. Every shot required multiple passes. Every pass had to be precisely aligned. The optical printing process had to be executed with enough consistency that the composited elements would feel like they existed in the same physical space. At 365 shots the margin for error was essentially zero: one badly composited sequence could break the illusion the entire film was building. The team developed new techniques as they went. Methods for reducing the matte lines visible around composited spacecraft. Ways of getting motion blur onto models that were photographed one frame at a time under motion control. Approaches to creating the sense of depth and scale in space environments that had no natural depth cues.

After the film's success Lucas restructured ILM completely. Dykstra left, and the relationship never fully recovered. Lucas moved the facility from Van Nuys to San Rafael in Marin County, near his Skywalker Ranch property. He brought in new leadership and began building ILM into a permanent institution rather than a production-specific facility. The decision to make ILM a standalone company rather than dissolving it after Star Wars was one of the most consequential business decisions in Hollywood history. Lucas essentially created the first modern VFX studio: a facility that existed independently of any single production and could hire out its capabilities to other filmmakers. The Empire Strikes Back, Raiders of the Lost Ark, E.T., Indiana Jones and the Temple of Doom, Young Sherlock Holmes (the first film to feature a fully computer-generated character), The Abyss, Terminator 2, Jurassic Park. ILM's filmography through the 1980s and early 1990s is essentially a catalogue of every major VFX breakthrough of the era (yessss).

Almost every significant technique developed during that period came out of that San Rafael facility. The CGI water creature in The Abyss. The T-1000's liquid metal morphing in Terminator 2. The dinosaurs in Jurassic Park. These weren't separate developments happening independently — they were a continuous research and development process sustained by a permanent institution with institutional memory, accumulated expertise, and the commercial infrastructure to fund ongoing experimentation. That's ILM's deepest legacy. Not any single film or technique, but the model it established — that VFX capability needed to be housed in permanent institutions with research cultures, not assembled and dissolved production by production. Weta Digital in New Zealand, Digital Domain, Framestore, Method Studios — every major VFX house that came after is operating on the institutional model ILM proved was viable.

The VFX industry ILM helped create has a darker side worth naming. The same institutional model that enabled extraordinary work also created an industry with notoriously poor labor conditions. VFX workers are among the least protected in Hollywood — historically non-unionized, frequently working brutal hours under impossible deadlines, often on projects where the budget has been squeezed to the point where the timeline is physically unrealistic. The irony is that the techniques ILM developed made VFX so apparently seamless that studios began treating it as a commodity — something that could be outsourced to the lowest bidder globally, with the expectation that the quality would be identical regardless of the conditions under which the work was produced. The craft that ILM built into an art form became, in the hands of the industry it enabled, a line item to be minimized. Rhythm & Hues — the VFX house that won the Academy Award for Life of Pi in 2013 — filed for bankruptcy two weeks before the ceremony. The visual effects supervisor accepting the award tried to speak about the industry's labor conditions and was played off by the orchestra. That moment crystallized something that had been building for decades — the gap between the cultural prestige of VFX work and the economic reality of the people doing it. ILM itself has remained relatively stable under Lucasfilm and then Disney ownership. But the industry it helped create has not solved the problem it inherited.

The profit participation Lucas negotiated for himself in the Star Wars deal with Fox — famously surrendering his director's fee in exchange for merchandising rights and sequel rights — is the most discussed financial decision in Hollywood history. But his ownership of ILM was equally significant. ILM was a revenue-generating asset independent of any film's performance. It gave Lucas financial stability that didn't depend on his next film succeeding. It gave him leverage over studios that needed ILM's capabilities. And it gave him the ability to develop technology for his own subsequent films — the prequel trilogy's extensive CGI work, the digital filmmaking techniques pioneered on those productions — without depending on outside vendors. Whether that independence served his filmmaking is a separate question. The prequels are their own complicated story. But the institutional and financial logic was sound, and it came directly from the decision to keep ILM alive after Star Wars rather than dissolving it.

Motion Control

The problem Lucas was trying to solve came directly from the World War II aerial combat footage he had been studying: actual gun camera film, the kind shot from the nose of a fighter plane during a dogfight. That's what he wanted Star Wars to feel like. Fast, disorienting, physically immediate. The camera in the middle of the action, not observing it from a safe distance. The way conventional model photography worked at the time was simple and limiting. You put a model on a stand. You put a camera on a tripod. You lit the model. You shot it. The camera didn't move, or if it did, it moved slowly and simply, operated by hand. The resulting footage looked like what it was: a static or slowly moving camera observing a stationary object. For 2001 this was fine. Kubrick wanted slow, deliberate, almost architectural space sequences. The stillness was appropriate to the film's tone and argument.

For Star Wars it was completely wrong. Lucas needed the camera to move through space with the same physical energy as a fighter pilot — banking, diving, accelerating, following ships through turns. And he needed that camera movement to be repeatable. Exactly repeatable. Because every element in the final composite — the ship model, the background starfield, the laser blasts, the explosions — had to be filmed separately and combined later. If the camera moved differently on each pass, the elements wouldn't line up. That's the core problem motion control solved. How do you move a camera through a complex path and then repeat that exact path identically, as many times as you need, with enough precision that separately filmed elements will composite together seamlessly?

The Dykstraflex was a camera mounted on a computer-controlled rig with multiple axes of movement. It could move forward and backward along a track. It could pan left and right. It could tilt up and down. It could roll. It could boom up and down. Each axis was controlled by a separate motor, and each motor was connected to a computer that recorded the exact position of that axis at every moment during a camera move. Once a move was programmed and recorded, the computer could play it back as many times as needed with the motors reproducing the exact same positions at the exact same speeds. The repeatability wasn't approximate — it was precise enough that separately filmed elements would align within a fraction of a millimeter in the final composite. The models themselves were stationary during filming — it was the camera that moved around them. This inverted the conventional approach where models were moved past a stationary camera. Moving the camera rather than the model gave much more precise control over the spatial relationship between the camera and the subject, and made the repeatability problem much more tractable. Because every camera pass followed exactly the same programmed path, every element existed in exactly the same spatial relationship to every other element when they were combined in the optical printer. The X-Wing and the trench wall and the laser blast all behaved as if they occupied the same physical space because the camera had moved through that space identically for each one.
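The record-and-replay idea described above can be sketched in a few lines. This is a minimal illustration, not a reconstruction of the actual Dykstraflex software: the axis names come from the paragraph, while the data layout, the `record_move` and `play_back` functions, and the example path are all hypothetical.

```python
# Axis names follow the description above; everything else is an assumed layout.
AXES = ("track", "pan", "tilt", "roll", "boom")


def record_move(path_fn, frame_count):
    """Sample a camera path once and store every axis position per frame."""
    return [
        tuple(path_fn(frame)[axis] for axis in AXES)
        for frame in range(frame_count)
    ]


def play_back(move):
    """Replay a stored move, yielding identical axis positions on every pass."""
    for frame, positions in enumerate(move):
        yield frame, dict(zip(AXES, positions))


def example_path(frame):
    # Hypothetical move: push in along the track while panning and rolling.
    return {
        "track": 0.5 * frame,
        "pan": 0.1 * frame,
        "tilt": 0.0,
        "roll": 0.25 * frame,
        "boom": 2.0,
    }


move = record_move(example_path, frame_count=48)

# Two separate passes (say, the ship model and the starfield) replay the same data.
model_pass = list(play_back(move))
starfield_pass = list(play_back(move))
print(model_pass == starfield_pass)
```

The point of the sketch is that the move is stored once and every pass reads the same stored values, which is why separately filmed elements can line up in the composite.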

The computer system Dykstra built to control the rig was primitive by any subsequent standard — this was 1975, consumer computing barely existed — but it was sufficient for the task. The programming was done in machine code. The motors were industrial servo motors adapted from other applications. The whole system was essentially handbuilt from components that had never been combined this way before. One of the practical consequences of building the system from scratch was that it could be modified as problems emerged. When a particular shot required a camera movement that the rig couldn't perform in its current configuration, the team rebuilt the relevant components. The Dykstraflex that finished Star Wars was substantially different from the one that started production — it had been continuously refined and extended throughout the process.

Motion control became a standard tool of VFX production almost immediately after Star Wars. The technology was refined and commercialized throughout the late 1970s and 1980s. By the time of the Star Wars sequels it was a known quantity rather than an experimental system. The deeper legacy is conceptual. Motion control established the principle that the camera's movement through space could be treated as data — recorded, stored, replayed, modified. That principle is the direct ancestor of the virtual camera systems used in modern CGI production. When a director on a contemporary film moves a virtual camera through a digitally rendered environment, they're operating on the same conceptual foundation Dykstra built in a Van Nuys warehouse in 1975. The camera move as a reproducible, modifiable piece of information rather than a physical gesture made once and gone.

Optical Printing Pipeline

The pipeline is where the real industrial achievement of Star Wars lives, because the Dykstraflex solved the repeatability problem but not the combination problem. You now had all these separately filmed elements (ships, backgrounds, laser blasts, explosions) sitting on individual strips of film. Getting them onto a single strip of film, 365 times, without the seams showing, was a completely separate mountain to climb. We talked about optical printers earlier in the context of the Williams process on Kong. The basic principle is the same: a projector and a camera facing each other, building a composite image by running multiple film strips through in sequence. But the optical printer ILM built for Star Wars, designed by a technician named Don Trumbull (Douglas Trumbull's father), was far more sophisticated than anything that had existed before. The VistaVision format was the first critical decision. Standard 35mm film runs through the camera vertically, and each frame is roughly 24mm wide by 18mm tall. VistaVision runs the film horizontally through the camera, so each frame is roughly 36mm wide by 24mm tall, about twice the image area. ILM shot all the model photography in VistaVision specifically because the larger frame meant more image information, which meant more latitude for optical printing without the image degrading visibly.
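As a quick check on how much larger the VistaVision frame is, here is the arithmetic using the rounded frame sizes quoted above. The exact camera apertures differ slightly from these round numbers, so treat the result as approximate.

```python
# Frame dimensions in millimetres, using the rounded figures from the text.
STANDARD_35MM = (24, 18)
VISTAVISION = (36, 24)


def frame_area(dimensions):
    """Image area in square millimetres for a (width, height) pair."""
    width, height = dimensions
    return width * height


area_ratio = frame_area(VISTAVISION) / frame_area(STANDARD_35MM)
print(frame_area(STANDARD_35MM), frame_area(VISTAVISION), area_ratio)
```

With these figures the VistaVision frame carries twice the image area of the standard frame.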

Every time you make an optical print — project one strip of film and photograph it onto another — you lose a generation of image quality. The new strip is slightly softer, slightly grainier than the original. A simple shot with one layer of compositing loses one generation. A complex shot with six or seven layers of compositing loses six or seven generations. By the seventh generation on standard 35mm the image would be noticeably degraded — soft, grainy, obviously a composite. The VistaVision format bought ILM extra headroom. Because they were starting with a larger, higher quality image, they could afford to lose more generations before the degradation became visible. Complex shots that would have looked obviously composite on standard 35mm held their quality through the optical printing process.

The single biggest technical challenge in the optical printing pipeline was matte lines — the visible edge that appeared around composited elements where the matte that blocked the background didn't perfectly match the shape of the foreground element. In the Kong era this was accepted as an artifact of the process. In Star Wars it was unacceptable — the film was moving too fast and the composites were too numerous for visible matte lines to be hidden by motion or distance. The ILM team developed several approaches to reducing matte line visibility. The blue screen process — filming models against a pure blue backing so that the blue could be used to generate a precise matte of the model's silhouette — was refined to a level of precision that hadn't been achieved before. The blue had to be an exact specific shade. The lighting on the model had to be controlled so that no blue light spilled onto the model itself — if it did, parts of the model would be treated as background and would disappear in the composite. The matte had to be generated at the correct density so its edge precisely followed the model's outline without either cutting into the model or leaving a visible fringe of blue around it. Even with all of this, some matte line was usually present. The team developed optical techniques for softening the matte edge slightly — enough to make it less visible without blurring the composite in a way that looked wrong.
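The matte logic can be illustrated with a digital analogue. ILM's process was photochemical, so this is not how the work was actually done. It is a minimal sketch of the same idea on RGB values, with a hypothetical `blue_matte` function and an assumed threshold, showing why blue spill on the model makes part of it vanish.

```python
def blue_matte(pixel, threshold=0.3):
    """Return 1.0 for foreground (model) pixels, 0.0 for blue backing.

    A pixel counts as backing when blue exceeds the other channels by more
    than the threshold. The threshold value is an assumption for illustration.
    """
    red, green, blue = pixel
    return 0.0 if blue - max(red, green) > threshold else 1.0


def composite_row(foreground, background):
    """Keep the foreground where the matte is 1, the background where it is 0."""
    row = []
    for fg, bg in zip(foreground, background):
        matte = blue_matte(fg)
        row.append(tuple(matte * f + (1.0 - matte) * b for f, b in zip(fg, bg)))
    return row


BLUE = (0.0, 0.0, 1.0)    # backing colour
MODEL = (0.6, 0.6, 0.6)   # grey hull, correctly lit
SPILL = (0.2, 0.2, 0.7)   # hull contaminated by blue spill
STAR = (0.05, 0.05, 0.05) # starfield background

foreground = [BLUE, MODEL, SPILL, BLUE]
background = [STAR] * 4
result = composite_row(foreground, background)
print(result)
```

The clean hull pixel survives, but the spill pixel is classified as backing and replaced by starfield, which is the disappearing-model problem the lighting had to prevent.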

The shot count was a problem in its own right. 365 shots. Each shot requiring multiple passes through the optical printer. Each pass requiring the previous passes to have been executed correctly or the whole composite would fail. The sheer logistics of tracking 365 shots through a multi-stage pipeline (knowing which stage each shot was at, which passes had been completed, which elements still needed to be filmed) was an organizational problem as much as a technical one. ILM developed a shot tracking system, essentially a large physical board with cards representing each shot and its status at each stage of the pipeline (now we have ShotGrid). Every morning the supervisors could see at a glance where every shot in the film stood, what was blocking it, what needed to happen next. This sounds mundane but it was a genuine innovation in production management. No VFX facility had needed to track this volume of shots simultaneously before, and the organizational systems for doing it had to be invented alongside the technical ones. The pressure in the final months before delivery was extreme. Shots were being finished and delivered to the optical printer while other shots were still being filmed. The pipeline had to run continuously, because stopping it to troubleshoot a problem in one area meant backing up everything downstream. The team worked around the clock in shifts during the final push.
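The board-and-cards idea maps naturally onto a small data structure. This is a rough sketch of that idea only: the stage names, shot IDs, and the `ShotCard` class are hypothetical and are not taken from ILM's actual board or from any real tracking product.

```python
from collections import Counter
from dataclasses import dataclass, field

# Hypothetical pipeline stages, in order.
STAGES = ("elements_shot", "mattes_generated", "composited", "approved")


@dataclass
class ShotCard:
    shot_id: str
    completed: set = field(default_factory=set)
    blocker: str = ""

    def current_stage(self):
        """Return the first stage not yet completed, or 'done'."""
        for stage in STAGES:
            if stage not in self.completed:
                return stage
        return "done"


def board_summary(cards):
    """Count how many shots are waiting at each stage."""
    return Counter(card.current_stage() for card in cards)


def blocked_shots(cards):
    """List the shots that have a recorded blocker."""
    return [card.shot_id for card in cards if card.blocker]


cards = [
    ShotCard("trench_012", {"elements_shot", "mattes_generated"}),
    ShotCard("opening_001", set(STAGES)),
    ShotCard("dogfight_044", set(), blocker="waiting on model"),
]

summary = board_summary(cards)
print(dict(summary), blocked_shots(cards))
```

The morning glance at the board corresponds to `board_summary` plus `blocked_shots`: where every shot stands, and what is holding it up.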

Despite everything, some shots weren't finished when the prints had to be locked for the May 25 opening. A small number of theaters on opening day received prints with placeholder shots, either rough composites or simply black frames where effects shots hadn't been completed. These were replaced within days as ILM finished the remaining work and new prints were struck and distributed. That this happened with only a handful of shots, in a film with 365 effects shots, after building an entire facility and pipeline from scratch in roughly eighteen months, is a remarkable achievement rather than a failure. The margin by which ILM delivered was terrifyingly thin. But they delivered.

The organizational and technical systems ILM built for Star Wars became the foundation for every subsequent production. The shot tracking methodology, the VistaVision approach to maintaining image quality through multiple generations, the blue screen refinements, the optical printer design — all of it carried forward into The Empire Strikes Back, Raiders of the Lost Ark, and every major production ILM worked on through the 1980s. The pipeline also became a template that other facilities adopted and modified. The modern VFX pipeline — the organizational and technical infrastructure through which a contemporary film's hundreds or thousands of effects shots flow from concept through delivery — is a direct descendant of what ILM built in Van Nuys in 1975 and refined in San Rafael through the decade that followed. The shift to digital compositing in the late 1980s and early 1990s changed the specific tools completely. But the underlying logic — breaking a complex composite into separately filmed or rendered elements, combining them in a controlled pipeline with systematic quality checks at each stage, tracking the status of hundreds of shots simultaneously through a multi-stage process — that logic came from Star Wars.

Model Work

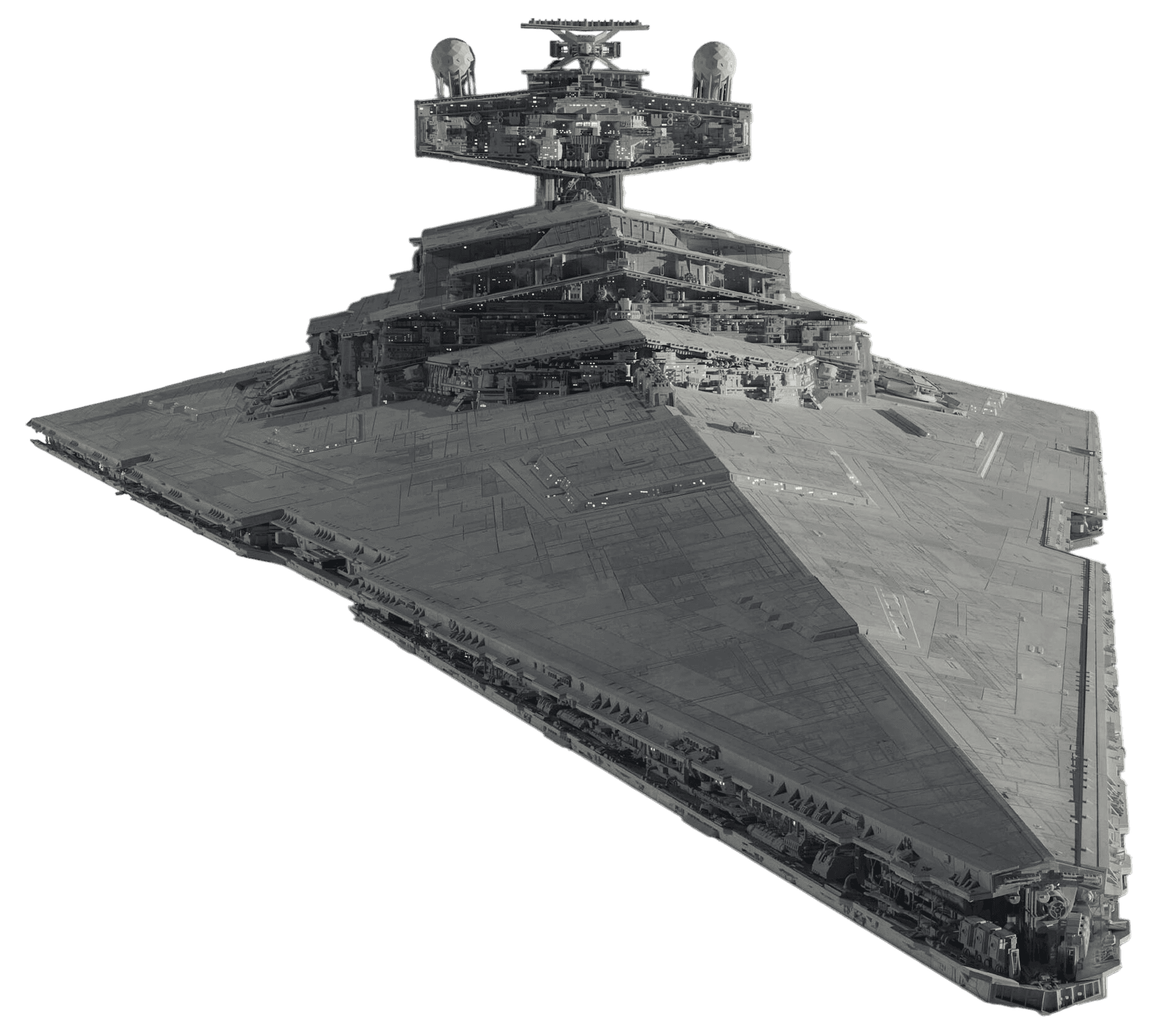

The model work on Star Wars is one of the great unsung achievements of the film — because it worked so well that nobody noticed it, which was entirely the point. The fundamental challenge was scale. Lucas wanted the ships in Star Wars to feel enormous. Not just large — physically, overwhelmingly massive. The Star Destroyer that opens the film, chasing the Rebel Blockade Runner across the frame, needed to feel like something the size of a city. The Death Star needed to feel like a small moon. Creating that sense of scale with physical models required solving a problem that sounds simple and isn't. How do you make a model that's four feet long look like something that's several miles long? The answer ILM developed was surface complexity and the moving camera.

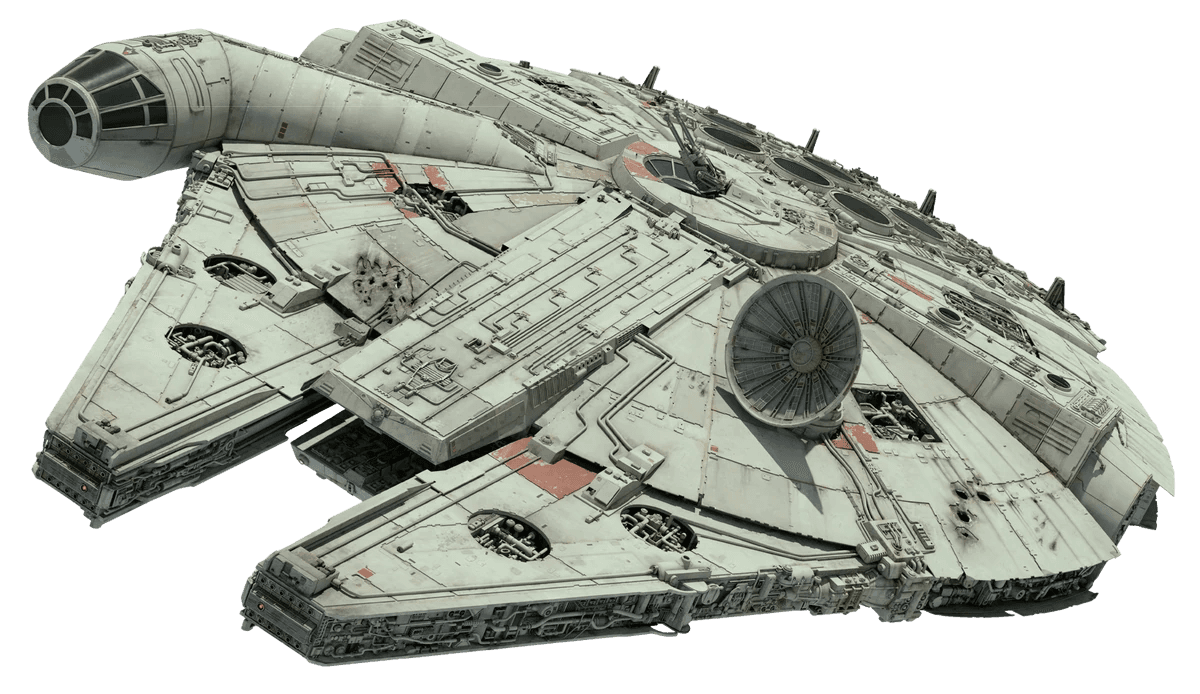

The conventional approach to model building in science fiction films before Star Wars was clean surfaces. Smooth hulls, simple geometries, shapes that read clearly at whatever scale they were being filmed. The models in 2001 are beautiful examples of this — elegant, precise, architecturally coherent. They look exactly like what they are: carefully designed objects. The ILM model makers — led by Grant McCune — took a completely different approach. They covered every surface of every ship model with the maximum possible amount of detail — panels, conduits, vents, machinery, irregular surface textures, recesses, protrusions. The surface of an X-Wing or a Star Destroyer, examined closely, is almost impossibly complex. There is detail on top of detail on top of detail, with no large smooth areas at any scale.

The reason this creates the illusion of size is how human perception calibrates scale. When we look at a real large object — a building, a ship, an aircraft carrier — we expect to see surface complexity that exceeds our ability to take it all in at once. The larger the object, the more detail it has, and the more that detail recedes into illegibility at normal viewing distances. When a model has that same quality — detail that keeps revealing itself the closer you look, detail that you can't fully resolve at the scale you're viewing it — your perceptual system interprets it as large. Clean smooth models look small regardless of how they're filmed because real objects that size wouldn't be smooth. The surface complexity was a deliberate perceptual trick.

The model makers developed a technique called kit bashing — taking commercially available plastic model kits and using their components as surface detail on the ship models. Tanks, cars, aircraft, ships — hundreds of different kits were purchased, disassembled, and their parts were applied to the Star Wars models as surface texture. The result was that the surface detail on the Star Wars ships has an almost fractal quality — at any level of magnification there is recognizable mechanical complexity, because the components being used as detail were themselves detailed objects designed to represent real machinery. A section of hull that from a distance looks like an abstract mechanical surface, examined closely, reveals components that look like they could be actual aircraft parts or engine components. This wasn't just visually effective — it was also practical. Designing and fabricating all that surface detail from scratch would have been impossibly expensive and time-consuming. Kit bashing allowed the model makers to achieve extraordinary surface complexity at a fraction of the cost, in a fraction of the time.

The Millennium Falcon is the most technically complex model built for the film and the one that most clearly demonstrates the scale illusion working at full capacity. The main shooting model was about four feet in diameter. It was built with surface detail derived from hundreds of kit components, with internal lighting systems that illuminated the cockpit and various hull sections, with landing gear that could be extended and retracted, with multiple attachment points for the motion control rig so it could be filmed from different orientations without visible mounting hardware. There were multiple Falcon models of different sizes for different shots. A larger model was used for close-up detail shots where the main model would have been too small to reveal the necessary surface complexity. A smaller model was used for wide shots where the main model would have been too large to fit in the frame at the correct distance from camera. The consistency between these different sized models — making sure the surface detail matched well enough that cuts between them weren't visible — required careful coordination between the model makers and the camera team. Each model had to be filmed at the correct distance and with the correct lens to produce matching apparent scales in the final footage.

The opening shot of the film — the Star Destroyer pursuing the Blockade Runner — was specifically designed to establish the scale language the entire film would operate in. The shot starts with the Blockade Runner crossing the frame from left to right, the camera tilting up to follow it. Then the Star Destroyer enters frame from the top — and keeps coming. And keeps coming. The ship fills more and more of the frame until it dominates everything, and it's still moving, still revealing more of itself, until finally its engines fill the entire frame and the shot ends.

The models for this shot invert the scale seen on screen. The Star Destroyer model was only about three feet long, while the Blockade Runner model was roughly six feet long. The sense of the Star Destroyer being perhaps fifty times the size of the Blockade Runner was created entirely through the timing of the camera move, the relative size of the two ships in the composited frame, and the surface complexity of the Star Destroyer that made it read as something that couldn't be fully taken in. Lucas and the camera team shot this sequence multiple times with different timings for the Star Destroyer's entry and transit across frame. The version in the finished film holds on the Star Destroyer longer than any of the earlier tests, because Lucas kept pushing for more time, more ship, more scale. The audience needed to feel overwhelmed, not just impressed.

The Death Star trench run environment was a large miniature — a section of the Death Star surface built at a scale that allowed the X-Wing models to be filmed moving through it at camera speeds that produced convincing motion blur. The surface detail on the Death Star miniature was even more dense than on the ships — the trench walls were covered with the same kit-bashed complexity, but now extending in all directions simultaneously, creating the sense of an environment rather than a single object. When the camera moved through the trench the detail in the walls rushed past in a continuous blur of mechanical complexity that reinforced the sense of enormous scale and speed. Lighting the trench environment was particularly challenging. The trench had to be lit in a way that was consistent with the exterior lighting of the Death Star established in other shots, while also revealing enough of the trench wall detail to read as a complex physical environment. Too dark and the walls became featureless. Too bright and the models of the X-Wings looked obviously like models against an obviously lit miniature set. The lighting was done with small, carefully positioned lights that could be hidden within the trench geometry or positioned just outside the camera's field of view. The cinematography of the model work — handled largely by Richard Edlund, who became one of ILM's most important technical figures — required the same kind of precise, controlled approach as any other cinematography, applied to objects that were inches rather than feet in size.

The approach ILM developed for Star Wars (surface complexity, kit bashing, multiple scales of model for different shot types, precise coordination between model makers and camera team) became the standard methodology for practical model VFX through the 1980s and into the 1990s. The Empire Strikes Back, Return of the Jedi, the original Battlestar Galactica television series, and countless other productions adopted the same vocabulary. The kit bashing technique in particular became ubiquitous: it was cheap, fast, and produced results that were visually convincing in a way that cleaner, purpose-built model surfaces couldn't match. The technique was eventually superseded by CGI environments and vehicles. Jurassic Park in 1993 began the transition, and by the late 1990s digital ships and environments were replacing physical models on most major productions. But the visual language that CGI science fiction inherited (the surface complexity, the sense of overwhelming scale, the mechanical density of spacecraft and environments) came directly from the physical model work ILM pioneered on Star Wars. The kit-bashed surface detail that Grant McCune applied to the Star Destroyer hull with pieces of tank and aircraft models in 1976 is the direct ancestor of the procedurally generated surface complexity that renders on a computer in seconds today. ☀️
