Filmmaking in the Age of AI(34): The Abyss

more on water tentacles lol

Preface: Yapping…

I’m having a headache, I’m not gonna lie. May in the Bay Area wasn’t supposed to be this cold, or at least I didn’t think so. Between the pressure changes, the rain, and the wind, I’ve been spending more than 12 hours in bed each day, and no amount of sleep is enough. I am tired, hungry yet with no appetite, stressed for no reason, cold, always sneezing, and nursing a light headache. I feel tight, I feel sore. I’m not sure if it’s age catching up with me or if I’m just being overly sensitive.

Last night, I made a few songs with Suno. I wrote a few lines of lyrics, asked Claude to rearrange them for me, then wrote some style notes and put it all into Suno. The results were astounding. Suno doesn’t let you reference existing artists directly, so all you can do is describe what you want to the best of your ability. It got pretty close to what I wanted, or actually better than what I had in mind. I had never made music before, but after a few rounds I, too, started having opinions about how to fine-tune the beats, instruments, and singing style, which isn’t possible with generic prompts. So I think I might get my hands on some mixing equipment eventually. Time to put my baby keyboard skills to use.

This experience is liberating: being able to use AI-powered music production tools just by describing what I want. It makes everything so much easier and a lot less daunting. I wasn’t scared to experiment; like a kid in a candy shop, I was just excited. I get a lot of criticism about how I use AI in my creative process, with people asking whether the original materials used to train the models are credited or paid for. Idk, I don’t really care about anything out in the open on the internet, in the public space, being used to train a model. The moment you decide to make something public, it is technically already exposed to whatever the future holds. People aren’t mad that their things are used “without consent”; making it public is consent. They are mad that something learned from them and turned out better than them. And then there’s the big one: how can you be excited about something that could destroy humanity? Contrary to popular belief, I think only humanity can destroy humanity, not a technology with no mind of its own. It does have observations and opinions, but not a goal. Its only purpose is to be useful, whereas humanity goes way beyond being useful: existing itself is already meaningful enough.

But anyway, back to the movies we were talking about. I found a documentary on Disney+ called “Light & Magic”. It features interviews with the people involved, George Lucas included. It’s possibly easier to watch that than to read my blog, though the two cover mostly different topics. The Abyss is the movie James Cameron made before Titanic. He must have been really into deep-sea stories: first The Abyss, then Titanic, and later Avatar: The Way of Water. It’s true that most directors spend their whole lives making different versions of the same story, since our lives are just different versions of the same story. And that was Cameron’s story: water, the deep sea, spectacular tales. Before Terminator 2, ILM (Industrial Light & Magic) had already worked on The Abyss for James Cameron, creating the water tentacle thingy. That effect was not as difficult as the T-1000, which was made of liquid metal. The T-1000 was harder because water’s reflections and refractions of light don’t have to be as accurate as those of a smooth, perfectly reflective surface like the T-1000’s. The water still required writing mathematical equations into the rendering software, but it was okay if the details were a little off. Not so for the T-1000.

Water Tentacles: Second Take

To simulate the water tentacles digitally, ILM needed software that could answer two separate questions simultaneously. First — what shape are the water tentacles at any given moment? Second — given that shape, what does it look like photographically, with correct water optics? These are two completely different technical problems that had to be solved independently and then combined. Shape is a geometry problem. Appearance is a rendering problem. In 1989 neither had been solved for a fluid organic object at feature film quality.

A rigid object — a spaceship, a building — can be defined by a fixed set of vertices. The geometry doesn't change. You model it once and render it from different angles. We call these rigid bodies. A fluid object has no fixed geometry. Its shape is continuously changing according to physical laws. To represent a fluid object digitally you need a mathematical description not of a fixed shape but of a shape-changing process.
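Here is a toy sketch of that distinction. The vertices and the sway rule are made up for illustration. The rigid object keeps one vertex list, and only its pose changes from frame to frame, so the distances between its vertices never change. The fluid-like object regenerates its vertices from a rule every frame.

```python
import math

# A rigid body: the vertex list is modeled once and never edited.
RIGID_VERTS = [(0.0, 0.0, 0.0), (2.0, 0.0, 0.0), (2.0, 1.0, 0.0), (0.0, 1.0, 0.5)]

def rigid_frame(t):
    """Per frame only the pose changes: rotate about z by t radians, then shift along x by t."""
    c, s = math.cos(t), math.sin(t)
    return [(c * x - s * y + t, s * x + c * y, z) for x, y, z in RIGID_VERTS]

def fluid_frame(t, n=8):
    """Hypothetical fluid column: the vertices themselves are recomputed from t every frame."""
    verts = []
    for i in range(n):
        h = i / (n - 1)  # height fraction along the column, 0 at the base, 1 at the top
        sway = 0.4 * h * math.sin(2 * math.pi * (t - h))  # a shape-changing rule, not a fixed shape
        verts.append((sway, 0.0, 3.0 * h))
    return verts
```

Rendering the rigid object from a new angle only needs a new pose. The fluid object needs the rule to be evaluated again at every frame.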

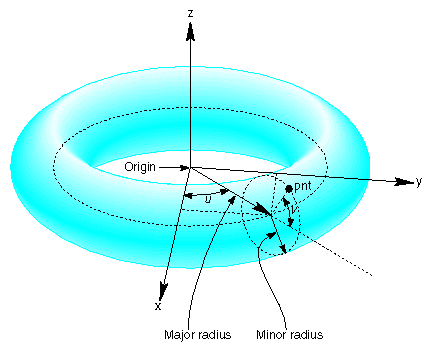

The approach ILM used was parametric surface modeling, specifically a mathematical construct called a swept surface or generalized cylinder. The basic idea is this: define a central spine, a curve running through the center of the water tentacle from base to tip. Then define a cross-sectional profile, the shape of the tentacle's cross-section at any point along the spine. The surface of the tentacle is generated by sweeping that cross-section along the spine.

The spine was defined as a spline curve: a mathematical curve defined by a small number of control points, with the curve smoothly interpolating between them. Move a control point and the spine bends; the software then recalculated the surface geometry for every frame. This gave the animators a manageable set of parameters to control (the positions of a handful of control points) that produced complex, organic-looking surface geometry. Instead of manually positioning thousands of vertices, the animator moved a few control points and the mathematics generated the resulting shape.
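Here is a minimal Python sketch of the swept-surface idea. It is not ILM's software. Several choices are assumptions made for illustration:

- a Catmull-Rom spline for the spine, which is one spline type that passes through its control points;
- a circular cross-section that tapers linearly from base to tip;
- all function names and numbers.

```python
import math

def add(a, b): return tuple(x + y for x, y in zip(a, b))
def sub(a, b): return tuple(x - y for x, y in zip(a, b))
def scale(a, s): return tuple(x * s for x in a)
def dot(a, b): return sum(x * y for x, y in zip(a, b))
def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1], a[2] * b[0] - a[0] * b[2], a[0] * b[1] - a[1] * b[0])
def normalize(a): return scale(a, 1.0 / math.sqrt(dot(a, a)))

def catmull_rom(p0, p1, p2, p3, t):
    """Uniform Catmull-Rom: returns a point between p1 (t=0) and p2 (t=1)."""
    t2, t3 = t * t, t * t * t
    return tuple(
        0.5 * (2 * b + (-a + c) * t + (2 * a - 5 * b + 4 * c - d) * t2 + (-a + 3 * b - 3 * c + d) * t3)
        for a, b, c, d in zip(p0, p1, p2, p3)
    )

def sample_spine(ctrl, per_segment=8):
    """Sample a smooth spine that passes through every control point."""
    pts = [ctrl[0]] + list(ctrl) + [ctrl[-1]]  # duplicate the ends so the curve reaches them
    spine = []
    for i in range(1, len(pts) - 2):
        for k in range(per_segment):
            spine.append(catmull_rom(pts[i - 1], pts[i], pts[i + 1], pts[i + 2], k / per_segment))
    spine.append(ctrl[-1])
    return spine

def sweep(spine, r_base=1.0, r_tip=0.1, ring_size=12):
    """Sweep a tapering circle along the spine; returns one ring of vertices per spine sample."""
    last = len(spine) - 1
    rings, normal = [], None
    for i, p in enumerate(spine):
        tangent = normalize(sub(spine[min(i + 1, last)], spine[max(i - 1, 0)]))
        if normal is None:
            # seed the frame with an axis that is not close to parallel with the first tangent
            normal = (0.0, 0.0, 1.0) if abs(tangent[2]) < 0.9 else (1.0, 0.0, 0.0)
        # carry the frame forward: drop the old normal's component along the new tangent
        normal = normalize(sub(normal, scale(tangent, dot(normal, tangent))))
        binormal = cross(tangent, normal)
        s = i / last                        # 0 at the base, 1 at the tip
        radius = r_base + (r_tip - r_base) * s
        ring = []
        for k in range(ring_size):
            a = 2 * math.pi * k / ring_size
            offset = add(scale(normal, radius * math.cos(a)), scale(binormal, radius * math.sin(a)))
            ring.append(add(p, offset))
        rings.append(ring)
    return rings

# Usage: four hypothetical control points for a tentacle rising and curling over.
CTRL = [(0.0, 0.0, 0.0), (0.5, 0.0, 2.0), (1.5, 0.0, 3.5), (3.0, 0.0, 4.0)]
spine = sample_spine(CTRL, per_segment=8)
rings = sweep(spine, r_base=1.0, r_tip=0.1, ring_size=12)
```

Move any entry in `CTRL` and call both functions again, and every ring is regenerated. Connecting neighboring rings into quads gives the mesh.

The frame along the spine is carried forward by projecting the previous normal onto the new cross-section plane. This is a cheap approximation of parallel transport. It keeps the rings from twisting as long as the spine bends gradually.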

Defining the shape is one problem. Making the shape change over time in a way that looks physically plausible is a completely different one. Real fluid motion is governed by the Navier-Stokes equations, a set of partial differential equations that describe how the velocity, pressure, and density of a fluid change over time and space. Fully resolving Navier-Stokes for a complex fluid is expensive even today. In 1989 it was impractical for a film production, never mind in real time. ILM didn't solve Navier-Stokes; they approximated it. The key approximation was to treat the water tentacle not as a fully simulated fluid but as a procedurally animated object. Its motion was generated by mathematical procedures designed to produce fluid-like behavior, rather than by physically accurate simulation. The specific procedures drew on several mathematical tools.

Sinusoidal wave functions were applied along the spine to produce undulating motion: the tentacle's characteristic rippling as it rises and moves through the air. The amplitude and frequency of these waves were parameters the animators could adjust. Higher frequency produced faster, tighter ripples; lower amplitude produced gentler, more relaxed movement.
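Here is a minimal sketch of sine-driven undulation. It assumes the ripple travels from base to tip at a fixed speed. That, the linear envelope that keeps the base still, and all parameter names are assumptions for illustration.

Holding the speed fixed is what lets one `frequency` knob make the ripples both faster and tighter, since the wavelength is speed divided by frequency. Here `s` is measured as a fraction of spine length, so `speed` is in spine lengths per second.

```python
import math

def wave_offset(s, t, amplitude=0.3, frequency=1.5, speed=2.0):
    """Sideways displacement at spine fraction s (0 = base, 1 = tip) and time t in seconds.

    With speed fixed, raising `frequency` gives faster and tighter ripples
    (wavelength = speed / frequency); lowering `amplitude` calms them down.
    """
    envelope = s  # assumption: pin the base in place and let the tip swing the most
    phase = 2 * math.pi * frequency * (t - s / speed)  # ripple travels from base toward tip
    return amplitude * envelope * math.sin(phase)

def undulate(spine, t, axis=(1.0, 0.0, 0.0), **wave):
    """Push each spine sample along `axis` by the wave offset at its position."""
    last = len(spine) - 1
    return [
        tuple(p + a * wave_offset(i / last, t, **wave) for p, a in zip(point, axis))
        for i, point in enumerate(spine)
    ]

# Usage: a straight vertical spine, displaced at three moments in time.
straight = [(0.0, 0.0, z / 10) for z in range(11)]
frames = [undulate(straight, t, amplitude=0.3, frequency=1.5) for t in (0.0, 0.2, 0.4)]
```

In a full pipeline, the displaced spine would be fed into the swept-surface step, so the surface ripples along with it.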

Noise functions — specifically Perlin noise, developed by Ken Perlin at NYU in 1983 — were layered on top of the wave functions to introduce organic irregularity. Pure sinusoidal waves look mathematical and regular. Adding Perlin noise breaks the regularity in a way that mimics the natural turbulence of real fluid. Perlin noise is a specific type of gradient noise that produces smooth, continuous random variation — it avoids the harsh discontinuities that simpler random functions produce, which would look wrong on a smooth water surface. The combination of wave functions for the large-scale motion and Perlin noise for the small-scale irregularity produced movement that read as genuinely fluid without requiring a full physical simulation.
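Here is a compact 1D version of Perlin-style gradient noise layered on top of a sine wave. It sketches the idea and is not ILM's code. It assumes:

- a seeded table of random slopes;
- the quintic fade curve from Perlin's later "improved noise" (his 1985 version used a cubic);
- four octaves;
- hypothetical scale factors for the jitter.

The noise is zero at every lattice point, and the fade curve is smooth, so the result varies continuously with no jumps. That is the property the paragraph above says makes it look right on water.

```python
import math
import random

class GradientNoise1D:
    """1D Perlin-style gradient noise: a random slope at each integer, smoothly blended between."""

    def __init__(self, seed=0, size=256):
        rng = random.Random(seed)
        self.grads = [rng.uniform(-1.0, 1.0) for _ in range(size)]

    @staticmethod
    def fade(t):
        # Quintic ease curve 6t^5 - 15t^4 + 10t^3 (Perlin's 2002 revision of the 1985 cubic).
        return t * t * t * (t * (t * 6 - 15) + 10)

    def __call__(self, x):
        i = math.floor(x)
        f = x - i  # position inside the lattice cell, in [0, 1)
        g0 = self.grads[i % len(self.grads)]
        g1 = self.grads[(i + 1) % len(self.grads)]
        u = self.fade(f)
        # Each lattice point contributes a line through zero with its own slope; blend the two.
        return (1 - u) * (g0 * f) + u * (g1 * (f - 1))

def fractal_noise(noise, x, octaves=4):
    """Sum octaves, each at double the frequency and half the amplitude; stays within +/-0.5."""
    total, amp, freq, norm = 0.0, 1.0, 1.0, 0.0
    for _ in range(octaves):
        total += amp * noise(x * freq)
        norm += amp
        amp *= 0.5
        freq *= 2.0
    return total / norm

def tentacle_offset(s, t, noise, amplitude=0.3, frequency=1.5, speed=2.0, jitter=0.08):
    """Regular sine for the large-scale motion plus noise for small-scale irregularity."""
    wave = amplitude * s * math.sin(2 * math.pi * frequency * (t - s / speed))
    wobble = jitter * fractal_noise(noise, 4.0 * s + 1.7 * t)  # hypothetical scale factors
    return wave + wobble

# Usage: offsets along the spine at t = 0.5 seconds.
noise = GradientNoise1D(seed=7)
samples = [tentacle_offset(s / 10, 0.5, noise) for s in range(11)]
```

Keeping `jitter` small relative to `amplitude` lets the sine carry the readable motion while the noise only roughens it.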

The face morphing was the sequence's most technically demanding component, and one that had never been attempted before. The starting point was digitizing Mary Elizabeth Mastrantonio's face. ILM used a structured light scanning system: a projector casting a grid of lines onto the face, with cameras capturing how those lines deformed across the facial geometry. From that deformation pattern, the software could calculate the three-dimensional position of points across the face's surface. Scanning technology in 1989 was primitive. The resolution was low by any later standard; the scanned face had far fewer polygons than a modern face scan would produce. The raw scan data required extensive manual cleanup by technical artists, who corrected artifacts, filled gaps in the data, and refined the geometry into a usable model.
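The geometry behind structured light scanning is triangulation. The sketch below assumes an idealized setup, not a description of ILM's 1989 rig:

- the projector and camera sit side by side with parallel optical axes;
- the scanner measures how many pixels a projected stripe shifts (its disparity) relative to where it would land on a surface infinitely far away.

By similar triangles, depth is focal length times baseline divided by that shift. A larger shift means a closer point, such as the tip of a nose. The function name and numbers are hypothetical.

```python
def triangulate(u, v, disparity_px, focal_px, baseline_m, cx, cy):
    """Recover a 3D point (metres, camera coordinates) from one stripe observation.

    Idealized, assumed setup: projector and camera are `baseline_m` apart with parallel
    optical axes, and `disparity_px` is the stripe's shift in pixels relative to where
    it would fall on a surface at infinity.
    """
    if disparity_px <= 0:
        raise ValueError("stripe shift must be positive for a point in front of the camera")
    z = focal_px * baseline_m / disparity_px   # similar triangles: bigger shift, closer surface
    x = (u - cx) * z / focal_px                # back-project the pixel through a pinhole camera
    y = (v - cy) * z / focal_px
    return (x, y, z)

# Usage: the same pixel seen with two different stripe shifts.
near = triangulate(400, 300, 200.0, focal_px=800.0, baseline_m=0.25, cx=320.0, cy=240.0)
far = triangulate(400, 300, 100.0, focal_px=800.0, baseline_m=0.25, cx=320.0, cy=240.0)
```

Running this for every pixel where a stripe is detected yields a cloud of surface points. Pixels where no stripe is found (in shadow, or on hair) produce no point. Those are the kind of gaps the cleanup described above had to fill.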

(writing)