Filmmaking in the Age of AI (36): On-Set VFX Supervisors

When on set, collect everything you can

Preface: Co-written with Claude.

The set is the only time you have the real world. The actors are there. The practical lights are there. The location exists. Everything after this is reconstruction — digital artists trying to match something they weren't present for, using evidence you either collected or didn't. The on-set VFX supervisor's job is to make sure you leave with everything post-production will need, because once you wrap a location, it's gone.

For any shot where something is going to be added, removed, or replaced digitally, you need a clean plate — a take of the same shot with the same camera move, but with the actors, rigs, or foreground objects removed. If a character is going to be composited against a digital background, you shoot the greenscreen with the actor, then you shoot the empty greenscreen without them. If a rig is holding a stunt performer and needs to be painted out, you shoot a pass without the performer so compositors have the background information behind where the rig was. If you skip the clean plate, the compositor has to reconstruct that background from scratch. That takes longer, costs more, and rarely looks as good.

Anything that's going to have digital elements added to it needs to be trackable. Tracking markers — usually small, high-contrast dots or crosses — get placed on greenscreens, on actor suits, on set walls, on any surface that a digital object needs to be locked to. The matchmove team uses these markers to reconstruct the exact camera and object movement in 3D. If the markers weren't placed, or were placed wrong, or got obscured during the shot, the tracking becomes guesswork. The VFX supervisor decides where the markers go and makes sure they're there before the camera rolls.

As mentioned previously, at every new setup — every time the lights change significantly — a set of reference photographs gets taken. A chrome ball and a grey ball are placed at the position of the actor or digital object, and photographed in HDR, meaning at multiple exposures to capture the full range of light in the environment. These photographs become the lighting reference for every digital element that needs to exist in that setup. When a lighting artist is trying to make a digital creature look like it's standing in the same room as the actor, these are what they use. Without them, digital objects get lit by approximation. Approximation is how you get shots that look like they were rendered, not filmed.
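To make "photographed in HDR, meaning at multiple exposures" concrete, here is a minimal sketch of how one pixel from a set of bracketed exposures can be merged into a single relative radiance value. It assumes linear pixel readings in the range 0 to 1 and known shutter times, and it uses a simple hat-shaped weighting. The function name and sample numbers are hypothetical, and real HDR tools also handle camera response curves, alignment, and noise.

```python
def merge_brackets(samples):
    """Estimate relative scene radiance for one pixel from bracketed exposures.

    samples: list of (value, exposure_time) pairs, where value is a linear
    pixel reading in [0.0, 1.0] and exposure_time is in seconds.
    (Linear readings are an assumption of this sketch.)
    """
    weighted_sum = 0.0
    weight_total = 0.0
    for value, exposure_time in samples:
        # Hat weighting: trust mid-range readings, distrust readings that
        # are near black (noisy) or near white (clipped).
        weight = 1.0 - abs(2.0 * value - 1.0)
        weighted_sum += weight * (value / exposure_time)
        weight_total += weight
    if weight_total == 0.0:
        # Every reading was fully black or fully clipped. Fall back to the
        # shortest exposure, which is the least likely to be clipped.
        value, exposure_time = min(samples, key=lambda s: s[1])
        return value / exposure_time
    return weighted_sum / weight_total


# A bright practical light: clipped at 1 s, readable at the shorter exposures.
bracket = [(1.0, 1.0), (0.5, 0.25), (0.125, 0.0625)]
radiance = merge_brackets(bracket)
```

The clipped one-second reading gets zero weight, so the estimate comes entirely from the two exposures that actually recorded the light. That is the reason for bracketing: no single exposure holds both the practical lamp and the shadows.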

Also mentioned previously, for any location or set that will need to be recreated, extended, or navigated by digital elements, LIDAR scanning records the physical geometry of the space as a point cloud — millions of measured points that together describe the exact three-dimensional shape of the environment. If a digital character needs to cast a shadow on a staircase, or a digital vehicle needs to drive through a location, the geometry of that space needs to exist in the computer. LIDAR is how you get it accurately. Without a scan, artists have to model the environment from photographs, which takes longer and introduces error.

Beyond the primary camera, witness cameras are positioned to record the full context of every shot — what the set looked like, where the actors were standing, how the props were positioned, what the lighting rig looked like. When a compositor is building a shot six months later and something doesn't line up, witness camera footage is what they go back to. They're insurance. Every serious VFX shoot has them running continuously.

When an actor is performing opposite something that doesn't exist yet — a digital creature, a character that will be added in post, an explosion that hasn't been simulated — they need something to look at. The VFX supervisor works with the director and the first AD to make sure a stand-in, a tennis ball on a stick, a mark on a greenscreen, or a crew member with a target is positioned at exactly the right place and height. A wrong eyeline is one of the most expensive things to fix in post. You cannot reconstruct a genuine performance, and nothing breaks the illusion of a digital character faster than an actor whose gaze lands three inches from where the character's face is.

A color chart gets photographed at every new lighting setup alongside the actors and set. This gives the post team an absolute color reference, anchoring the footage to known values so that digital elements can be matched to the same color space accurately.

If a digital element has a specific physical size (a creature, a vehicle, a building), something of known dimensions needs to be photographed at the same position in the frame, usually a crew member with a height marker or a physical prop. Digital artists working months later need to know how big the thing is supposed to be relative to everything around it.

All of the above is the responsibility of the on-set VFX supervisor.

Before each shooting day, the VFX supervisor reviews the shot list with the director and the DP. They identify every shot that has VFX implications: not just the obvious digital creature shots, but the background that needs to be extended, the prop that needs to be replaced, the practical effect that needs augmenting. They flag what reference is needed for each one and make sure the crew is ready to capture it.

During the shoot, they are watching the monitor on every VFX shot. They are asking whether the greenscreen is evenly lit, because uneven lighting gives the keyer an inconsistent screen value and makes pulling a clean key much harder in post. They are checking that tracking markers are visible. They are watching eyelines. They are noting in their shot report everything that happened: which takes were clean, which had problems, what issues to flag for the post team.

They are also the translator between the director's creative vision and what is technically achievable. When a director wants a shot that wasn't in the previs, or wants to change an angle on a VFX-heavy sequence, the VFX supervisor is the person who tells them what that change costs in time, money, and complexity, so the director can make an informed decision. That conversation happens in real time, on set, often under pressure. The VFX supervisor has to be direct without shutting down the director's instincts.

They also maintain the VFX shot report, a running document of every VFX shot in the film, updated daily. Shot number, description, what was captured, what's still needed, any issues. This document is the handoff to post. It follows the footage from set to editorial to the vendors.
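As an illustration of the shot report fields listed above, here is a minimal sketch of one entry as a data structure. The class name, field names, and sample shots are hypothetical. Productions typically keep this in a spreadsheet or a production tracking database, not in code.

```python
from dataclasses import dataclass, field


@dataclass
class ShotReportEntry:
    """One row of a VFX shot report (field names are illustrative)."""
    shot_number: str
    description: str
    captured: list = field(default_factory=list)      # reference collected on set
    still_needed: list = field(default_factory=list)  # gaps to fill before handoff
    issues: list = field(default_factory=list)        # notes for the post team

    def ready_for_handoff(self):
        # A shot is ready when nothing is outstanding.
        return not self.still_needed


report = [
    ShotReportEntry(
        shot_number="VFX_010",
        description="Creature enters hallway",
        captured=["clean plate", "HDR reference"],
        still_needed=["LIDAR scan"],
        issues=["tracking marker obscured on take 3"],
    ),
    ShotReportEntry(
        shot_number="VFX_020",
        description="Window view replacement",
        captured=["clean plate", "color chart"],
    ),
]

# Shots that would show up on a pickup list.
outstanding = [entry.shot_number for entry in report if not entry.ready_for_handoff()]
```

Keeping "still needed" as its own field is what makes the daily update useful: the outstanding list is effectively the starting point for any pickup day.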

And at the end of production, they supervise the pickup shoots: the additional days that happen once the edit is closer to locked and specific gaps in the VFX need to be filled. Pickup days are often the most efficient VFX shooting days on the entire production, because by that point everyone knows exactly what they're missing and why.

The on-set VFX supervisor is not a technical consultant who shows up to answer questions. They are responsible for the viability of every VFX shot in the film. If something wasn't captured on set and it needed to be, that is on them. The director and producer need to understand this so they give the VFX supervisor the access, the time, and the authority to do the job properly.

Even on a film where almost everything is digital, there are things you shoot in the real world specifically to be composited into shots later. These are called elements — practical, physical phenomena captured on camera as separate passes, designed to be layered into a shot rather than simulated entirely in software. The most common elements are fire, smoke, explosions, sparks, debris, dust, water splashes, and atmospheric haze. They get shot against black backgrounds — sometimes on the main unit stage, sometimes on a dedicated elements stage — so compositors can isolate them and blend them additively into the image. An additive blend means the black drops out and only the light and color of the element remains, sitting on top of the shot like a layer of reality.
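The additive blend described above is simple enough to show per pixel. This is a minimal sketch assuming linear RGB values between 0 and 1, with the function name and sample colors made up for illustration. Compositing packages offer this as a standard merge operation alongside several variants.

```python
def additive_blend(background, element):
    """Add an element pixel onto a background pixel, clamped at 1.0.

    Both pixels are (r, g, b) tuples of linear values in [0.0, 1.0].
    """
    return tuple(min(1.0, b + e) for b, e in zip(background, element))


plate = (0.2, 0.3, 0.4)          # a pixel from the main shot
black_stage = (0.0, 0.0, 0.0)    # element pixel where nothing is burning
flame = (0.9, 0.5, 0.1)          # element pixel inside the fire

untouched = additive_blend(plate, black_stage)  # black adds nothing
lit = additive_blend(plate, flame)              # fire adds its light on top
```

Because adding zero changes nothing, every black pixel of the element leaves the plate exactly as it was. This is why elements are shot against black and why no matte is needed for this kind of blend.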

The reason you shoot practical elements even when you have the software to simulate them comes down to one thing: physics. Fire behaves like fire. Smoke moves according to actual fluid dynamics. A real explosion has weight, light, and energy that interacts with the environment around it in ways that are extraordinarily difficult to replicate convincingly in a simulation. When you composite a real fire element into a shot, it reads as real because it is real. The camera captured actual combustion. The light falloff, the color temperature shifts, the unpredictable organic movement — none of that has to be calculated, because it already happened.

On a large production, an elements shoot might run for several days or a week, separate from principal photography. A pyrotechnics team designs and executes controlled explosions, fireballs, smoke pours, and sparks at various scales. Everything gets shot at high frame rates — typically 96 to 120 frames per second — so compositors have slow-motion options that give them more control over timing when they cut the element into a shot. Multiple camera angles cover each element so there are options in post. You're building a library. That library matters. A production with a well-shot elements library gives compositors material they can pull from across hundreds of shots. An explosion that was shot once on the elements stage can end up in a dozen different shots at different scales, angles, and opacities. The investment in an element shoot pays out repeatedly across the entire film.
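The slow-motion headroom that high frame rates provide is simple arithmetic. The sketch below assumes a 24 fps delivery rate, which is an assumption of this example and not something stated above. The function names are hypothetical.

```python
def slow_motion_factor(capture_fps, playback_fps=24.0):
    """How many times slower the element plays when conformed to playback_fps."""
    return capture_fps / playback_fps


def screen_duration(event_seconds, capture_fps, playback_fps=24.0):
    """On-screen length of a real-world event shot at capture_fps."""
    return event_seconds * slow_motion_factor(capture_fps, playback_fps)


factor_96 = slow_motion_factor(96)         # 4.0
factor_120 = slow_motion_factor(120)       # 5.0
fireball = screen_duration(0.5, 120)       # a half-second burst lasts 2.5 s
```

A half-second fireball shot at 120 fps gives the compositor two and a half seconds of material at 24 fps. They can retime it back toward real speed or let it play slow, depending on what the shot needs.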

The opposite of a good elements library is a production that tries to simulate everything and then realizes in post that the simulations aren't convincing enough. At that point you're either reshooting, which is expensive, or trying to source stock elements from third-party libraries, which rarely match the look of the film and rarely have the specific scale and behavior you need. Productions that skip element shoots to save money early often spend more money later trying to fix the gap.

There's also a subtler reason to shoot elements on set during principal photography rather than on a separate stage later. When an explosion or a fire practical is shot on the same set, under the same lights, with the same camera, it carries the photographic signature of the film: the same grain, the same color response, the same lens characteristics. That coherence makes compositing easier and the final image more unified. An element shot on a black stage six months later under different conditions requires more work to match, and it shows more often than not.

The VFX supervisor and the special effects coordinator work together to plan the element shoot. The VFX supervisor knows what the compositors will need: what sizes, what angles, what behaviors. The special effects coordinator knows what's achievable practically and safely. Together they design the shoot so that what gets captured is actually usable, not just spectacular.

For shots where the exact same camera move needs to be repeated multiple times — shooting an actor pass, then an empty background pass, then a practical effects pass — a motion control rig records the camera move mechanically and reproduces it identically on each pass. Without motion control, you're trying to match passes by hand in post, which is solvable but time-consuming. For certain composite shots it's the only clean solution.

As mentioned previously, motion control is a mechanized camera system where every movement of the camera — pan, tilt, dolly, crane, focus pull — is recorded as a precise numerical sequence and reproduced by motors exactly on command. The camera doesn't move by hand. It moves by program. Most composite shots are built from multiple passes — separate elements shot at different times that need to be combined into a single image. An actor against a green screen. The same frame without the actor, just the empty set. A separately shot practical explosion. A pass of smoke elements. For all of these to sit together in the final frame without slipping or drifting, the camera has to be in exactly the same position, moving at exactly the same speed, following exactly the same path on every single pass.

A human camera operator cannot do this with the precision compositing requires. A motion control rig can.

The setup process is straightforward. You shoot the first pass (usually the hero pass with the actor) and the rig records every motor position at every frame. That data gets saved. Every subsequent pass replays the same data. The camera follows the identical path to within fractions of a millimeter. When the compositor stacks the layers, everything lines up.

Beyond multi-pass compositing, motion control gets used for in-camera effects that require repeatable timing: a camera that needs to accelerate and decelerate at a precise rate, a complex programmed move that couldn't be executed consistently by hand, or slit-scan and streak photography where exact mechanical repeatability defines the visual effect itself. As also mentioned previously, some of the most iconic shots in film history, including the Star Wars original trilogy's space sequences and the stargate sequence in 2001, were built on motion control rigs because no other method existed to composite that kind of photography.
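The record-and-replay idea described above can be sketched as a small data structure: one sample of axis positions per frame, stored during the hero pass and handed back unchanged for every later pass. The class names and axis fields here are hypothetical and do not reflect any particular rig's data format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AxisSample:
    """Axis positions for a single frame (axes chosen for illustration)."""
    frame: int
    pan_deg: float
    tilt_deg: float
    dolly_m: float
    focus_m: float


class MoveRecorder:
    """Stores a camera move frame by frame and replays it in frame order."""

    def __init__(self):
        self._samples = []

    def record(self, sample):
        self._samples.append(sample)

    def replay(self):
        # Every pass receives the same samples in the same order.
        return iter(sorted(self._samples, key=lambda s: s.frame))


recorder = MoveRecorder()
hero_pass = [
    AxisSample(frame=f, pan_deg=0.5 * f, tilt_deg=0.0, dolly_m=0.01 * f, focus_m=3.0)
    for f in range(1, 5)
]
for sample in hero_pass:
    recorder.record(sample)

clean_plate_pass = list(recorder.replay())
smoke_pass = list(recorder.replay())
```

The point of the sketch is that later passes are not re-performed. They read back the stored numbers, which is why the layers line up when stacked.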

Programming a motion control move takes longer than blocking a conventional camera setup. Executing each pass takes longer. Troubleshooting motor errors or data sync issues takes longer. On a production where every shooting day costs hundreds of thousands of dollars, the decision to use motion control has to be justified against what it would cost to solve the same problem in post without it. For certain shots there is no viable post solution and motion control is the only answer. For others it's a convenience that post could work around at acceptable cost. The VFX supervisor and the director make that call shot by shot.

A greenscreen is a controlled photographic problem that, when set up correctly, gives compositors a clean separation between the subject and the background. When set up badly, it creates work that cascades through post for months. Everything that goes wrong on the greenscreen stage shows up later in roto, in keying, in color matching, and in edge detail. The mistakes compound.

Green is used because it sits far from human skin tones and because digital sensors typically record the most detail in the green channel, which means pulling a key (isolating the subject from the background) creates the least contamination of the actor's color information. Blue is the alternative, and gets used when a costume or a prop is green, or when shooting film rather than digital where blue separates more cleanly from the grain structure.

Even lighting across the screen surface is the first requirement and the one most often neglected under schedule pressure. The screen needs to be lit as uniformly as possible — the same brightness from edge to edge, top to bottom. Hot spots, where one area is significantly brighter than another, create inconsistencies in the key. A keying algorithm works by selecting a range of color values and making them transparent. If the screen varies in value across its surface, the algorithm either clips out too little — leaving greenscreen residue around the subject — or too much, eating into the subject's edges. Fixing a badly lit greenscreen in post is not a matter of pressing a button. It is frame-by-frame remediation.
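The trade-off described above, too little clipped versus too much, can be shown with a deliberately simple hard key. This is a sketch under stated assumptions: a single sampled screen color, a distance threshold in RGB, and made-up pixel values. Production keyers are far more sophisticated and produce soft edges, but the failure mode is the same.

```python
def key_alpha(pixel, screen_color, tolerance):
    """Return 0.0 (transparent) if the pixel is within tolerance of the
    sampled screen color, otherwise 1.0 (opaque). A hard key for illustration."""
    distance = sum((p - s) ** 2 for p, s in zip(pixel, screen_color)) ** 0.5
    return 0.0 if distance <= tolerance else 1.0


screen_sample = (0.1, 0.8, 0.1)   # screen color where the lighting is even
hot_spot = (0.3, 1.0, 0.3)        # same screen, overlit area
subject_edge = (0.4, 0.7, 0.3)    # greenish edge pixel that belongs to the actor

# Tight tolerance: the subject survives, but the hot spot is left behind.
tight_hot_spot = key_alpha(hot_spot, screen_sample, tolerance=0.2)
tight_edge = key_alpha(subject_edge, screen_sample, tolerance=0.2)

# Loose tolerance: the hot spot keys out, and so does the subject's edge.
loose_hot_spot = key_alpha(hot_spot, screen_sample, tolerance=0.6)
loose_edge = key_alpha(subject_edge, screen_sample, tolerance=0.6)
```

With an evenly lit screen, one tight tolerance covers the whole surface. With a hot spot, no single tolerance keeps the edge and removes the screen, which is where the frame-by-frame work comes from.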

Screen distance is the second variable. The actor needs to stand far enough from the screen that the green light reflecting off the screen surface doesn't contaminate their wardrobe, hair, and skin. This contamination is called spill — green color cast that wraps around the subject's edges and sometimes reaches into their face and clothes. Some spill is almost unavoidable and compositors have tools to suppress it. But heavy spill from an actor standing too close to the screen creates semi-transparent green color casts that are extremely difficult to correct without degrading the image quality around the edges of the subject. The rule of thumb is to maintain at least the width of the screen as separation distance. On stages with limited space this gets compromised, and the VFX supervisor has to make a judgment call about how much spill is acceptable versus what the shot requires.
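One simple form of the spill suppression mentioned above is to limit the green channel so it never exceeds the average of red and blue. This is a sketch of that one approach, with a hypothetical function name and sample pixels. It is not a description of what any specific compositing tool does.

```python
def suppress_spill(pixel):
    """Clamp green to the average of red and blue (a basic despill)."""
    r, g, b = pixel
    limit = (r + b) / 2.0
    return (r, min(g, limit), b)


spilled_skin = (0.8, 0.9, 0.6)   # skin tone pushed green by screen bounce
clean_skin = (0.8, 0.5, 0.4)     # skin tone with no spill

corrected = suppress_spill(spilled_skin)
unchanged = suppress_spill(clean_skin)
```

Pixels that were never contaminated pass through untouched. The cost appears with heavy spill, where clamping green removes legitimate color along with the cast. That is the image degradation described above, and it is why distance on set beats correction in post.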

Wrinkles and seams in the screen surface create shadow variations that read as color inconsistency to the keyer. A screen that is tight, flat, and evenly tensioned keys cleanly. A screen with physical texture keys badly. For small-scale setups this gets solved by using seamless painted cycloramas — curved painted walls — instead of fabric screens. For large-scale production shoots with significant camera movement, the screen has to be large enough that the edges never enter the frame regardless of how the camera moves, and tensioned tight enough to eliminate surface variation.

Wardrobe and prop conflicts have to be resolved before the shoot day. Anything on the actor that is close in color to the screen — a green jacket, a khaki costume, a translucent fabric — will key out along with the screen. The costume designer, the VFX supervisor, and the art department need to have that conversation in pre-production, not on the day. If a costume element has to be green for story reasons, the screen switches to blue. If neither is workable, the shot needs a different approach — a clean plate composite, a roto solution, or a practical set element.

Frame line coverage is something the VFX supervisor checks before any take. The greenscreen needs to fill the entire background of the frame for every position the actor moves to, every lens the DP plans to use on that setup, and every angle of the planned camera movement. A screen that fills the frame on the primary lens but clips on a wider lens wastes takes. This gets checked with a monitor at the camera position before the crew is set.
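A rough version of the frame line check can be done on paper before the monitor check. The sketch below assumes a simple pinhole model, a camera centered on and square to the screen, and a sensor width of about 24.9 mm. The sensor width, the function names, and the example distances are all assumptions to be replaced with the real values for the camera and stage in use.

```python
def frame_width_at(distance_m, focal_length_mm, sensor_width_mm):
    """Horizontal width the frame covers at a given distance (pinhole model)."""
    return distance_m * sensor_width_mm / focal_length_mm


def lenses_that_clip(screen_width_m, camera_to_screen_m, lenses_mm,
                     sensor_width_mm=24.9):
    """Return the focal lengths whose frame is wider than the screen."""
    return [
        focal for focal in lenses_mm
        if frame_width_at(camera_to_screen_m, focal, sensor_width_mm) > screen_width_m
    ]


# A 6 m wide screen, camera 8 m back, three lenses planned for the setup.
problem_lenses = lenses_that_clip(6.0, 8.0, [50, 35, 24])
```

In this example the 50 mm and 35 mm lenses stay inside the screen while the 24 mm sees past its edges. The sketch ignores camera movement and off-axis angles, so it is a planning aid only, and the check at the camera position still has to happen.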

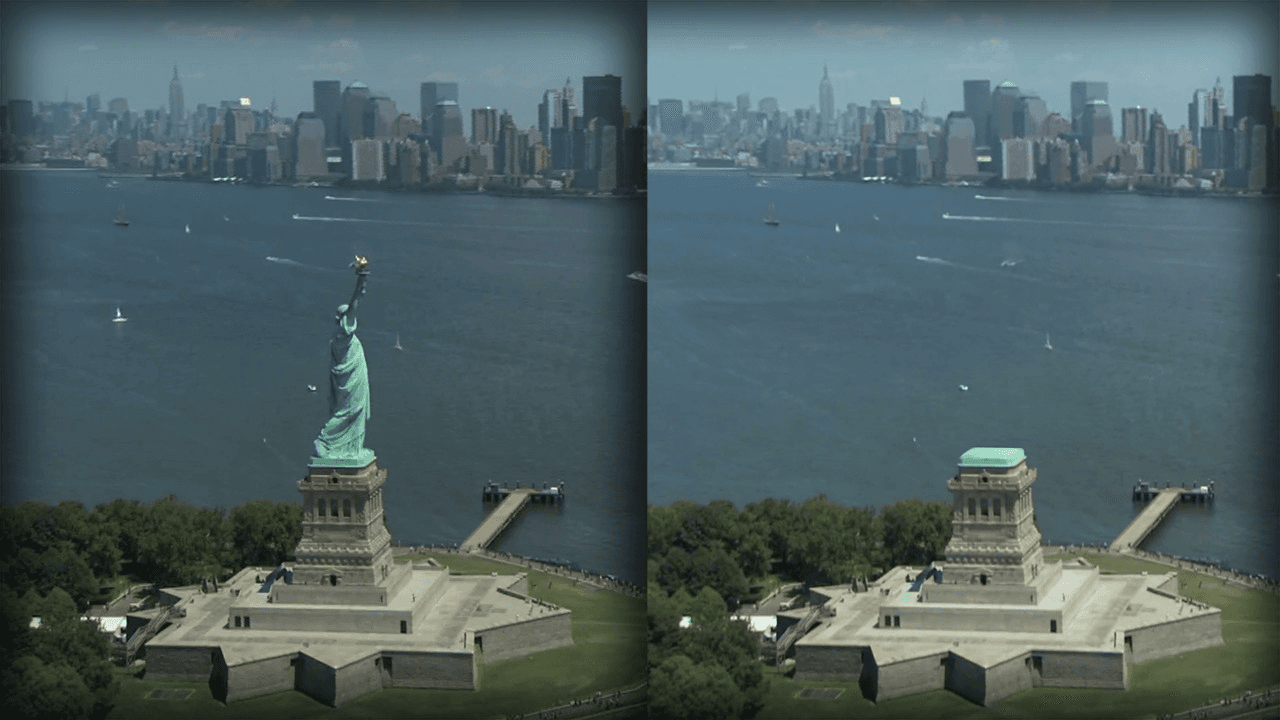

Quality control during the shoot means the VFX supervisor is watching every take on a dedicated monitor, looking specifically at the screen and the edges of the subject, not the performance. The director watches the performance. The VFX supervisor watches the key. After a take that will be used, they may call for the camera to hold position and shoot a reference pass (a few seconds of the empty screen at the same exposure) that gives the keying artist an isolated sample of the screen color. This makes the keying process faster and cleaner in post.

The quality of the screen itself matters as well. It needs to hold the same color tone indoors and outdoors, rain or shine, over the whole period of the shoot. I've personally had to run an experiment, placing different screens side by side on a rooftop to see which brand stayed the most consistent, in order to convince production to approve the purchase of the more expensive one.

Let's talk about the job of a VFX producer next. 🫶🏼