The Case for the Computational Universe: Evidence, Glitches, and Logic

Preface: Co-written with Gemini.

The hypothesis that the universe is not just like a computer but is fundamentally a mathematical information processor has moved from the fringes of science fiction into serious discussion in theoretical physics. By examining the structural evidence and the logical hurdles of this model, we can begin to see a reality that may be far more digital than we ever imagined. The first and perhaps most striking piece of evidence is the existence of universal constants. In physics, values like the speed of light (c), the gravitational constant (G), and Planck’s constant (h) appear as fixed numbers that, as far as we can measure, never change. In a traditional mechanical universe, these are just brute facts or lucky accidents. In the "Universe as a Processor" model, however, they look like hard-coded global variables. Just as a software developer defines the maximum speed of a character or the strength of gravity in a game engine to keep the simulation stable, these constants set the boundary conditions for our reality. If the relationships between them were significantly different, the program would crash: atoms would not form, and stars would not ignite.

Then there is the mystery of quantum entanglement. Albert Einstein famously called this "spooky action at a distance." When two particles are entangled, measuring one instantly fixes what a measurement of the other will show, even if they are separated by light-years. These correlations are stronger than any local, classical picture of three-dimensional space can explain, although they cannot be used to send a message faster than light. On a computer, this kind of linkage is trivial. Two objects on a screen may appear miles apart, but if they both reference the same memory address, a change to the underlying data updates both pixels simultaneously. Entanglement suggests that our 3D space may be merely a front-end rendering, while the back-end logic operates on a non-local data structure where distance is irrelevant.
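The shared-memory picture can be made concrete with a toy sketch. The `Sprite` class and its fields are hypothetical names invented for illustration, and this models only the programming analogy, not quantum mechanics itself (real entanglement produces correlated measurement outcomes, not a controllable shared value).

```python
# Toy model of the "same memory address" analogy (illustrative only).
class Sprite:
    """An on-screen object that displays whatever its shared state says."""

    def __init__(self, name, position, state):
        self.name = name
        self.position = position  # where the object appears on screen
        self.state = state        # reference to a shared, mutable record


shared_state = {"color": "red"}

# Two sprites drawn far apart, both pointing at the same underlying record.
left = Sprite("left", (0, 0), shared_state)
right = Sprite("right", (10_000, 0), shared_state)

# One write to the back-end data changes what both sprites display.
shared_state["color"] = "blue"

print(left.state["color"], right.state["color"])  # blue blue
print(left.state is right.state)                  # True
```

The on-screen distance between the two sprites plays no role in the update, which is the whole point of the analogy: distance lives in the rendering, not in the data.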

Let’s move on to the next mystery, the Planck scale. Classical physics assumed that space and time were infinitely smooth and continuous. However, combining quantum mechanics with gravity points to a smallest meaningful length (the Planck length, about 1.6 × 10^-35 m) and a smallest meaningful time (the Planck time, about 5.4 × 10^-44 s), below which our current theories stop making reliable predictions. Whether spacetime is truly discrete at this scale is still an open question, but if it is, this would be the graininess of reality. It would mirror the architecture of our own technology: the Planck length acts as the pixel resolution of the spatial grid, and the Planck time acts as the period of the system clock. If you try to calculate anything between these units, the universe simply returns a null value. There is no such thing as half a pixel or a fraction of a clock cycle.
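The Planck length and Planck time are not measured directly; they are built from G, ħ, and c using the standard definitions l_P = sqrt(ħG/c^3) and t_P = sqrt(ħG/c^5). A minimal sketch, assuming CODATA 2018 values for the constants (the function names are illustrative):

```python
import math

# CODATA 2018 values in SI units.
HBAR = 1.054571817e-34  # reduced Planck constant, J*s
G = 6.67430e-11         # gravitational constant, m^3 kg^-1 s^-2
C = 299_792_458.0       # speed of light, m/s (exact by definition)


def planck_length(hbar=HBAR, g=G, c=C):
    """Planck length in meters: sqrt(hbar * G / c^3)."""
    return math.sqrt(hbar * g / c**3)


def planck_time(hbar=HBAR, g=G, c=C):
    """Planck time in seconds: sqrt(hbar * G / c^5)."""
    return math.sqrt(hbar * g / c**5)


print(f"Planck length: {planck_length():.3e} m")  # about 1.616e-35 m
print(f"Planck time:   {planck_time():.3e} s")    # about 5.391e-44 s
```

Dividing one by the other gives back c, so in the grid picture the "pixel size" and the "clock period" are tied together by the speed of light.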

Despite the elegance of this model, it faces significant logical bugs. The most persistent question is: Who is the Programmer? A computer, by definition, is a tool created by an external agent to solve a problem. If the universe is a program, does that imply an external designer or an administrator providing the initial input? Some physicists argue that the universe could be a self-organizing system—a recursive program that wrote itself—but this enters the territory of bootstrapping paradoxes that are difficult to resolve without invoking a higher-order reality.

This leads to the hardware problem. Calculations cannot happen in a vacuum; they require a physical medium, like silicon chips or neurons, to store and flip bits. If the entire physical universe is the processor, what is the substrate it is running on? This creates a philosophical Matryoshka doll. If our universe is a simulation running on a computer in a higher dimension, what is that dimension running on? We find ourselves in an infinite loop of hardware requirements, struggling to find the base reality that supports the first machine.

We must ask: What is the output? In human computing, we run a program to get an answer—to calculate a weather forecast or render a frame of video. What is the cosmic calculation trying to solve? Some theorists suggest that the output is the emergence of complexity and consciousness. The universe may be a massive search algorithm designed to find every possible way that matter can become self-aware. In this view, we are not just observers of the calculation; we are the data points currently being processed to see what self-awareness looks like in this specific set of global variables.

The cosmic calculator hypothesis is arguably one of the most evocative models we have for connecting disparate fields of science. It offers a physical reading of entropy: according to Landauer’s Principle, erasing a bit of information must dissipate a minimum amount of heat. On this view, the heat death of the universe is simply the inevitable thermal exhaust of a long-running calculation. It also offers a reason for a universal speed limit. The speed of light isn't just an arbitrary number; it is the bus speed of the system, the maximum rate at which information can be moved from one part of the grid to another.
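Landauer's principle puts a number on this: erasing one bit at temperature T dissipates at least k_B * T * ln(2) of heat. A minimal sketch of that bound (the function name is illustrative, and the two temperatures are just example inputs):

```python
import math

K_B = 1.380649e-23  # Boltzmann constant, J/K (exact in the 2019 SI)


def landauer_limit(temperature_kelvin):
    """Minimum heat, in joules, dissipated by erasing one bit at temperature T."""
    return K_B * temperature_kelvin * math.log(2)


# Room temperature: roughly 2.87e-21 J per erased bit.
print(f"{landauer_limit(300.0):.3e} J")

# Approximate temperature of the cosmic microwave background today.
print(f"{landauer_limit(2.725):.3e} J")
```

The bound scales linearly with temperature, so erasing a bit costs less in a colder universe but is never free above absolute zero.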

In this light, every one of us—every action we take and every thought we think—is a subroutine running within a grand, integrated program. We are not separate from the universe; we are the self-diagnostic tools of the code itself. We are the part of the program that has gained enough complexity to stop and wonder about the hardware it is running on. As we continue to develop our own AI and quantum computers, we aren't just building tools. We are mimicking the very architecture that created us, slowly uncovering the source code of the reality we inhabit.

The idea that the universe’s fundamental constants are "hard-coded" is best illustrated by how much of physics depends on the speed of light (c). In a computational sense, c is not just a speed; it is a conversion factor that appears throughout the equations governing how matter and energy interact. If you were to adjust this single global variable while leaving the other constants untouched, the "source code" of physics would fail to compile, leading to a total system crash across three critical layers of reality.

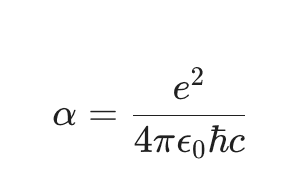

The stability of an atom depends on the balance between the attraction of electrons to the nucleus and the energy levels those electrons occupy. The fine-structure constant (α), which sets the strength of the electromagnetic interaction, is inversely proportional to the speed of light (c). If c were significantly lower, with the other constants held fixed, the electromagnetic force would become too strong. Electrons would be bound far more tightly to the nucleus, and the "energy gaps" required for chemical bonding would shift so drastically that carbon, oxygen, and nitrogen could no longer form the stable molecules we know. In this scenario, the "program" crashes at the BIOS (Basic Input/Output System) level: the basic building blocks of the simulation (atoms) simply fail to initialize.
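The inverse dependence on c can be checked numerically from the standard definition α = e^2 / (4πε₀ħc). The sketch below assumes CODATA 2018 values and treats c as a free parameter while holding e, ε₀, and ħ fixed. That is a thought-experiment assumption rather than established physics, since only changes in the dimensionless α itself are physically meaningful.

```python
import math

# CODATA 2018 values in SI units.
E_CHARGE = 1.602176634e-19    # elementary charge, C (exact)
EPSILON_0 = 8.8541878128e-12  # vacuum permittivity, F/m
HBAR = 1.054571817e-34        # reduced Planck constant, J*s
C = 299_792_458.0             # speed of light, m/s (exact)


def fine_structure(c=C):
    """Fine-structure constant for a given c, other constants held fixed."""
    return E_CHARGE**2 / (4 * math.pi * EPSILON_0 * HBAR * c)


alpha = fine_structure()
print(f"alpha   = {alpha:.6f}")      # about 0.007297
print(f"1/alpha = {1 / alpha:.3f}")  # about 137.036

# Hypothetical "edit" to the global variable: halving c doubles alpha.
print(fine_structure(C / 2) / alpha)
```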

Stars are the universe’s primary engines for creating complexity, but they require a very specific "ignition temperature" at which protons can overcome their electrical repulsion and merge, a process called nuclear fusion. The energy released is governed by Einstein’s most famous equation, E = mc^2. Because c is squared in this equation, it acts as a massive multiplier for how much energy is released when matter is converted. If c were smaller, the energy yield from fusion would drop quadratically: halving c would cut the yield to a quarter. Stars would lack the outward pressure necessary to prevent gravitational collapse, or they would never get hot enough to fuse elements at all. Without the "Output" of stars, the universe would remain a dark, cold cloud of hydrogen and helium, lacking the heavy elements (like iron and gold) that require a functioning stellar "processor" to be synthesized.
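Because c enters as c^2, the energy yield scales with the square of c. A rough sketch, assuming that about 0.7% of the rest mass is released when hydrogen fuses to helium and, as a simplification, that this fraction stays the same when c is changed (in reality the binding energies would shift too):

```python
C = 299_792_458.0  # speed of light, m/s (exact)

# Approximate fraction of rest mass converted to energy in hydrogen -> helium
# fusion. Held fixed here as a simplifying assumption.
MASS_FRACTION_CONVERTED = 0.007


def fusion_energy(mass_kg, c=C, fraction=MASS_FRACTION_CONVERTED):
    """Energy in joules released by fusing mass_kg of hydrogen: f * m * c^2."""
    return fraction * mass_kg * c**2


baseline = fusion_energy(1.0)
print(f"1 kg of hydrogen at our c: {baseline:.2e} J")

# Hypothetical universes with a slower speed of light.
for scale in (1.0, 0.5, 0.1):
    ratio = fusion_energy(1.0, c=C * scale) / baseline
    print(f"c x {scale}: energy x {ratio:.2f}")
```

Halving c leaves a quarter of the energy, and a tenth of c leaves one percent: a steep drop, but a power law rather than an exponential one.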

In our universe, the speed of light is the Maximum Processing Speed: the rate at which cause and effect can propagate. If c were different, the very structure of Spacetime (the "operating system") would change. If c were infinite, a cause could produce effects everywhere at once, blurring the concept of "before" and "after." If c were much slower, the "latency" of the universe would be far higher, and it is hard to see how galaxies and stars could have assembled in the way they did. On this view, the value of c ensures that the "frames" of the universe are rendered at the right speed to allow for stable, long-term evolution.

In the analogy of the Universe as a Computer, comparing the failure of atomic formation to a BIOS-level crash is apt because the BIOS is the very first thing that must "wake up" and function before an operating system (like Windows or macOS) can even begin to load. If fundamental constants like the speed of light (c) or the charge of an electron were significantly off, the "hardware" of the universe would fail to initialize its most basic components. When you turn on a computer, the BIOS performs a Power-On Self-Test (POST) to ensure the RAM, CPU, and hard drive are present and working. In the early universe, the "POST" phase was the transition from a hot plasma of subatomic particles to the formation of the first stable atoms (Recombination). If the electromagnetic force, which is tied to the speed of light, were too strong, electrons would be "captured" too violently or bound too tightly to the nucleus. The "handshake" between a proton and an electron would fail. Without stable atoms, the universe would remain a "frozen" screen of undifferentiated plasma. The system would hang before the "Operating System" (Chemistry and Biology) could ever boot up.

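The BIOS provides the low-level instructions that tell the hardware how to interact. In physics, the fine-structure constant (α) acts as the universal driver for how light and matter interact. It is defined as α = e^2 / (4πε₀ħc) ≈ 1/137, where e is the charge of the electron, ε₀ is the vacuum permittivity, and ħ is the reduced Planck constant.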

The speed of light (c) is built directly into the denominator of this constant. If you change c while leaving the other constants alone, you corrupt the "driver." Suddenly, the instructions for how a carbon atom should share an electron with an oxygen atom are unreadable. In a computer, a corrupted driver means your screen goes black; in the universe, it means the "Software" of Life cannot run because the "Hardware" of Chemistry is throwing a fatal error. Atoms are held together by a delicate balance of forces. If the "code" for these forces is altered, atoms and their nuclei can become unstable. With a much larger c, α would shrink and the electromagnetic grip on electrons would weaken, leaving atoms too loosely bound for the chemistry we know. With a much smaller c, α would grow, electrons would be bound far more tightly, and the stronger repulsion between protons could make even simple nuclei like carbon unstable. This is a Kernel Panic at the scale of reality. It is the ultimate "point of no return." In a kernel panic, the core of the operating system (the kernel) encounters an internal error it cannot handle or recover from, and the entire system halts to prevent further data corruption. You cannot "patch" a universe where atoms don't hold together. If the BIOS-level constants are wrong, the simulation ends before the first frame is even rendered. More on this in the next post. ☀️