The Cosmic Code: Is the Universe a Giant Processor?

Preface: Co-written with Gemini

In 1867, James Clerk Maxwell sat in his study and imagined a tiny, neat-fingered being that could sort molecules. He wanted to pick a hole in the Second Law of Thermodynamics, suggesting that if one had enough information, they could create order from chaos without spending energy. Maxwell did not know it then, but his Demon would eventually lead us to a radical conclusion: information is not just an abstract thought; it is a physical entity. Today, as we stand on the shoulders of giants like Maxwell, Boltzmann, and Landauer, we are forced to ask a deeper question. If information is physical, and the laws of physics resemble algorithms, is the universe itself a giant processor?

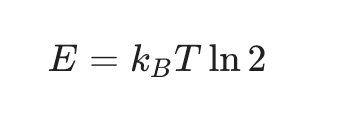

Maxwell’s Demon was the first information engine. By sorting fast, hot molecules from slow, cold ones, the Demon created a temperature gradient: a battery made of knowledge. For a century, this was a paradox because it seemed to offer a free lunch of energy. The key to the resolution came from Rolf Landauer in 1961, and Charles Bennett applied it to the Demon in 1982. The insight was revolutionary: the Demon’s cost is not in its muscles, but in its memory. To keep sorting, the Demon must eventually erase its data to make room for more. Landauer showed that erasing one bit of information releases at least a specific minimum amount of heat. This is the Landauer Limit, defined as E = k_B T ln 2, where k_B is Boltzmann’s constant and T is the temperature of the surroundings.

The universe demands a tax for forgetting. This single principle bridges the gap between the logic of a computer and the heat of a steam engine.

If we treat information as the fundamental currency of reality, John Wheeler’s It from Bit hypothesis becomes hard to ignore. In this view, a particle is not a thing; it is a set of data points: mass, charge, and spin. When particles interact, they are not just bumping into each other like billiard balls; they are exchanging data. This computational architecture rests on four pillars.

First is Maxwell’s Demon, which acts as the logic gate. In a digital computer, a logic gate (like an AND or OR gate) takes input signals and outputs a 1 or a 0 according to a fixed rule. Maxwell’s Demon does the same thing at the molecular level. By observing a particle and deciding whether to open or close a door, the Demon acts as a conditional branching statement: IF (particle == fast) THEN (move to Room B). This is the birth of "Choice" in physics. Without some such "gatekeeping" mechanism, the universe would be a soup of uniform chaos. By acting as a logic gate, the Demon lets a system build structure, separate hot from cold, and create the complex gradients necessary for life. It suggests that the "if-then" logic we use in software is a fundamental capability of the physical world.
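The Demon's IF/THEN rule can be written out as a toy program. This is only a sketch of the analogy, not a physical simulation: the function names, the unit mass, the Gaussian speed model, and the choice of the median as the "fast" threshold are all assumptions made for illustration.

```python
import random


def demon_sort(speeds, threshold):
    """Route each molecule with a single conditional test, like a logic gate."""
    room_a, room_b = [], []
    for speed in speeds:
        if speed > threshold:        # IF (particle == fast)
            room_b.append(speed)     # THEN (move to Room B)
        else:
            room_a.append(speed)     # ELSE (stay in Room A)
    return room_a, room_b


def mean_kinetic_energy(speeds, mass=1.0):
    """Average of (1/2) m v^2; the mass of 1.0 is an arbitrary illustrative unit."""
    return sum(0.5 * mass * v * v for v in speeds) / len(speeds)


# Hypothetical gas: 10,000 speeds drawn from a made-up distribution.
rng = random.Random(0)
speeds = [abs(rng.gauss(0.0, 1.0)) for _ in range(10_000)]

# Assumption: "fast" means faster than the median molecule.
threshold = sorted(speeds)[len(speeds) // 2]
room_a, room_b = demon_sort(speeds, threshold)

print("Room A (slow) mean energy:", mean_kinetic_energy(room_a))
print("Room B (fast) mean energy:", mean_kinetic_energy(room_b))
```

Room B ends up with the higher average kinetic energy, which is the temperature gradient the Demon "builds" out of nothing but yes/no decisions. The sketch leaves out the cost of remembering and erasing each decision, which is where Landauer's bill comes due.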

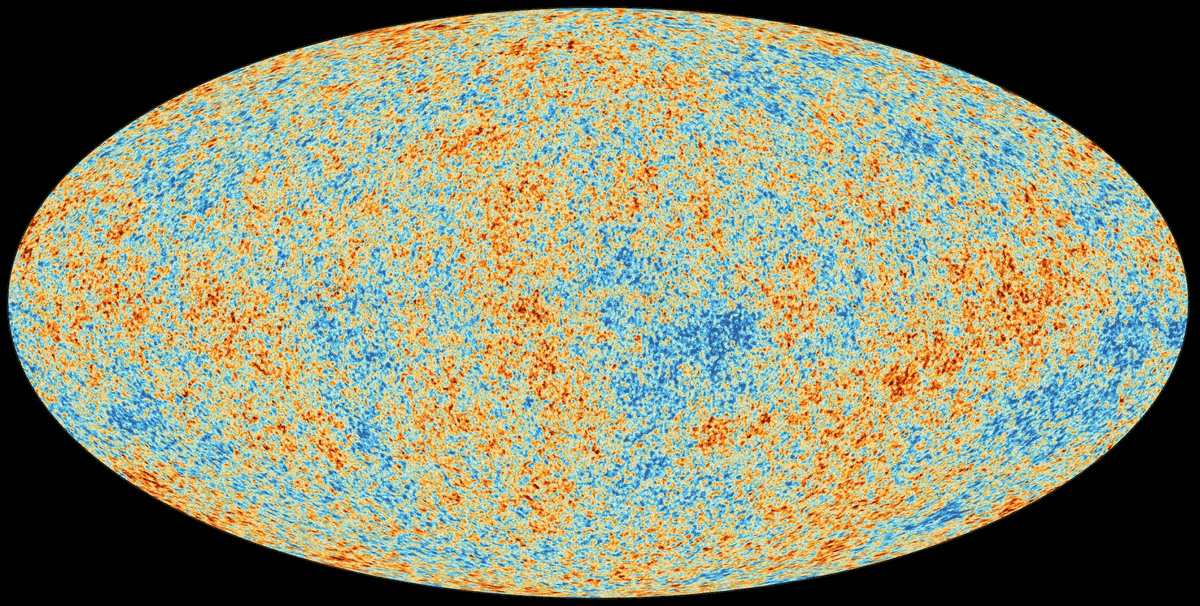

Second is the Boltzmann Distribution, which treats reality as a macro rendering of billions of micro probabilistic calculations. To understand the Boltzmann Distribution as a "Macro Rendering Engine," we have to look at the massive gap between what is actually happening at the atomic level and what we perceive as reality. In a computational sense, this is the difference between the raw data (the backend) and the User Interface (the frontend).

If you could zoom in on a glass of water, you wouldn't see a smooth, still liquid. You would see an enormous number of molecules slamming into each other at over a thousand miles per hour. This is a "noisy" environment. In a traditional "Billiard Ball" model of physics, calculating the future of this glass would require tracking every single collision, a computational task so immense it would overwhelm any finite processor.

James Clerk Maxwell (and later Ludwig Boltzmann, who generalized the result) realized that you don't need to track every individual molecule to predict how the whole behaves. Instead, you can use probability. The Boltzmann Distribution is a mathematical curve that describes the likelihood of a particle having a certain amount of energy at a given temperature. Instead of calculating 1 + 1 + 1 for every molecule, the universe "samples" the crowd. It effectively says something like: "In this system, 10% of molecules will be very fast, 80% will be average, and 10% will be slow" (illustrative numbers, not exact fractions). For macroscopic systems, this distribution is so statistically reliable that deviations are practically never observed. It turns trillions of unpredictable "micro-events" into a single, predictable "macro-state."
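To make the "sampling the crowd" idea concrete, here is a minimal sketch that draws molecular speeds from the Maxwell-Boltzmann distribution and compares the sampled average with the textbook formula for the mean speed, sqrt(8 k_B T / (π m)). The choice of gas (nitrogen), the temperature (300 K), the sample size, and all function names are assumptions for illustration.

```python
import math
import random

K_B = 1.380649e-23              # Boltzmann constant in J/K (exact SI value)
ATOMIC_MASS = 1.66053906660e-27  # unified atomic mass unit in kg


def sample_speeds(n, mass_kg, temp_k, seed=0):
    """Draw n molecular speeds from the Maxwell-Boltzmann distribution.

    Each velocity component is Gaussian with standard deviation
    sqrt(k_B T / m); the speed is the length of the 3D velocity vector.
    """
    rng = random.Random(seed)
    sigma = math.sqrt(K_B * temp_k / mass_kg)
    speeds = []
    for _ in range(n):
        vx, vy, vz = (rng.gauss(0.0, sigma) for _ in range(3))
        speeds.append(math.sqrt(vx * vx + vy * vy + vz * vz))
    return speeds


def theoretical_mean_speed(mass_kg, temp_k):
    """Mean speed predicted by the distribution: sqrt(8 k_B T / (pi m))."""
    return math.sqrt(8.0 * K_B * temp_k / (math.pi * mass_kg))


# Assumed example: nitrogen molecules (about 28 atomic mass units) at 300 K.
M_N2 = 28.0134 * ATOMIC_MASS
speeds = sample_speeds(50_000, M_N2, 300.0)
sampled_mean = sum(speeds) / len(speeds)
expected_mean = theoretical_mean_speed(M_N2, 300.0)

print(f"sampled mean speed:  {sampled_mean:.1f} m/s")
print(f"expected mean speed: {expected_mean:.1f} m/s")
```

No individual speed is predictable, yet the average of the crowd lands very close to the value the formula gives from just two numbers, mass and temperature. That is the compression the paragraph above describes.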

When this statistical calculation is finished, the universe "renders" the result into properties we can actually feel. These are called Emergent Properties. Temperature isn't a "thing" that exists on its own; it is a measure of the average kinetic energy of the crowd. It is the "color" the UI displays to represent how fast the molecules are moving. Pressure is just the statistical average of billions of tiny impacts against a surface. Entropy is a measure of how much information is hidden in the micro-states compared to what we see at the macro-level: the more micro-states that fit the same macro-state, the higher the entropy. In a high-end video game, the computer doesn't render every single leaf on a distant tree if you aren't looking closely; it uses a low-detail "proxy" to save processing power. The Boltzmann Distribution does the same for the laws of physics.

In computer graphics, this technique is known as LOD (Level of Detail). When you are standing right next to a tree in a game, the GPU renders the veins on every leaf. As you move away, the tree is replaced by a simpler 3D model, then a flat 2D "sprite," and finally just a few pixels. This saves immense processing power because the computer only calculates the "resolution" necessary for the observer's perspective. The Boltzmann Distribution is the universe’s version of LOD. It allows the laws of physics to function at a massive scale without needing to resolve the impossible complexity of trillions of individual "micro-states." If you look at a digital photo of a sunset, you see a smooth gradient of orange and red. But if you zoom in deep enough, the gradient disappears, and you are left with square, disjointed pixels. The pixels have no "beauty" or "sunset-ness"; they are just data points.

In physics, the Micro-state plays the role of the pixels. Each entry in it is one specific molecule at one specific coordinate with one specific velocity, and the full Micro-state is the complete list of these for every molecule. On its own, a single molecule has no "temperature" or "pressure"; those concepts don't exist at that resolution. The Boltzmann Distribution "zooms out" and averages those pixels to create the Macro-state. It renders the "image" (Heat) so the universe doesn't have to constantly manage the "pixels" (Molecules). Imagine if the universe had to solve F = ma for every one of the more than 10^{22} molecules in a liter of air every nanosecond just to figure out how a balloon should expand. The "computational overhead" would be so high that the universe would likely "lag" or crash. Instead, the Boltzmann Distribution provides a shortcut. By using the lopsided bell curve of molecular speeds, the universe can predict the behavior of the entire gas from just a few variables, with relative fluctuations on the order of one part in 10^{11} (they scale as 1/√N). It uses "statistical sufficiency": it knows that in a large enough crowd, the outliers cancel each other out.

In computer science and statistics, Statistical Sufficiency is the idea that you don't need every scrap of raw data to understand a system; you only need a specific "summary" that captures all the relevant information. In the "Universe as a Processor" model, the Boltzmann Distribution uses this principle to perform a massive data compression. When you have 10^{23} particles, the "noise" of individual outliers doesn't just fade; relative to the average it shrinks roughly as 1/√N, until it is far too small to measure. In a small group (say, five molecules), one "outlier" (a particle moving at extreme speed) can change the average temperature of the group by 20% or more. This is high-variance, "unstable" code. If our world worked this way, your cup of water might spontaneously boil or freeze because of a few rogue particles. However, the universe scales this up to a "Large Crowd." When you have billions of particles, for every molecule that is "freakishly fast," there is almost certainly another one that is "freakishly slow." These outliers "cancel each other out" during the summation process.
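The difference between five molecules and 10^{23} can be put in one line of math. As a sketch, assume each particle's energy E_i is an independent draw with the same mean μ and standard deviation σ (these symbols are introduced here for illustration and are a simplification of a real gas). The spread of the average then falls as one over the square root of the crowd size:

```latex
\bar{E} = \frac{1}{N}\sum_{i=1}^{N} E_i,
\qquad
\operatorname{Var}\!\left(\bar{E}\right) = \frac{\sigma^{2}}{N},
\qquad
\frac{\sigma_{\bar{E}}}{\mu} = \frac{\sigma}{\mu}\cdot\frac{1}{\sqrt{N}}

% Two illustrative crowd sizes:
%   N = 5        =>  1/\sqrt{N} \approx 0.45
%   N = 10^{23}  =>  1/\sqrt{N} \approx 3.2 \times 10^{-12}
```

With five particles the average can swing by a large fraction of its own value. With 10^{23} the same swing is suppressed by a factor of a few trillionths, which is why the macro-state looks perfectly steady.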

To see why outliers "cancel each other out," we have to look at the mathematics of aggregation and the physical reality of how particles interact. In a large system, the behavior of the "average" becomes incredibly stable because the random "noise" of individual particles undergoes a process of statistical smoothing. The key mathematical result here is the Law of Large Numbers. Imagine you are flipping a coin. If you flip it only ten times, you might get eight heads, which is an 80% outlier. In such a small sample, a few random "fast" or "slow" events dominate the result. However, if you flip that same coin ten thousand times, the probability of maintaining an 80% heads ratio becomes virtually zero. This is not because the coin "owes" you a matching streak of tails; each flip is independent. It is because any streak becomes a smaller and smaller fraction of a growing total. When you sum everything into an average, the deviations from the mean shrink relative to the total size of the system. In a box of gas containing 10^{23} particles, the outliers are still present, but they become mathematically invisible to the final average.
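The coin-flip argument is easy to check numerically. The sketch below repeats the experiment many times at two sample sizes and records the worst deviation from 50% heads seen at each size. The trial count, seed, and function names are arbitrary choices for illustration.

```python
import random


def heads_fraction(n_flips, rng):
    """Fraction of heads in n_flips independent fair coin flips."""
    heads = sum(1 for _ in range(n_flips) if rng.random() < 0.5)
    return heads / n_flips


def worst_deviation(n_flips, trials, seed=0):
    """Largest distance from 0.5 seen across many repeated experiments."""
    rng = random.Random(seed)
    return max(abs(heads_fraction(n_flips, rng) - 0.5) for _ in range(trials))


# Repeat each experiment 200 times and keep the most extreme result.
small = worst_deviation(10, trials=200)
large = worst_deviation(10_000, trials=200)

print(f"worst deviation with 10 flips:     {small:.3f}")
print(f"worst deviation with 10,000 flips: {large:.3f}")
```

Even the most extreme of 200 large experiments stays within a few percentage points of 50%, while the small experiments routinely produce lopsided results like the eight-heads-in-ten example.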

We can also find a visual illustration in the transition from "Static" to a solid "Image." Think of an old analog television set that isn't tuned to a station. You see "snow" or white noise, where each black or white dot represents a micro-state or an outlier. If you look at a single dot, it is jumping wildly between black and white, making it fundamentally unstable. But if you step back and look at the whole screen, you see a uniform, solid gray. That gray is the Macro-state. The white dots (fast outliers) and black dots (slow outliers) are mixed so thoroughly that their individual extreme values cancel each other out at the resolution of your eye. The universe treats gas molecules in the same way; the "gray" we see and feel is what we call Temperature.

In the physical world, this cancellation is the reason water doesn't spontaneously explode. In a glass of lukewarm water, there are always molecules moving fast enough to be steam and others moving slow enough to be ice. If enough of these fast outliers were to cluster together even for a millisecond by pure chance, a tiny pocket of water would spontaneously boil and burst. This essentially never happens, because of spatial and temporal cancellation. At any given moment, for every fast molecule moving in one direction, there is almost certainly a fast molecule moving in the opposite direction. Their impacts on a container wall average out to a steady Pressure with negligible fluctuation. Furthermore, when a fast molecule hits a slow one, they exchange energy, redistributing the extreme "outlier" energy back toward the mean. This averaging allows the universe to maintain stable laws (like the Gas Laws or Thermodynamics) without having to be "aware" of every single atom. It creates the illusion of a solid, stable world out of a reality that is actually a buzzing, chaotic cloud of probabilities.

A modern video game doesn't track every individual photon of light; instead, it uses shaders and rendering engines to create a smooth visual surface based on statistical approximations. The Boltzmann Distribution is the universe’s Rendering Engine. On a microscopic level, trillions of gas molecules are crashing into each other with chaotic, unpredictable speeds. It is a "noisy" data set. However, the Boltzmann Distribution applies a statistical "filter" over this noise. It calculates the most probable state of the system, which emerges to us as Temperature and Pressure. When you feel the warmth of the sun, you aren't feeling individual particles; you are experiencing the "Macro UI" of a massive, probabilistic calculation. Reality is a low-resolution interface of a high-resolution microscopic calculation.

The third piece of this puzzle is Landauer’s Principle, representing the power consumption of the system. No computer can run without a power supply, and every calculation generates waste heat. Landauer’s Principle represents the Physical Cost of Computation. In our universe, information is never free. Every time a physical process becomes "irreversible" (meaning information is lost or a state is reset), the universe "bills" the system by releasing heat. In this picture, that bill is closely tied to why the universe's Entropy keeps growing. If every process could run forever without losing information, it would be reversible, and the universe would not have to "heat up" and eventually die. But because the Landauer Limit exists (at least E = k_B T ln 2 of heat per erased bit, where k_B is Boltzmann’s constant and T is the temperature), every irreversible "operation" performed by stars, planets, and even our own brains adds to the total heat of the system. This heat is the exhaust of the cosmic processor, a sign that the universe is actively "working" and degrading usable energy to evolve its state.
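The size of this "tax" follows directly from the formula E = k_B T ln 2. As a minimal sketch, the snippet below evaluates it at an assumed room temperature of 300 K and scales it up to one gigabyte (taken here as 8 × 10^9 bits). The function names and example values are illustrative assumptions.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant in J/K (exact SI value)


def landauer_limit(temp_k):
    """Minimum heat in joules released by erasing one bit at temperature temp_k."""
    return K_B * temp_k * math.log(2)


def erasure_cost(n_bits, temp_k):
    """Lower bound on the heat released by erasing n_bits at temperature temp_k."""
    return n_bits * landauer_limit(temp_k)


ROOM_TEMP_K = 300.0        # assumed room temperature
ONE_GIGABYTE_BITS = 8e9    # assumed: 1 GB = 10^9 bytes of 8 bits each

per_bit = landauer_limit(ROOM_TEMP_K)                   # about 2.87e-21 J
per_gigabyte = erasure_cost(ONE_GIGABYTE_BITS, ROOM_TEMP_K)

print(f"per bit at 300 K:  {per_bit:.3e} J")
print(f"per gigabyte:      {per_gigabyte:.3e} J")
```

The bound is tiny, about 3 zeptojoules per bit, and it is a floor rather than a typical cost: real hardware dissipates far more than this per operation.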

Finally, there is Quantum Entanglement, which functions like a shared memory address, keeping two particles correlated regardless of distance. One of the hardest things to explain in "Billiard Ball Physics" is how measurements on two particles can stay perfectly correlated across light-years. In a traditional network, a signal must travel from point A to point B, which takes time. However, in a computer, two different variables can point to the same Memory Address (a pointer). Quantum Entanglement suggests that the universe has a "Back-End" where distance does not exist. If two particles are entangled, they are essentially the same "Object" in the cosmic code. When you measure particle A, the result you would get for particle B is fixed at once, because they aren't two separate files; they are two different "shortcuts" on the desktop leading to the exact same data on the hard drive. The analogy has one important limit: you cannot choose the value you "write," so entanglement cannot be used to send a message faster than light. What it does provide is instantaneous correlation, allowing the cosmic program to maintain consistency across its massive "distributed system."
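The shared-memory picture is easy to show in code. This sketch illustrates only the programming analogy used above, two names bound to one object. It is not a model of quantum mechanics, where the outcome of a measurement cannot be chosen. The class and variable names are hypothetical.

```python
class SharedState:
    """A single record in memory; the 'data on the hard drive' in the analogy."""

    def __init__(self, value=None):
        self.value = value


# One underlying object, reachable through two different names.
record = SharedState()
particle_a = record   # first "shortcut"
particle_b = record   # second "shortcut" to the same object

# Updating through one name is visible through the other with no copying
# and no message passed between them.
particle_a.value = "spin up"

print(particle_b.value)           # same data seen through the other name
print(particle_a is particle_b)   # both names refer to one object
```

Nothing travels from `particle_a` to `particle_b` here, because there was only ever one object. That is the intuition the analogy is meant to carry, with the caveat that real entangled particles give random, correlated outcomes rather than values an experimenter can set.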

When combined, these principles create a complete machine. Maxwell’s Demon provides the logic, Boltzmann renders the interface, Landauer manages the power and heat, and Entanglement handles the high-speed data bus. This isn't just a metaphor—it is a description of how the universe maintains order, processes change, and manages resources. More on this in the next post.☀️