Filmmaking in the Age of AI(42): Modeling

Preface: Welcome to this very long series on filmmaking in the age of AI (2026).

Before anything moves, before a single light is placed, before a render farm is given a frame to calculate — something has to exist in three dimensions. Modeling is where that happens. It is the first stage of the CG pipeline and the foundation every other department builds on. If the geometry is wrong, nothing downstream can fix it. The animator cannot deform a mesh that wasn't built to deform. The lighter cannot place a virtual light on a surface that doesn't have the right shape. The compositor cannot match the live plate to a CG element that doesn't hold up at the angle the camera requires. The model is the origin point. It has to be right before anything else can start.

How it began

In 1963, Ivan Sutherland built Sketchpad at MIT — a program that let a user draw directly on a screen with a light pen, creating geometric shapes the computer could store and manipulate. It was the first interactive computer graphics system and the conceptual origin of everything that followed. Sutherland's students at the University of Utah, which became the defining research center of early computer graphics, extended the work in every direction.

In 1972, Edwin Catmull and Fred Parke produced what is recognized as the first 3D rendered film — a six-and-a-half-minute clip of Catmull's left hand, modeled by drawing polygons directly onto a physical cast of the hand, measuring each vertex by hand, and entering the coordinates into a computer. The hand moves. The surface is visibly polygonal, flat-faced, a mesh of triangles and quads that approximates the shape of an actual hand without convincingly representing it. It was not cinematic. It was proof that the concept worked.

By 1975, Martin Newell at Utah had modeled the Utah teapot: a simple ceramic teapot described by 28 bicubic Bézier patches hand-measured from an actual Melitta teapot. It became the standard test object for computer graphics research for decades. If you have ever seen a CGI teapot in a technical paper, a renderer demonstration, or a software tutorial, it is almost certainly the Utah teapot.

The first major feature film to use significant computer-generated imagery was Tron in 1982. The CG sequences were built from geometric primitives and wireframe structures — recognizably digital, intentionally so, set in a world that was supposed to look like the inside of a computer. The models were simple by any standard. But they moved. They existed in three-dimensional space, they could be lit, they could be photographed from any angle. The pipeline was established.

Three approaches, one problem

The fundamental problem in 3D modeling is representing a continuous surface — the smooth curve of a shoulder, the hard edge of a steel panel, the pore structure of skin — using discrete mathematical data. Three approaches emerged, each with different strengths.

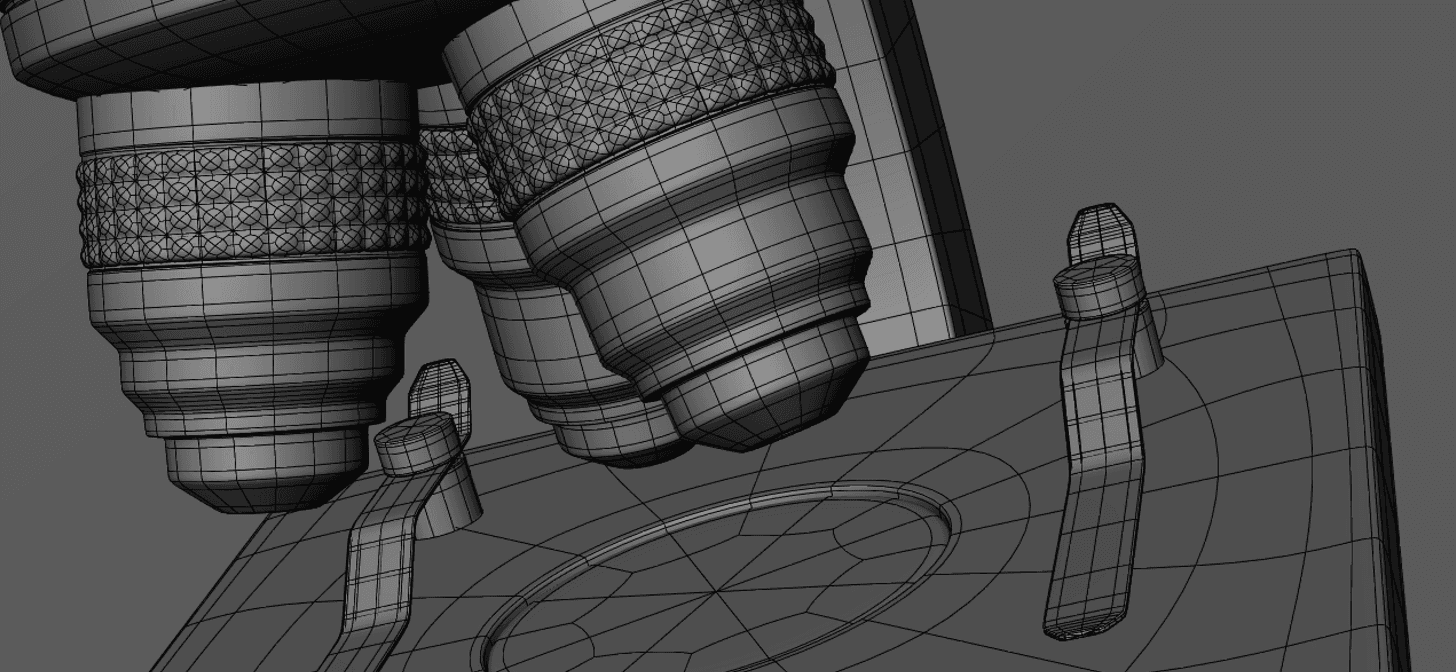

Polygon modeling represents surfaces as a mesh of flat faces — triangles and quadrilaterals connected at vertices and edges. It is direct, intuitive, and computationally fast. The artist pushes and pulls points in space, extruding faces to create volume, cutting loops into the mesh to add detail where needed. Every polygon is flat, which means curved surfaces are only approximated — a sphere is not truly smooth, it is a polyhedron with enough faces that the eye reads it as smooth. The finer the mesh, the smoother the apparent surface. Polygon modeling is the dominant approach in VFX and game production because it is flexible, controllable, and maps cleanly to the way GPUs render geometry.
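
The trade-off between face count and apparent smoothness can be measured directly. The sketch below is an illustration only, not a production tool: it builds a simple latitude/longitude sphere and reports how far the flattest-looking face sits below the true surface. The names `uv_sphere` and `max_gap` are made up for this example, and the face centroid is used as a convenient stand-in for "the middle of the flat face".

```python
import math

def uv_sphere(segments, rings, radius=1.0):
    """Build a UV sphere: triangle fans at the poles, quad bands in between."""
    verts = [(0.0, 0.0, radius)]                       # north pole
    for r in range(1, rings):                          # interior latitude rings
        phi = math.pi * r / rings
        for s in range(segments):
            theta = 2.0 * math.pi * s / segments
            verts.append((radius * math.sin(phi) * math.cos(theta),
                          radius * math.sin(phi) * math.sin(theta),
                          radius * math.cos(phi)))
    verts.append((0.0, 0.0, -radius))                  # south pole
    south = len(verts) - 1

    def idx(r, s):
        # Index of the vertex on ring r, wrapping around the seam.
        return 1 + (r - 1) * segments + (s % segments)

    faces = []
    for s in range(segments):                          # polar triangles
        faces.append((0, idx(1, s), idx(1, s + 1)))
        faces.append((south, idx(rings - 1, s + 1), idx(rings - 1, s)))
    for r in range(1, rings - 1):                      # quad bands
        for s in range(segments):
            faces.append((idx(r, s), idx(r + 1, s),
                          idx(r + 1, s + 1), idx(r, s + 1)))
    return verts, faces


def max_gap(verts, faces, radius=1.0):
    """Largest distance between a face centroid and the true sphere surface."""
    worst = 0.0
    for face in faces:
        centroid = [sum(verts[i][k] for i in face) / len(face) for k in range(3)]
        worst = max(worst, radius - math.sqrt(sum(c * c for c in centroid)))
    return worst


for segments, rings in [(8, 4), (32, 16), (128, 64)]:
    v, f = uv_sphere(segments, rings)
    print(f"{len(f):5d} faces -> largest gap {max_gap(v, f):.5f}")
```

The gap shrinks as faces are added but never reaches zero, which is the sense in which a polygon sphere is always a polyhedron.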

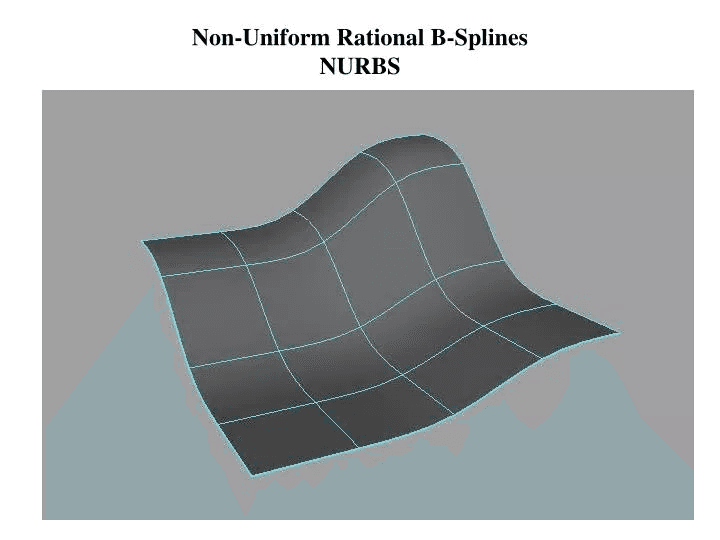

NURBS (Non-Uniform Rational B-Splines) take a different approach. Rather than defining a surface through explicit flat faces, NURBS describe it through mathematical curves controlled by weighted points that pull the surface toward them without the surface needing to pass through them. A NURBS surface is inherently smooth at any resolution. It has no polygons, only a mathematical description that the renderer evaluates at whatever precision is required. The mathematics behind NURBS grew out of the aerospace and automotive industries, notably the curve work of Pierre Bézier at Renault and Paul de Casteljau at Citroën, where the precision of a body panel curve had engineering consequences. Alias software, which became the dominant NURBS tool in film and industrial design, was used extensively for hard-surface modeling in the 1990s. NURBS remain in use for vehicles, mechanical objects, and any asset where precise surface continuity matters. For organic characters, they are unwieldy.
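
The effect of a weighted control point is easiest to see on a single curve. The sketch below evaluates a rational quadratic Bézier segment, which is the simplest special case of a NURBS curve rather than a full NURBS implementation (no knot vector, one segment only). The function name `rational_bezier` and the sample control points are assumptions chosen for illustration. With the middle weight set to √2/2 the curve traces an exact quarter circle; with all weights equal it becomes an ordinary parabola that only resembles one.

```python
import math

def rational_bezier(points, weights, t):
    """Evaluate a rational quadratic Bezier curve at parameter t in [0, 1]."""
    basis = [(1 - t) ** 2, 2 * t * (1 - t), t ** 2]    # Bernstein polynomials
    denom = sum(b * w for b, w in zip(basis, weights))
    x = sum(b * w * p[0] for b, w, p in zip(basis, weights, points)) / denom
    y = sum(b * w * p[1] for b, w, p in zip(basis, weights, points)) / denom
    return x, y

# Three control points; the curve starts at the first and ends at the last,
# but is only pulled toward the middle one.
control = [(1.0, 0.0), (1.0, 1.0), (0.0, 1.0)]
circle_weights = [1.0, math.sqrt(2) / 2, 1.0]          # exact quarter circle
equal_weights = [1.0, 1.0, 1.0]                        # plain (non-rational) Bezier

for t in (0.0, 0.25, 0.5, 0.75, 1.0):
    cx, cy = rational_bezier(control, circle_weights, t)
    px, py = rational_bezier(control, equal_weights, t)
    print(f"t={t:.2f}  weighted radius={math.hypot(cx, cy):.6f}  "
          f"unweighted radius={math.hypot(px, py):.6f}")
```

Neither curve passes through the middle control point at (1, 1). Changing its weight changes how hard it pulls, and that is the extra control the "rational" in NURBS refers to.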

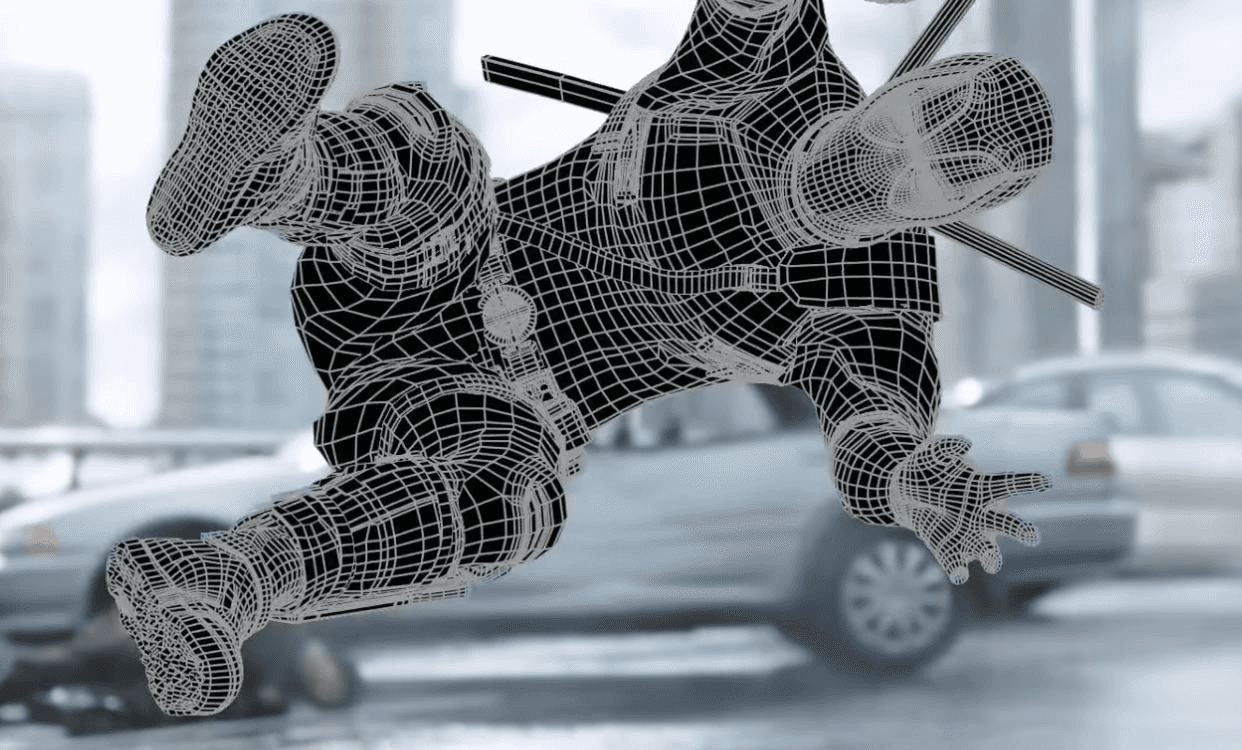

Subdivision surfaces resolved the tension between the two. Introduced by Edwin Catmull and Jim Clark in 1978, subdivision surfaces work by taking a coarse, low-resolution polygon cage and applying a recursive refinement algorithm that smooths the mesh toward a mathematically continuous limit surface. The artist controls the overall shape with the low-resolution cage (relatively few points, easy to manipulate) and the subdivision algorithm generates the smooth result. Sharp edges can be introduced by adding edge loops or applying crease weights. Pixar was using Catmull-Clark subdivision in production by 1997, with Geri's Game as an early showcase, and published its extensions, including semi-sharp creases, at SIGGRAPH the following year. It has been standard in feature animation and VFX work ever since.
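
One refinement step of the Catmull-Clark scheme fits in a few dozen lines. What follows is a minimal sketch under stated assumptions: the mesh is closed (every edge is shared by exactly two faces), every vertex is used by at least one face, and creases are not handled. The function name `catmull_clark` and the cube data are illustrative, not taken from any production library.

```python
from collections import defaultdict

def avg(points):
    """Component-wise average of a list of 3D points."""
    n = len(points)
    return tuple(sum(p[k] for p in points) / n for k in range(3))

def edge_key(a, b):
    return (min(a, b), max(a, b))

def catmull_clark(verts, faces):
    """One Catmull-Clark step on a closed polygon mesh. Returns (verts, quads)."""
    face_pts = [avg([verts[i] for i in f]) for f in faces]

    edge_faces = defaultdict(list)     # edge -> faces that share it
    vert_faces = defaultdict(list)     # vertex -> faces that touch it
    for fi, f in enumerate(faces):
        for k, v in enumerate(f):
            edge_faces[edge_key(v, f[(k + 1) % len(f)])].append(fi)
            vert_faces[v].append(fi)

    # Edge point: average of the two endpoints and the two adjacent face points.
    edge_pts, vert_edges = {}, defaultdict(list)
    for (a, b), fs in edge_faces.items():
        edge_pts[(a, b)] = avg([verts[a], verts[b]] + [face_pts[fi] for fi in fs])
        vert_edges[a].append((a, b))
        vert_edges[b].append((a, b))

    # Moved original vertex: (F + 2R + (n - 3)P) / n, with n the vertex valence.
    moved = []
    for vi, p in enumerate(verts):
        n = len(vert_edges[vi])
        F = avg([face_pts[fi] for fi in vert_faces[vi]])
        R = avg([avg([verts[a], verts[b]]) for a, b in vert_edges[vi]])
        moved.append(tuple((F[k] + 2 * R[k] + (n - 3) * p[k]) / n for k in range(3)))

    # New vertex list: moved originals, then face points, then edge points.
    out = moved + face_pts
    edge_idx = {}
    for e, p in edge_pts.items():
        edge_idx[e] = len(out)
        out.append(p)

    # Each original face with n corners becomes n quads.
    quads = []
    for fi, f in enumerate(faces):
        for k, cur in enumerate(f):
            prev, nxt = f[k - 1], f[(k + 1) % len(f)]
            quads.append((cur, edge_idx[edge_key(cur, nxt)],
                          len(verts) + fi, edge_idx[edge_key(prev, cur)]))
    return out, quads

# A cube as the control cage: 8 points, 6 quads.
cube_verts = [(-1, -1, -1), (1, -1, -1), (1, 1, -1), (-1, 1, -1),
              (-1, -1, 1), (1, -1, 1), (1, 1, 1), (-1, 1, 1)]
cube_faces = [(0, 3, 2, 1), (4, 5, 6, 7), (0, 1, 5, 4),
              (1, 2, 6, 5), (2, 3, 7, 6), (3, 0, 4, 7)]

v1, f1 = catmull_clark(cube_verts, cube_faces)
v2, f2 = catmull_clark(v1, f1)
print(len(cube_faces), "->", len(f1), "->", len(f2), "faces")
```

Each pass quadruples the number of quads and pulls the cube's corners inward, so repeated passes converge on a rounded blob. The artist only ever edits the eight original points.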

ZBrush and the end of clay

Before ZBrush, the process for creating detailed organic assets — creatures, characters, any surface with complex high-frequency detail like skin, scales, or muscle definition — was to sculpt a physical clay maquette, scan it with a 3D scanner to generate digital geometry, and then clean up and retopologize the resulting mesh for use in production. The process was expensive, slow, and dependent on physical fabrication infrastructure.

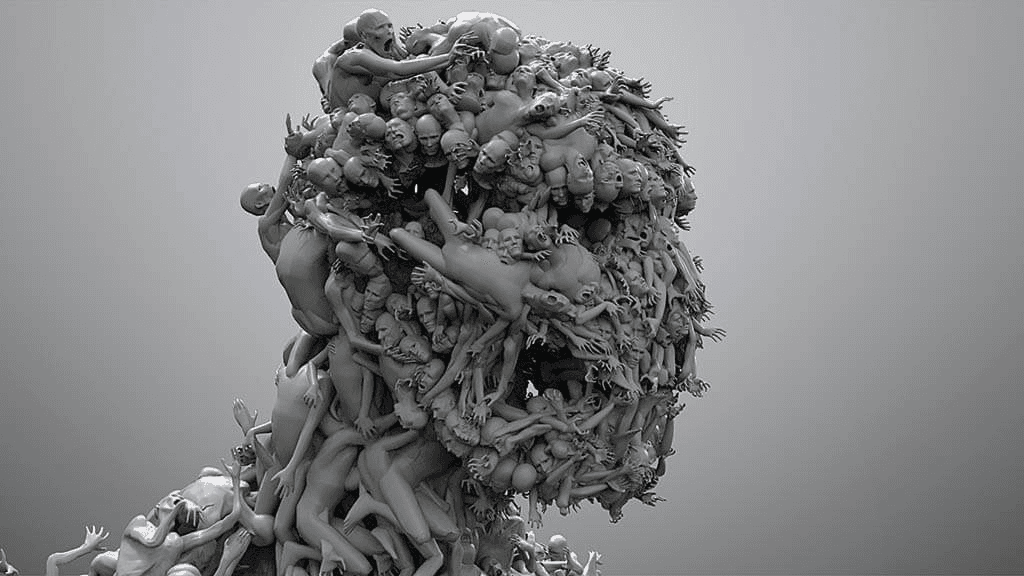

Pixologic introduced ZBrush at SIGGRAPH in 1999. It worked on a different paradigm — not pushing vertices in space, but painting on the surface of a mesh with brushes that displaced geometry as if the model were clay. An artist could add a pore to a skin surface, deepen a wrinkle, build up the ridge of a brow, carve the detail of a knuckle, all with the same intuitive gesture that a sculptor uses in physical clay. The mesh density ZBrush could handle was orders of magnitude higher than conventional polygon modeling tools — tens of millions of polygons in a single model. That resolution was not for rendering. It was for capturing detail.

The workflow ZBrush made possible became standard across the industry: sculpt the high-resolution detail in ZBrush, retopologize the result to a clean, animation-ready low-resolution mesh, and bake the high-frequency detail from the sculpture back onto the low-res mesh as displacement maps and normal maps. The renderer sees the low-res geometry, displaces it at render time using the baked map, and produces the surface detail of the high-res sculpture without the computational overhead of rendering millions of polygons directly. The films that resulted (the creatures in Avatar, the characters in Lord of the Rings, every piece of digital skin or organic surface in a modern VFX production) are built this way. Pixologic's co-founder Ofer Alon received a Scientific and Engineering Award from the Academy in 2014 for the design and implementation of ZBrush.
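
The render-time displacement step reduces to one operation per point: move it along its surface normal by the height stored in the map. The sketch below assumes a tiny grayscale height map held as a nested list, nearest-texel lookup, and unit-length normals. Real renderers tessellate the surface first and filter the map, so treat `sample_nearest` and `displace` as hypothetical helper names for illustration only.

```python
def sample_nearest(height_map, u, v):
    """Look up the texel nearest to UV coordinates (u, v), both in [0, 1]."""
    rows, cols = len(height_map), len(height_map[0])
    col = min(int(u * cols), cols - 1)
    row = min(int(v * rows), rows - 1)
    return height_map[row][col]

def displace(verts, normals, uvs, height_map, scale=1.0):
    """Push each vertex along its normal by the baked height at its UV."""
    displaced = []
    for p, n, (u, v) in zip(verts, normals, uvs):
        h = scale * sample_nearest(height_map, u, v)
        displaced.append(tuple(p[k] + h * n[k] for k in range(3)))
    return displaced

# A flat low-res quad facing +Z, and a 2x2 "baked" height map.
verts = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (1.0, 1.0, 0.0)]
normals = [(0.0, 0.0, 1.0)] * 4
uvs = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0), (1.0, 1.0)]
height_map = [[0.0, 0.1],
              [0.2, 0.3]]

for point in displace(verts, normals, uvs, height_map, scale=2.0):
    print(point)
```

A normal map stores a perturbed shading direction instead of a height. It changes how light reacts to the surface without moving any geometry, which is why the two maps are usually baked together.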

What a modeler actually builds

A production modeling department builds assets. An asset is any discrete object that appears in the film — a hero character, a vehicle, a creature, a prop, an environment piece. Assets are classified by how close the camera gets to them. A hero asset receives the full treatment: clean topology optimized for deformation, multiple levels of detail, full ZBrush sculpture baked to maps, reviewed by the VFX supervisor against reference photography of the real object or the creature design. A background asset at distance might be a fraction of the polygon count, with no sculpted detail at all — a box that reads correctly at camera distance and wastes no render time.

Topology is not an aesthetic choice. It is an engineering requirement. Topology is the pattern of how polygons are arranged across a surface — which direction the edge loops run, where the poles are, how the mesh flows around joints and expressions. Good topology follows the underlying structure of what it represents: on a face, edge loops follow the orbicularis oculi around the eye and the orbicularis oris around the mouth, because those are the muscles that animate the face. When the rigger adds controls and the animator drives them, the geometry deforms along those loops. If the edge loops run the wrong direction, the skin bunches and tears when it moves. No amount of rigging can fix a model with bad topology. The modeler has to build it correctly the first time.
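
Part of a topology review can be automated. In a quad mesh, a pole is commonly understood as an interior vertex where some number of edges other than four meet, and a modeler wants to know where those sit before the asset goes to rigging. The sketch below counts edges per vertex and lists the poles. `find_poles` is a hypothetical helper, and the choice to skip vertices on an open border is an assumption made to keep the example simple.

```python
from collections import defaultdict

def find_poles(faces):
    """Return {vertex: valence} for interior vertices whose valence is not 4."""
    edge_use = defaultdict(int)        # edge -> number of faces that share it
    for face in faces:
        for k, a in enumerate(face):
            b = face[(k + 1) % len(face)]
            edge_use[(min(a, b), max(a, b))] += 1

    valence = defaultdict(int)         # vertex -> number of incident edges
    on_border = set()                  # vertices touching an open edge
    for (a, b), count in edge_use.items():
        valence[a] += 1
        valence[b] += 1
        if count < 2:
            on_border.update((a, b))

    return {v: n for v, n in valence.items() if v not in on_border and n != 4}

# A closed cube: every corner has three edges, so every corner is a pole.
cube = [(0, 3, 2, 1), (4, 5, 6, 7), (0, 1, 5, 4),
        (1, 2, 6, 5), (2, 3, 7, 6), (3, 0, 4, 7)]

# A flat 2x2 patch of quads: the centre vertex (index 4) has four edges.
patch = [(0, 1, 4, 3), (1, 2, 5, 4), (3, 4, 7, 6), (4, 5, 8, 7)]

print("cube poles:", find_poles(cube))
print("patch poles:", find_poles(patch))
```

A check like this only finds where the poles are. Deciding whether a pole sits somewhere harmless or in the middle of a deforming cheek remains the modeler's judgment.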

The modeler is the first person in the pipeline to make a real creative decision about how something will look. The concept artist draws a design. The modeler turns that drawing into geometry with volume, weight, and specific surface behavior. The distance between a concept drawing and a production model is where many designs succeed or fail — a creature that reads well in two dimensions may not hold in three, and the modeler is the person who finds out first.

Modeling is invisible in the finished film. You do not see polygon meshes on screen. What you see is what every other department did with the mesh — how it was rigged, how it moved, how it was lit, how it was composited into the plate. But none of those things exist without the model. It is the infrastructure that every subsequent creative decision depends on, and it takes longer, costs more, and requires more skill than most audiences ever think to ask about.

🫶🏼