Salt, Singapore, and Silicon: The Bohemian Roots of DeepMind

Why a childhood of "futuristic" gadgets and elite chess led Demis Hassabis to abandon the board and solve the mystery of thinking itself.

Preface: Co-written with Gemini.

Demis Hassabis was born in North London to a Greek Cypriot father and a Chinese Singaporean mother. He has described his family as “quite bohemian,” which I’m guessing is the British equivalent of “hipster.” According to Demis, his dad did a lot of different things: he worked as a singer-songwriter with aspirations of being like Bob Dylan, worked as a teacher, and ran a toy shop with Demis’s mom. His mom moved from Singapore to Britain in the early 1970s. She originally trained as a nurse for children with special needs, later worked as a manager at the department store chain John Lewis, helped run the family toy shop, and also worked as a teacher. Hassabis has noted that his parents were not interested in computers and did not particularly like them, which he believes forced him to be self-reliant in his technical pursuits. He credits his mother’s roots for his early exposure to technology: the family spent summers in Singapore, where he encountered "futuristic" gadgets and Nintendo games that were not yet available in the UK. While Demis became a scientist, his siblings followed their parents' more artistic path. His sister is a composer and pianist, and his brother is a professional poker player who studied creative writing.

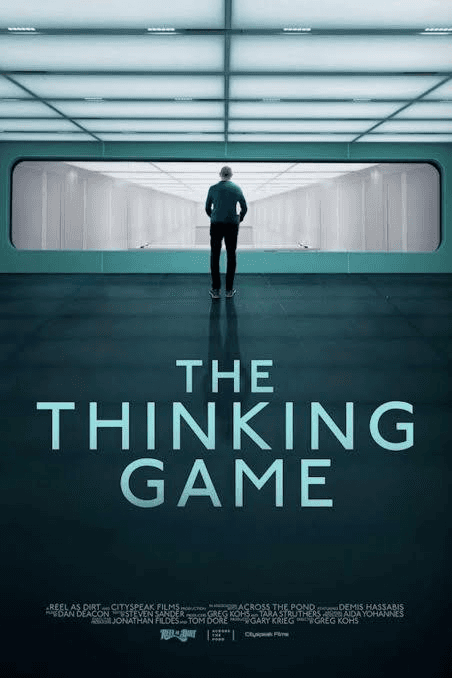

At age 13, Hassabis reached an Elo rating of 2300, which is the "Master" standard. That made him the second-highest-rated player in the world in the Under-14 category, only 35 Elo points behind the top-ranked player, Judit Polgár, who is widely considered the greatest female chess player of all time. For perspective, a casual chess player typically has a rating somewhere between 400 and 800.

Hassabis often credits chess as the reason he became obsessed with Artificial Intelligence. He has said that playing at such a high level forced him to look "under the hood" of his own mind. He would ask himself questions like: "Why did I make that mistake?" or "How am I visualizing these moves five steps ahead?" He was so good at thinking that he became curious about why he was so good at it: what is it that I’m doing when I think? How does that make me better? Why am I different? I suspect most geniuses notice their difference at a young age. Contrary to popular belief, though, a genius is as confusing from the inside as from the outside: it is baffling to watch one work, and equally baffling to be one and not know where the ability comes from. He knew he was exceptionally good at thinking, yet he didn’t know why he had this gift or what exactly it was.

In The Thinking Game, a documentary that premiered at the 2024 Tribeca Film Festival, Hassabis describes the moment he decided to give up on chess. During one of his final competitions, surrounded by some of the best players in the world, he started to wonder what all the brain power in that room could accomplish if it were pointed at something else. Could these people have cured cancer instead of spending their minds on a board game? A deep sense of meaninglessness swallowed him, and he started to feel sick. Right there, on the spot, he decided to give up on chess.

Most eight-year-olds have to beg their parents for a computer. Hassabis didn't have to. In 1984, when he was just eight years old, he used the prize money he had won at a major chess tournament to buy his first machine, a ZX Spectrum 48K. That year was the height of the home computing revolution in the UK, and the ZX Spectrum (developed by Clive Sinclair's company) was the affordable, rubber-keyed machine that essentially taught an entire generation of British engineers how to code. Because he bought it himself, he didn't have to follow anyone else's rules. He spent his time typing in code from magazines and books, which is how he moved from playing chess to thinking about how a machine could be programmed to "think" like him. A few years later, he upgraded to a Commodore Amiga, which was significantly more powerful. It was on the Amiga that he wrote his first "real" AI program: a version of the board game Othello (Reversi) that could beat his younger brother. It's fascinating to think that the same brain that was winning chess prizes in 1984 to buy a 48KB computer would, 40 years later in 2024, share the Nobel Prize in Chemistry for using AI to predict the structures of proteins, the "building blocks of life."

When Demis Hassabis stepped back from competitive chess at 13, he didn't stop being competitive; he simply shifted his focus to programming, simulation games, and early AI experiments. He taught himself assembly language, which let him write instructions for the computer's processor directly and made his programs much faster and more efficient. He was part of a small group of teenagers in the late 1980s who "hacked around," making their own games and software tools. He bought the Computer Chess Handbook by David Levy to learn about search algorithms and evaluation functions, and he used these concepts to write an AI program to play Othello (also known as Reversi). He famously tested the program on his younger brother, George. The program was good enough to beat him, which Hassabis cites as a major "aha!" moment regarding the potential of AI.

Writing that Othello program on a Commodore Amiga was Demis’s first real "Frankenstein moment": the first time he built something that could outthink a human. Since he couldn't just download an AI library like developers do today, he had to build the "brain" from scratch using the fundamental logic he had learned from chess. To make the computer "think," Demis used a classic AI concept called Minimax.

In simple terms, Minimax is a decision-making algorithm used in two-player games (like chess, Tic-Tac-Toe, or Othello) to find the optimal move. The name comes from its core goal: minimizing the maximum possible loss. It assumes your opponent is just as smart as you and will always make the best move for themselves. Imagine a tree where the "trunk" is the current board position and every "branch" is a possible move. On your turns, you choose the move that leads to the highest score (the "Max"). On your opponent's turns, they choose the move that leads to the lowest score for you (the "Min").

The algorithm works backwards from the future. It looks ahead to the end of the game (or to a fixed depth), assigns a score to each of those future boards (e.g., +10 for a win, -10 for a loss), and then "bubbles" those scores back up to the present. If a branch leads to a guaranteed loss because your opponent is smart enough to force it, the algorithm "discards" that path and picks a safer one. If you can win on the next turn, Minimax sees +10 on that branch. However, if moving there leaves an opening for your opponent to win first, it sees -10 on that branch instead, and it will pick another move that avoids the -10 whenever one exists.
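To make the "bubbling up" concrete, here is a minimal sketch of plain Minimax in Python. It is not Hassabis's code, which is not public as far as I know. The game tree is written out by hand as nested lists, and the names `Tree`, `minimax`, and `best_move`, as well as the leaf scores, are my own choices for illustration.

```python
from typing import List, Union

# A tree is either a leaf score (int) or a list of child trees.
Tree = Union[int, List["Tree"]]


def minimax(node: Tree, maximizing: bool) -> int:
    """Return the score of `node` assuming both sides play perfectly."""
    if isinstance(node, int):
        return node  # leaf: the game is over (or we stopped looking)
    child_values = [minimax(child, not maximizing) for child in node]
    # On our turn we take the best child, on the opponent's turn the worst.
    return max(child_values) if maximizing else min(child_values)


def best_move(root: List[Tree]) -> int:
    """Index of the move with the highest guaranteed score for us."""
    # After we move, it is the opponent's turn, so each child is a Min node.
    values = [minimax(child, maximizing=False) for child in root]
    return values.index(max(values))


# Three possible moves for us, each followed by two possible replies.
tree = [
    [10, -10],  # move 0: a win is possible, but the opponent can force -10
    [3, 5],     # move 1: the opponent's best reply still leaves us +3
    [-10, 0],   # move 2: the opponent can force -10
]

print(minimax(tree, maximizing=True))  # 3
print(best_move(tree))                 # 1
```

Move 0 contains the only +10 in the tree, yet it is rejected because the opponent gets to pick the reply. The guaranteed +3 of move 1 wins out.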

Think of the Minimax algorithm as a hyper-cautious chess player who assumes their opponent is a literal genius. It doesn't just look for a way to win; it obsessively looks for ways it might lose. The core of Minimax is the belief that your opponent will always make the best possible move for themselves. If a path has a potential +10 (you win) but also a branch where the opponent can force a -10 (you lose), Minimax assumes the opponent will find that -10. It treats that entire path as a "losing path," even if there’s a trophy at the end of one version of it.

Imagine you are playing a game where you have two choices, Move A and Move B. After Move A, the opponent can either let you win (+10) or trap you (-10). Assuming the opponent isn't a fool, Move A is worth -10. After Move B, the game stays tied (0) no matter what the opponent does. Minimax will choose Move B. Even though Move A could lead to a win, the algorithm "discards" it because the opponent has a guaranteed way to make you lose. It prefers a boring tie over a risky gamble.

This is a very interesting strategy, and one I always use. I always assume my opponent is just as smart as I am, or even smarter. In reality that's rarely true, but thinking this way gives me the best move, even though it takes more work and brain power. On top of that, I'm self-aware enough to know I'm quite a lazy person. I always try to do the least amount of work that yields the maximum result. I'm not greedy. Contrary to popular belief, I'm a very cautious and safe strategist, and I try to avoid too many rounds of back-and-forth. In real life, when I strategize, I usually go for the move that leaves my opponent with no comeback. The Minimax strategy makes a lot of sense to me; I had just never summarized the way I strategize in these terms.
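Written out as arithmetic, the Move A / Move B example looks like this. The notation $v(\cdot)$ for "the score Minimax assigns to a move" is my own shorthand, not something from the original program.

$$
\begin{aligned}
v(A) &= \min(+10,\ -10) = -10 \\
v(B) &= \min(0,\ 0) = 0 \\
\text{choice} &= \arg\max_{m \in \{A,\, B\}} v(m) = B
\end{aligned}
$$

The inner $\min$ is the opponent picking the reply that hurts you most, and the outer $\max$ is you picking the move whose worst case is least bad.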

The problem with Minimax is the "State Space Explosion." Think of it as the "curse of possibilities." It is the primary reason why computers can play Tic-Tac-Toe perfectly but cannot fully "solve" a game like Go or exhaustively simulate complex chemical reactions. It’s what happens when the number of possible configurations (states) of a system grows so rapidly that even the world’s fastest supercomputer runs out of memory and time before it can look at them all.

It reminds me of the chaotic systems described by chaos theory. The two ideas are not the same thing (one is about counting possibilities, the other about sensitivity to initial conditions), but they run into the same wall: brute-force prediction becomes impractical. Chaotic systems are deterministic, which means that given the same initial conditions, the output is always the same. However, when the initial conditions change only a little, the final result can be drastically different. Weather is the classic example: to predict it far ahead, the initial conditions would have to be measured exactly, with no error. Such systems have many factors at play at any given time, each evolving in its own complicated way while also interacting with the others, so the range of possible scenarios becomes astronomically large and long-range prediction becomes almost impossible.

In this sense, the Earth itself could be seen as a computer, and weather as a deterministic chaotic system that needs something the scale of the Earth to lay out all its variables. The Sun, the Moon, the other planets in the solar system, comets, black holes, and whatever else is out there that we do not know about could all play some part in the weather and tides on Earth. Since we do not have all the information, and small changes in the initial conditions change the outcome by a lot, predicting the result far into the future is essentially impossible.
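To get a feel for the "curse of possibilities," here is a small Python sketch. It counts every possible Tic-Tac-Toe game by brute force, then uses a rough rule of thumb (branching factor raised to the depth) to show how quickly larger games outgrow that approach. The function names are mine, and the branching factors of about 35 for chess and about 250 for Go are commonly cited approximations, not exact figures.

```python
from typing import List, Optional

WIN_LINES = [(0, 1, 2), (3, 4, 5), (6, 7, 8),   # rows
             (0, 3, 6), (1, 4, 7), (2, 5, 8),   # columns
             (0, 4, 8), (2, 4, 6)]              # diagonals


def winner(board: List[str]) -> Optional[str]:
    """Return "X" or "O" if someone has three in a row, else None."""
    for a, b, c in WIN_LINES:
        if board[a] != " " and board[a] == board[b] == board[c]:
            return board[a]
    return None


def count_games(board: Optional[List[str]] = None, player: str = "X") -> int:
    """Count every distinct move sequence that plays a game to its end."""
    if board is None:
        board = [" "] * 9
    if winner(board) is not None or " " not in board:
        return 1  # game over: this sequence of moves is one complete game
    total = 0
    for square in range(9):
        if board[square] == " ":
            board[square] = player
            total += count_games(board, "O" if player == "X" else "X")
            board[square] = " "  # undo the move before trying the next one
    return total


def tree_leaves(branching_factor: int, depth: int) -> int:
    """Rough size of a game tree: choices per turn, raised to the turns."""
    return branching_factor ** depth


print(count_games())  # 255168

# Assumed, commonly cited average branching factors (approximate).
for name, b in [("chess", 35), ("Go", 250)]:
    print(f"{name}: about {tree_leaves(b, 10):.1e} positions 10 moves ahead")
```

The exhaustive count finishes almost instantly for Tic-Tac-Toe, while the estimates for just ten moves of chess or Go are already far beyond what the same brute-force loop could visit.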

In Tic-Tac-Toe, there are only 255,168 possible games. A computer can calculate every single one in a fraction of a second. In chess, there are more possible games than there are atoms in the observable universe. A full Othello game tree is far smaller than that of chess, but still much too large to search exhaustively on a home computer. Because of this, young Demis couldn't just use "pure" Minimax. He had to use heuristics (shortcuts). Since the computer couldn't see to the end of the game, he wrote a "scoring function" to tell the computer something like: "Even if the game isn't over, having control of the center of the board is worth +5 points." Basically, even though he couldn't train the computer to learn the best strategies on its own, Demis could hand the computer the strategies he used himself when playing chess, such as taking over the center of the board as soon as possible.

Demis had many strategies like this. While controlling the center is the "Golden Rule" of chess, it’s really just the foundation. Once you have a foothold in the center, you use that leverage to execute more specific strategies:

King safety. The most common way beginners lose is by leaving their King in the middle of the board, which is why castling is important. It moves the King toward a corner, behind a "wall" of three pawns, and it also brings your Rook toward the center.

Knight outposts. A piece is only as good as the squares it controls. Knights are short-range, so to be effective they need to be deep in enemy territory. An "outpost" is a square (usually on the 5th or 6th rank) that is protected by one of your pawns and cannot be attacked by an enemy pawn. A Knight sitting there is a nightmare for your opponent.

Rooks on open files. Rooks are like snipers; they hate being stuck behind their own pawns. You want to move your Rooks onto "open files" (columns with no pawns) so they can slide all the way into the enemy's back row.

Pawn structure. The 18th-century master Philidor famously said, "Pawns are the soul of chess." They aren't just fodder; they are the terrain of the battlefield. Pawn chains are diagonal lines of pawns that protect each other, and they create "walls" that block enemy Bishops. An isolated pawn is a pawn with no "friends" on the columns next to it; it’s hard to defend because no other pawn can help it. Doubled pawns are two pawns of the same color on the same column; they block each other and are usually a major liability.

A scoring function combines rules like these, each with its own weight and priority. Hassabis applied the same approach, with rules specific to Othello instead of chess, to build the program that beat his brother. This is exactly what DeepMind eventually disrupted. In the old days, Demis had to hard-code those "points" himself. With AlphaGo, the AI learned what a good position looked like through deep learning, from example games and self-play, rather than from hand-written rules.
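A hand-written scoring function of the kind described above can be sketched as a weighted sum of features. Everything in this example is hypothetical: the feature names, the weights other than the +5 for the center mentioned earlier, and the idea that a position arrives as a ready-made dictionary of feature counts. A real chess program would have to compute those counts from the board.

```python
from typing import Dict

# Hypothetical weights: positive for things we want, negative for weaknesses.
WEIGHTS: Dict[str, float] = {
    "center_control": 5.0,       # the "+5 points" rule from the text
    "king_castled": 3.0,         # assumed value, for illustration only
    "knight_outposts": 2.0,      # assumed
    "rooks_on_open_files": 2.0,  # assumed
    "isolated_pawns": -1.0,      # assumed penalty per pawn
    "doubled_pawns": -1.0,       # assumed penalty per pawn
}


def evaluate(features: Dict[str, float]) -> float:
    """Score a position as the weighted sum of its feature counts."""
    return sum(WEIGHTS[name] * count for name, count in features.items())


# A made-up position: we hold the center and have castled,
# but we are stuck with two isolated pawns.
position = {"center_control": 1, "king_castled": 1, "isolated_pawns": 2}
print(evaluate(position))  # 6.0
```

The design choice worth noticing is that all of the chess knowledge lives in the `WEIGHTS` table. That table is exactly the part a human had to tune by hand, and the part that later systems learned from data instead.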

He programmed the computer to look at the current board and "branch out" every possible move it could make, then every possible move the opponent could make in response. Even on that limited hardware, he tried to make the computer look 4 or 5 moves ahead. In a game like Othello, where a single move can flip large parts of the board, this required very efficient code. A computer can see the moves, but it doesn't know which board position is "good" or "bad" unless you tell it. So Demis wrote a mathematical evaluation function: a set of rules that awarded points for certain features of a position, such as owning the corners of the board, which is vital in Othello. He essentially translated his own strategic intuition into a scoring system. Demis sat his brother George down in front of the Amiga and had him play against the code. When the program started beating George, Demis realized he had created a "digital version" of his own strategic mind. It was a profound moment of realizing that intelligence wasn't just a "human" thing; it was a process that could be coded. ☀️
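Putting the two pieces together, the look-ahead and the scoring rules, gives something like the following Python sketch of a depth-limited Othello player. This is my own reconstruction of the general technique, not the original Amiga program: the corner bonus of 25, the default depth of 4, and all function names are assumptions made for illustration.

```python
from typing import List, Optional, Tuple

EMPTY, BLACK, WHITE = 0, 1, -1
SIZE = 8
DIRECTIONS = [(-1, -1), (-1, 0), (-1, 1), (0, -1),
              (0, 1), (1, -1), (1, 0), (1, 1)]
CORNERS = [(0, 0), (0, 7), (7, 0), (7, 7)]
Board = List[List[int]]
Move = Tuple[int, int]


def initial_board() -> Board:
    board = [[EMPTY] * SIZE for _ in range(SIZE)]
    board[3][3] = board[4][4] = WHITE
    board[3][4] = board[4][3] = BLACK
    return board


def flips(board: Board, player: int, row: int, col: int) -> List[Move]:
    """Discs captured by playing (row, col); an empty list means illegal."""
    if board[row][col] != EMPTY:
        return []
    captured: List[Move] = []
    for dr, dc in DIRECTIONS:
        line: List[Move] = []
        r, c = row + dr, col + dc
        while 0 <= r < SIZE and 0 <= c < SIZE and board[r][c] == -player:
            line.append((r, c))
            r, c = r + dr, c + dc
        # The run of enemy discs only counts if one of ours closes it off.
        if line and 0 <= r < SIZE and 0 <= c < SIZE and board[r][c] == player:
            captured.extend(line)
    return captured


def legal_moves(board: Board, player: int) -> List[Move]:
    return [(r, c) for r in range(SIZE) for c in range(SIZE)
            if flips(board, player, r, c)]


def play(board: Board, player: int, move: Move) -> Board:
    """Return a new board with the move made and captured discs flipped."""
    new_board = [row[:] for row in board]
    for r, c in flips(board, player, *move) + [move]:
        new_board[r][c] = player
    return new_board


def evaluate(board: Board, me: int) -> int:
    """Hand-tuned score: disc difference plus an assumed bonus per corner."""
    discs = sum(cell for row in board for cell in row) * me
    corners = sum(board[r][c] for r, c in CORNERS) * me
    return discs + 25 * corners


def minimax(board: Board, player: int, depth: int, me: int) -> int:
    moves = legal_moves(board, player)
    if depth == 0 or (not moves and not legal_moves(board, -player)):
        return evaluate(board, me)  # out of depth, or nobody can move
    if not moves:  # the player must pass
        return minimax(board, -player, depth - 1, me)
    scores = [minimax(play(board, player, m), -player, depth - 1, me)
              for m in moves]
    return max(scores) if player == me else min(scores)


def best_move(board: Board, player: int, depth: int = 4) -> Optional[Move]:
    moves = legal_moves(board, player)
    if not moves:
        return None
    return max(moves, key=lambda m: minimax(play(board, player, m),
                                            -player, depth - 1, player))


print(legal_moves(initial_board(), BLACK))  # [(2, 3), (3, 2), (4, 5), (5, 4)]
print(best_move(initial_board(), BLACK, depth=4))
```

This version searches every branch to the chosen depth with no pruning, so each extra level of look-ahead costs noticeably more time. That growth is the reason efficiency mattered so much on a machine of that era.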
