Marvin Minsky (III): The Unsolvable XOR Gate

马文·明斯基 (III):不可解的 XOR 异或门

Preface: A little bit of math in this. Written in collaboration with Gemini.

I’m getting pretty confused as I dive deeper into this topic. I just wanted to know about Epstein, but Minsky is too interesting a character to pass up. Looking at his publications and books, he almost seems to have been ahead of his time. I agree with most of what he proposes, but I am not enough of a mathematician to fully understand his proofs. If this was the guy who was serious about cryonics, and this is who Epstein proposed his baby ranch to, we can be fairly sure they were both onto something substantial, and that they both put their words into action with their brilliant and, in Epstein’s case, potentially morbid minds. While Minsky used his mind to explore the future of human consciousness and the nature of our intelligence and agency, Epstein used his to play at crossbreeding with girls as if they were plants and roses in Animal Crossing. Minsky died and was preserved, hoping to be woken up later; Epstein perhaps isn’t dead and is hoping to wake up later as well. Same wish, yet the ways the two went about it were so different. It tells me once again that there is nothing wrong with wanting to live forever, but it matters how we pursue that goal. The same goes for any biological or technical advancement. The end does not justify the means, at least that’s what I think. If the process must inflict pain on the weaker, then it’s not about “transforming” human lives, it’s about abusing the less powerful with new technology. If the process is unjust, no result we get can make it okay.

I’ve jumped around a lot while researching Minsky. I first wanted to find out what the infamous study of cryonics was about, only to find a well-researched, up-and-coming industry that some people are going all in on. They are actively working on every problem they can foresee: payment plans, putting your money in a trust so that it keeps paying your frozen body’s “rent” in a storage cell after you die, storage units being built… there’s a whole industry ecosystem being put together. Yet that was hardly Minsky’s main focus or most famous work. He is most famous for his contributions to how we understand the human brain and artificial intelligence.

To explain his importance in the history of AI, let me first give you a rundown of that history. The formal concept of AI emerged in the mid-20th century, focused on symbolic logic and the belief that machines could simulate human reasoning. In 1950, Alan Turing published "Computing Machinery and Intelligence," proposing what became known as the Turing Test to determine whether a machine could convincingly imitate human conversation. There’s a movie on this I’d recommend, called The Imitation Game, starring Benedict Cumberbatch. It’s a 2014 American biographical thriller directed by Morten Tyldum and written by Graham Moore, based on the 1983 biography Alan Turing: The Enigma by Andrew Hodges. The film's title quotes the name of the game Turing proposed for answering the question "Can machines think?" in that 1950 paper. It’s an easy-to-digest, dramatized intro to how Turing and his colleagues at Bletchley Park broke Enigma, a cipher device developed and used in the early to mid-20th century to protect commercial, diplomatic, and military communication. Enigma was employed extensively by Nazi Germany during World War II, in all branches of the German military. (The Automatic Computing Engine, or ACE, was a separate, later project: an early stored-program computer that Turing designed after the war.)

In Alan Turing’s 1950 paper, "Computing Machinery and Intelligence", he proposed the famous Turing Test concept. It was designed to replace the abstract question "Can machines think?" with a more practical behavioral experiment. The test evolved from a parlor game called the "Imitation Game". In Turing's version, a human judge holds a text-based conversation with two unseen participants: one human and one machine. The judge questions both participants through typed messages only, to avoid physical cues. If the judge cannot consistently tell the machine from the human, the machine is said to have "passed" the test and is considered to possess intelligence. There’s another great movie on what it looks like when a machine does pass the Turing Test, the famous Ex Machina, directed by Alex Garland. I consider this his greatest work so far, even though I pretty much loved all of his work.

Turing argued that "thinking" was too poorly defined to serve as a scientific goal. He shifted the focus from the machine’s internal "conscious" state to its external behavior, believing that if a machine could perfectly simulate human intelligence, it was effectively intelligent. The Turing Test profoundly changed how scientists viewed and developed artificial intelligence. It placed "natural language" at the center of AI research, leading scientists to focus on how machines could process and generate human language. For decades, it encouraged the belief that intelligence was a matter of logic and symbol manipulation: if you could code enough rules to fool a person, you had built a mind. Early programs like ELIZA (1966) leaned on human psychology to seem convincing in conversation, showing scientists that "intelligence" could sometimes be faked through clever pattern matching rather than true understanding. However, later critics, such as Minsky in his work on Perceptrons, argued that "passing" a conversation test didn't mean a machine could actually solve complex problems or understand the world geometrically.

In 1956, six years after Turing proposed the Turing Test, John McCarthy, Marvin Minsky, Nathaniel Rochester, and Claude Shannon organized a conference known as the Dartmouth Workshop. Their 1955 proposal for it coined the term "Artificial Intelligence," and the workshop established AI as a formal academic discipline. In the years that followed, researchers created programs that could solve algebra problems, prove logical theorems, and play games like checkers. In 1966, Joseph Weizenbaum created ELIZA, the first chatbot, which simulated a psychotherapist. In 1969, Marvin Minsky and Seymour Papert published Perceptrons, which mathematically demonstrated the limitations of simple, single-layer neural networks (specifically their inability to solve problems like XOR). This work shifted research focus toward Symbolic AI (rule-based logic) for over a decade. By the early 1970s, the field faced a slowdown as computing power proved insufficient for the ambitious goals set by early pioneers.

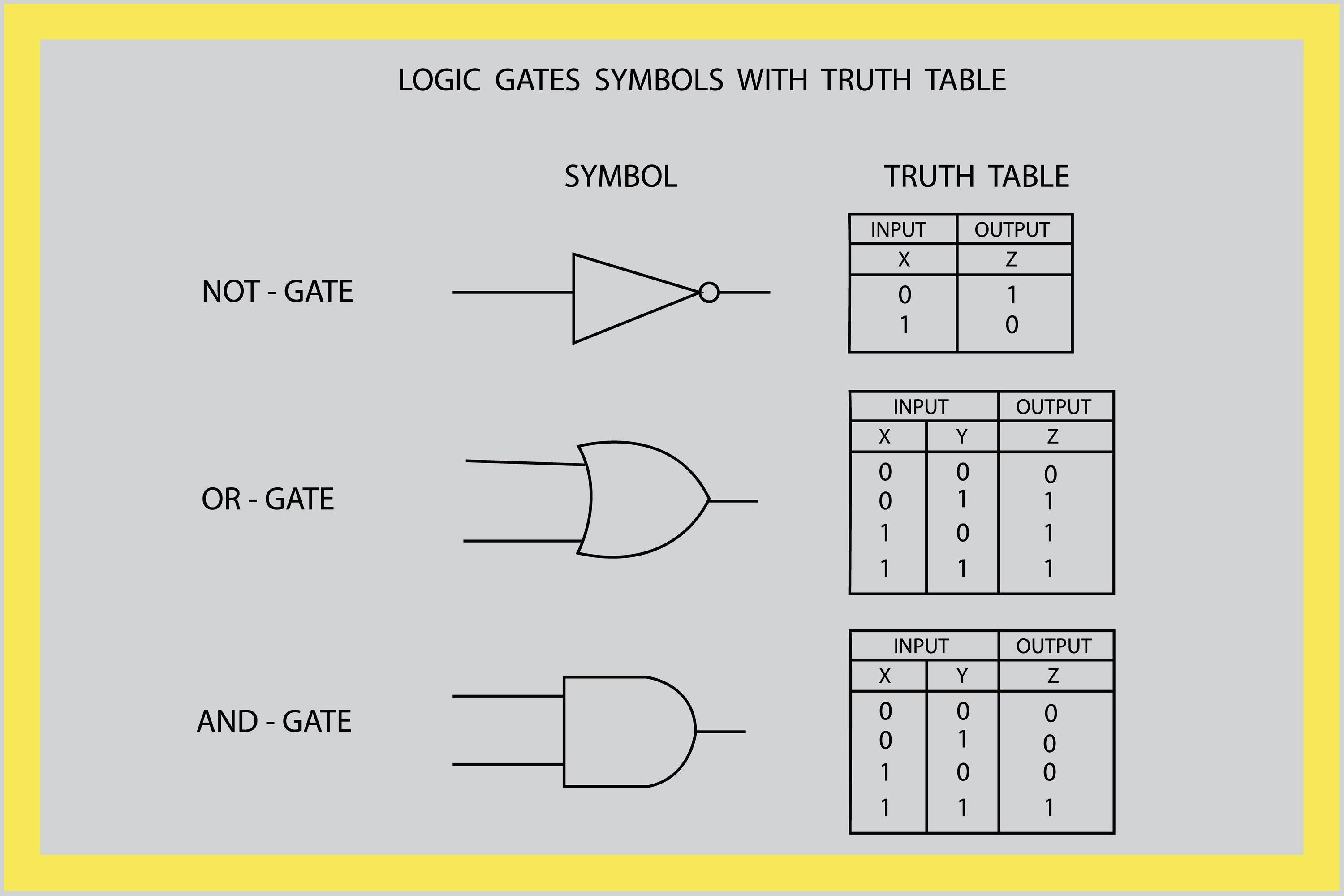

So the XOR problem proposed in Perceptrons goes something like this. To understand the problem, you first have to look at how simple logic gates work.

AND Gate: Outputs "1" only if both inputs are "1."

OR Gate: Outputs "1" if either or both inputs are "1."

XOR Gate: Outputs "1" if exactly one input is "1," but outputs "0" if both are "1" or both are "0."
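The three definitions above can be written out as a tiny truth-table sketch. The function names (`and_gate`, `or_gate`, `xor_gate`, `truth_table`) are my own illustrative choices, not anything from Perceptrons.

```python
# The four possible input pairs for a two-input gate.
INPUTS = [(0, 0), (0, 1), (1, 0), (1, 1)]


def and_gate(a: int, b: int) -> int:
    """Outputs 1 only if both inputs are 1."""
    return int(a == 1 and b == 1)


def or_gate(a: int, b: int) -> int:
    """Outputs 1 if either or both inputs are 1."""
    return int(a == 1 or b == 1)


def xor_gate(a: int, b: int) -> int:
    """Outputs 1 if exactly one input is 1, i.e. the inputs differ."""
    return int(a != b)


def truth_table(gate) -> list:
    """Return the gate's output for each pair in INPUTS, in order."""
    return [gate(a, b) for a, b in INPUTS]


if __name__ == "__main__":
    for name, gate in [("AND", and_gate), ("OR", or_gate), ("XOR", xor_gate)]:
        print(name, truth_table(gate))
    # AND [0, 0, 0, 1]
    # OR [0, 1, 1, 1]
    # XOR [0, 1, 1, 0]
```

Notice that XOR is the only one of the three whose "1" outputs are not grouped together at one end of the table.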

In order to build artificial intelligence that can function like human brains, we have to start with the simplest logic, and we have to be able to translate that logic into circuits of simple units that read in 1s and 0s and give the same output for the same input as our brain’s logic would. In the graph above, we can see that for NOT, the output should be the opposite of the input; for OR, as long as one of the inputs is not 0, the output is 1; and for AND, both inputs have to be 1 to yield 1, otherwise it’s 0. This is consistent with our logic so far. However, the “XOR” gate is not shown in the graph because it cannot be represented by a single such unit. The “XOR” gate checks whether the two inputs differ: if they are the same, say “0 and 0” or “1 and 1”, the output is 0; but if they are different, “1” and “0” in either order, the output is 1. This task of telling whether two inputs differ is impossible for a single unit (a simple perceptron), as proven mathematically, in ways I don’t fully understand, in Minsky’s first book Perceptrons.

According to this book, XOR is not linearly separable. If you plot the four possible input points on a graph, the "True" results sit at opposite corners: (0,1) and (1,0). The "False" results sit at the other corners: (0,0) and (1,1). You cannot draw one single straight line that keeps the "True" points on one side and the "False" points on the other. Minsky and Papert proved that because of this, a simple perceptron is mathematically incapable of "learning" XOR: no choice of weights lets its architecture represent that logic.
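For readers who want the short algebra behind "no single line works", here is a standard textbook argument. It is not the proof as written in Perceptrons, just a sketch under one assumption: the unit outputs 1 exactly when $w_1 x_1 + w_2 x_2 + b > 0$, where the weights $w_1, w_2$ and the bias $b$ are names I am introducing for illustration. The four XOR cases would then require:

$$
\begin{aligned}
(0,0) \mapsto 0 &: \quad b \le 0 \\
(0,1) \mapsto 1 &: \quad w_2 + b > 0 \\
(1,0) \mapsto 1 &: \quad w_1 + b > 0 \\
(1,1) \mapsto 0 &: \quad w_1 + w_2 + b \le 0
\end{aligned}
$$

Adding the second and third lines gives $w_1 + w_2 + 2b > 0$, so $w_1 + w_2 + b > -b \ge 0$ by the first line. That contradicts the fourth line, so no such $w_1, w_2, b$ exist.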

| Gate | Condition for Output "1" | Perceptron Order | Can a single line separate it? |
| --- | --- | --- | --- |
| AND | Both inputs are 1 | 1 | Yes (linearly separable) |
| OR | At least one input is 1 | 1 | Yes (linearly separable) |
| XOR | Exactly one input is 1 | 2 | No (not linearly separable) |

The graph at the beginning of this article visualizes the fundamental mathematical hurdle that Minsky and Papert identified in early AI. A simple perceptron is a linear classifier. This means its decision-making logic is restricted to drawing a single straight line across this graph. As you can see visually, the "True" points sit at opposite corners from each other. No matter where you try to draw a single straight line, you will always end up with at least one "True" point on the same side as a "False" point. This doesn’t mean any point is both true and false at the same time; it means every possible line misclassifies at least one of the four points, which is what makes XOR unsolvable for a single line on a 2D plane. However, it becomes solvable once the points are mapped into a different or higher-dimensional space. To solve the XOR problem, you have to move beyond a single line. The "solution" that eventually helped end the AI Winter was the Multi-Layer Perceptron (MLP). Instead of one neuron trying to do everything, you add a hidden layer between the inputs and the output.
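To see the single-line limitation in action, here is a minimal sketch that trains one unit with the classic perceptron learning rule. The function names, the learning rate of 1, and the cap of 100 passes are my own assumptions for illustration. The rule finds working weights for AND and OR, but never for XOR, because no such weights exist.

```python
# Inputs and target outputs for each gate, in the order (0,0), (0,1), (1,0), (1,1).
INPUTS = [(0, 0), (0, 1), (1, 0), (1, 1)]
TARGETS = {
    "AND": [0, 0, 0, 1],
    "OR": [0, 1, 1, 1],
    "XOR": [0, 1, 1, 0],
}


def predict(w1, w2, b, x1, x2):
    """A single linear unit: fire (1) only if the weighted sum is above zero."""
    return 1 if w1 * x1 + w2 * x2 + b > 0 else 0


def train_perceptron(targets, max_epochs=100):
    """Perceptron learning rule with learning rate 1 (an illustrative choice).

    Returns (converged, (w1, w2, b)). `converged` is True if some full pass
    over the four points produced no mistakes.
    """
    w1 = w2 = b = 0
    for _ in range(max_epochs):
        mistakes = 0
        for (x1, x2), target in zip(INPUTS, targets):
            error = target - predict(w1, w2, b, x1, x2)
            if error != 0:
                # Nudge the line toward classifying this point correctly.
                w1 += error * x1
                w2 += error * x2
                b += error
                mistakes += 1
        if mistakes == 0:
            return True, (w1, w2, b)
    return False, (w1, w2, b)


if __name__ == "__main__":
    for name, targets in TARGETS.items():
        converged, weights = train_perceptron(targets)
        print(name, "converged:", converged, "weights:", weights)
```

Raising `max_epochs` does not help with XOR; the weights just keep cycling, which is the practical face of "not linearly separable".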

Think of the hidden layer as a way to create two different "lines" or "filters" that look at the data simultaneously. I don’t fully understand the layers, but I’m putting it here for context. According to this theory, we can have one hidden neuron acting like an OR gate, separating (0,0) from the other three points. Another hidden neuron acts like a NAND gate, separating (1,1) from the rest. A final neuron combines these two results. It essentially says: "If the first line is TRUE AND the second line is TRUE, then it's an XOR match". By using multiple layers, the machine is no longer restricted to a single straight line. It can effectively fold or warp the mathematical space so that the "True" points end up on one side and the "False" points on the other. In the book, the authors actually acknowledged that multi-layer machines could solve these problems. However, they raised two major objections that held up for nearly 20 years. First, while they knew the architecture worked, there was no known mathematical way to "teach" the hidden layers. Second, they showed that as problems become more complex, the number of neurons and the size of the "weights" (coefficients) needed can grow exponentially, beyond what computers of that era could physically handle. These problems were later addressed by the backpropagation algorithm and the rise of Graphics Processing Units (GPUs). More on this in the next post. ☀️
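The OR / NAND / AND wiring described above can be sketched as a tiny two-layer network with hand-picked weights. The specific numbers (1, -1, -0.5, 1.5, -1.5) and the function names are my own assumptions chosen to make each unit behave like the gate named; nothing is learned here, and many other weight choices would work.

```python
def step(z: float) -> int:
    """Threshold activation: fire (1) only if the input is above zero."""
    return 1 if z > 0 else 0


def neuron(w1: float, w2: float, b: float, x1: int, x2: int) -> int:
    """One linear unit, i.e. one straight line across the plane."""
    return step(w1 * x1 + w2 * x2 + b)


def xor_mlp(x1: int, x2: int) -> int:
    """Two hidden units (OR and NAND) feeding one output unit (AND)."""
    h_or = neuron(1, 1, -0.5, x1, x2)      # 0 only for (0,0)
    h_nand = neuron(-1, -1, 1.5, x1, x2)   # 0 only for (1,1)
    return neuron(1, 1, -1.5, h_or, h_nand)  # 1 only if both hidden units fire


if __name__ == "__main__":
    for x1, x2 in [(0, 0), (0, 1), (1, 0), (1, 1)]:
        print((x1, x2), "->", xor_mlp(x1, x2))
    # (0, 0) -> 0
    # (0, 1) -> 1
    # (1, 0) -> 1
    # (1, 1) -> 0
```

Each hidden unit is still just a single line; the trick is that the output unit draws its own line in the space of the hidden units' answers, where XOR has become separable.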

Reference / Recommended Readings

Historical & Technical Foundations

Minsky, M., & Papert, S. (1969). Perceptrons: An Introduction to Computational Geometry. MIT Press. (The foundational text that proved the XOR limitation and the scaling problems of simple neural networks).

Turing, A. M. (1950). "Computing Machinery and Intelligence." Mind, 59(236), 433–460. (The paper that proposed the "Imitation Game" or Turing Test).

McCarthy, J., Minsky, M. L., Rochester, N., & Shannon, C. E. (1955). A Proposal for the Dartmouth Summer Research Project on Artificial Intelligence. (The document that established AI as a formal discipline).

Weizenbaum, J. (1966). "ELIZA—A computer program for the study of natural language communication between man and machine." Communications of the ACM. (The first chatbot that simulated a psychotherapist).

Rumelhart, D. E., Hinton, G. E., & Williams, R. J. (1986). "Learning representations by back-propagating errors." Nature. (The breakthrough paper that provided the mathematical "Backpropagation" method to train multi-layer networks, solving the XOR problem).

Media & Popular Culture

Hodges, A. (1983). Alan Turing: The Enigma. Burnett Books. (The biography upon which the film The Imitation Game was based).

Tyldum, M. (Director). (2014). The Imitation Game [Film]. Black Bear Pictures.

Garland, A. (Director). (2014). Ex Machina [Film]. DNA Films.

To Understand Marvin Minsky’s Philosophy

"The Society of Mind" (1985) by Marvin Minsky: This is far more accessible than Perceptrons. Instead of math, he uses the metaphor of "agents"—tiny, mindless processes that work together to create an intelligent mind. It explains his view of how consciousness emerges.

"The Emotion Machine" (2006) by Marvin Minsky: His later work where he argues that emotions are not separate from thinking, but are actually just different "ways to think" that our brain uses to solve different types of problems.

To Understand the Ethical & "Morbid" Side of Futurism

"The Singularity is Near" by Ray Kurzweil: If you are interested in cryonics and living forever, Kurzweil (a contemporary of Minsky) explains the "technical advancement" perspective in detail.

"To Be a Machine" by Mark O'Connell: This is an excellent journalistic look at the transhumanist movement, cryonics, and the quest to solve death. It captures the "brilliant but potentially morbid" energy mentioned above.

To Demystify the "Hidden Layers" and Math

"But what is a neural network?" by 3Blue1Brown (YouTube): This is widely considered one of the best visual explanations of how the "hidden layers" and "Backpropagation" actually work without requiring a math degree. It visualizes the "warping of space" described above.

"Architects of Intelligence" by Martin Ford: A collection of interviews with modern AI pioneers (including those who "solved" Minsky's XOR problem) that discusses both the technical history and the ethical "means vs. ends" concerns of AI development.

前言:本文包含一点数学内容。由我与 Gemini 协作完成。

随着对这个话题的研究深入,我变得越来越困惑。我最初只是想了解爱泼斯坦(Epstein),但明斯基(Minsky)这个人物实在太有趣了,不容错过。翻看他的论文和著作,他简直像是一个超越了时代的人。我认同他提出的大部分观点,但我毕竟不是数学家,无法完全理解他的那些证明。如果他就是那个对人体冷冻技术(cryonics)深信不疑的人,而爱泼斯坦恰恰向他提议过那个“婴儿农场”计划,我们可以确定,这两个人都洞察到了一些实质性的东西,并且都用他们卓越的头脑将想法付诸实践——在爱泼斯坦的案例中,这种头脑带有潜在的病态。明斯基用他的智慧探索人类意识的未来,以及我们智慧与能动性的本质;而爱泼斯坦则把智慧用在与女孩进行“杂交”实验上,仿佛她们只是《动物森友会》里的植物和玫瑰。明斯基在死后被冷冻保存,希望能未来复活;爱泼斯坦或许也没死,同样希望能未来醒来。同样的愿望,两个人的实现路径却天差地别。这再次告诉我,渴望永生并没有错,错的是实现目标的方式。任何生物或技术进步也是如此。结果并不能证明手段的合法性,至少我是这么认为的。如果一个过程必须给弱者带来痛苦,那么它就不是在“改造”人类生活,而是在利用新技术虐待弱势群体。如果过程是不正义的,无论产出什么结果,都无法让这个过程变得合理。

在研究明斯基的过程中,我思维跳跃得很厉害。起初我想弄清楚那臭名昭著的人体冷冻研究是怎么回事,结果发现这是一个研究充分、有科学支撑、且正在兴起的行业,甚至有些人正全身心投入其中。他们正在积极解决预见到的所有问题,比如分期付款计划,或者把钱放进信托基金,这样即使你死了,美国经济也会为你保存在低温槽里的“冰冻身体”支付租金。存储库正在建设……一整套行业生态系统正在形成。然而,这几乎不是明斯基的核心关注点,也不是他最著名的成就。他最出名的是对人类大脑和人工智慧理解的贡献。

为了解释他在 AI 史上的重要性,先让我给你们梳理一下人工智慧的历史。正式的 AI 概念出现在 20 世纪中叶,重点在于符号逻辑(symbolic logic)以及相信机器可以模拟人类推理的信念。艾伦·图灵(Alan Turing)发表了《计算機器与智能》,提出了“图灵测试”(Turing Test)来判断机器是否能令人信服地模仿人类对话。我推荐一部关于他的电影,叫《模仿游戏》(The Imitation Game),由本尼迪克特·康伯巴奇主演。这是一部 2014 年的美国传记惊悚片,由莫滕·泰杜姆执导,格雷厄姆·摩尔编剧,改编自安德鲁·霍奇斯 1983 年创作的传记《艾伦·图灵:传》。电影标题引用了密码学家艾伦·图灵在他 1950 年的开创性论文《计算機器与智能》中为回答“机器能思考吗?”这一问题而设计的游戏名称。这是一部很容易消化的戏剧化入门片,介绍了图灵如何发明了第一台自动计算引擎,并将“恩尼格玛”(Enigma)作为 20 世纪早期到中期用于保护商业、外交和军事通信的加密设备。它在二战期间被纳粹德国广泛应用于所有军种。

在艾伦·图灵 1950 年的论文《计算機器与智能》中,他提出了著名的图灵测试概念。它的设计初衷是用一个更实用的行为实验来取代抽象的问题——“机器能思考吗?”。这个测试演变自一种名为“模仿游戏”的维多利亚时代客厅游戏。在图灵的版本中,一名人类裁判通过文本对话与两个看不见的参与者交流:一个是人类,一个是机器。裁判通过电脑终端提问以避免生理线索的干扰。如果裁判无法一致地分辨出机器和人类的区别,机器就被认为“通过”了测试,并被视为拥有智能。关于机器通过图灵测试后会发生什么,还有另一部伟大的电影,即著名的《机械姬》(Ex Machina),由亚历克斯·加兰执导。尽管我喜欢他几乎所有的作品,但我认为这是他迄今为止最伟大的作品。

图灵认为“思考”这个词定义太模糊,无法作为科学目标。他将重点从机器内部的“意识”状态转移到其外部行为上,认为如果机器能完美模拟人类智能,那它在实际上就是智能的。图灵测试深刻改变了科学家看待和开发人工智慧的方式。它将“自然语言”置于 AI 研究的核心,引导科学家关注机器如何处理和生成人类语言。几十年来,这让科学家相信智能就是逻辑和符号的处理——如果你能编写足够的规则来骗过人类,你就造出了一个头脑。早期的程序如 ELIZA(1966 年)专门利用人类心理学来通过测试,向科学家展示了“智能”有时可以通过巧妙的模式匹配而并非真正的理解来伪造。然而,后来的批评者,如明斯基在他的著作《感知器》(Perceptrons)中指出,通过对话测试并不意味着机器真的能解决复杂问题或在几何意义上理解世界。

1956 年,即图灵提出图灵测试六年后,约翰·麦卡锡、马文·明斯基、纳撒尼尔·罗切斯特和克劳德·香农组织了一次会议,名为“达特茅斯会议”,这次会议创造了“人工智慧”一词,并将其确立为一个正式的学术学科。研究人员创造了可以解决代数问题、证明逻辑定理和玩跳棋等游戏的程序。1966 年,约瑟夫·维森鲍姆创造了 ELIZA,这是第一个聊天机器人,它能模拟心理医生。到了 70 年代初,由于计算能力不足以支撑早期先驱们设立的雄心勃勃的目标,该领域面临增长放缓。1969 年,马文·明斯基和西摩·帕佩特出版了《感知器》,从数学上论证了简单的单层神经网络的局限性(特别是它们无法解决 XOR 异或问题)。这项工作使研究重心转向了符号 AI(基于规则的逻辑),并持续了十多年。

《感知器》中提出的 XOR(异或)问题大致是这样的。要理解这个问题,你首先得看看简单的逻辑门是如何工作的。

与门 (AND Gate):只有当两个输入都是“1”时,才输出“1”。

或门 (OR Gate):只要两个输入中有一个(或两个)是“1”,就输出“1”。

异或门 (XOR Gate):当输入中恰好只有一个是“1”时输出“1”,但如果两个都是“1”或两个都是“0”,则输出“0”。

为了构建能像人脑一样运作的人工智慧,我们必须从最简单的逻辑开始,并能将逻辑转化为由二极管组成的电路板,使其读入 1 和 0,产生与人脑逻辑相同的输入输出结果。在逻辑图中,我们可以看到,对于“非”(NOT),输出应与输入相反;对于“或”(OR),只要输入不全为 0,就输出 1;对于“与”(AND),两个输入必须都是 1 才能输出 1,否则为 0。这符合我们目前的逻辑。然而,图中未显示的“异或”(XOR)门无法简单地通过单个二极管表示。XOR 门检查两个输入是否相同,这意味着如果两个输入相同(如“0 和 0”或“1 和 1”),输出为 0;但如果不同(“1”和“0”,无论先后),它应输出 1,表示两者不同。这种识别两个输入是否相同的任务,无法通过单个二极管实现,这在明斯基的第一本书《感知器》中通过某种我不懂的方式在数学上得到了证明。

根据这本书,XOR 是线性不可分的(not linearly separable)。如果你在图表上标出四个可能的输入点,“真”的结果位于对角线的两端:(0,1) 和 (1,0);“假”的结果位于另外两个角:(0,0) 和 (1,1)。你无法画出一条直线把“真”点留在这一边,而把“假”点留在另一边。明斯基和帕佩特证明,正因如此,简单的感知器在数学上无法“学会”XOR——它的架构里根本无法表达这种逻辑。

逻辑门 | 输出为“1”的条件 | 感知器阶数 | 单条直线能否分隔? |

与 (AND) | 两个输入均为 1 | 1 | 是(线性可分) |

或 (OR) | 至少一个输入为 1 | 1 | 是(线性可分) |

异或 (XOR) | 只有一个输入为 1 | 2 | 否(线性不可分) |

本文开头的图表直观展示了明斯基和帕佩特在早期 AI 中发现的基础数学障碍。简单的感知器是一个线性分类器。这意味着它的决策逻辑仅限于在图表上画一条直线。如你直观所见,“真”点坐落在彼此相对的角落。无论你尝试如何画直线,总会至少有一个“真”点和“假”点落在同一侧。这仅意味着在 2D 平面上,总会有一个点让“假”和“真”产生重叠,使得该点在同一时刻既是真的又是假的,这导致其在 2D 平面上不可解。然而,在 3D 或更高维度的矩阵中,这是可以解决的。要解决 XOR 问题,你必须超越单一的一条线。最终结束“AI 寒冬”的方案是多层感知器(MLP)。不再让一个神经元承担所有工作,而是在输入和输出之间增加一个“隐藏层”。

你可以把隐藏层看作是创建两条不同的“线”或“过滤器”,同时观察数据。我不完全理解这些层,但放在这里作为背景参考。根据这个理论,我们可以让一个隐藏神经元充当 OR 门,将 (0,0) 与其他三点分开;另一个隐藏神经元充当 NAND(与非)门,将 (1,1) 与其他分开;最后由一个神经元组合这两个结果。它本质上在说:“如果第一条线是真的,且第二条线也是真的,那么这就是 XOR 匹配”。通过使用多个层,机器不再受限于单一的平面。它可以有效地折叠或扭曲数学空间,使“真”点落在一侧,而“假”点落在另一侧。在书中,作者其实承认了多层机器可以解决这些问题。然而,他们提出了两个维持了近 20 年的主要异议:虽然他们知道这种架构可行,但当时没有已知的数学方法来“教导”隐藏层;此外他们证明,随着问题变得复杂,所需的神经元数量和“权重”(系数)的大小会呈指数级增长,物理上超出了那个时代电脑的处理能力。不过,这些问题后来通过反向传播算法(Backpropagation Algorithm)和图形处理器(GPU)的兴起得到了解决。下一篇贴文会有更多内容。☀️

Reference / Recommended Readings

历史与技术基础

Minsky, M., & Papert, S. (1969). 《感知器:计算几何导论》。麻省理工学院出版社。(证明了 XOR 局限性和简单神经网络扩展问题的基础文本)。

Turing, A. M. (1950). “计算機器与智能”。《心智》(Mind)。(提出了“模仿游戏”或图灵测试的论文)。

McCarthy, J., Minsky, M. L., Rochester, N., & Shannon, C. E. (1955). 《达特茅斯人工智慧夏季研究项目建议书》。(将 AI 确立为正式学科的文件)。

Weizenbaum, J. (1966). “ELIZA——一个研究人机自然语言交流的电脑程序”。《ACM 通訊》。(第一个模拟心理医生的聊天机器人)。

Rumelhart, D. E., Hinton, G. E., & Williams, R. J. (1986). “通过误差反向传播学习表徵”。《自然》。(提供了训练多层网络的“反向传播”数学方法、解决 XOR 问题的突破性论文)。

媒体与流行文化

Hodges, A. (1983). 《艾伦·图灵:传》。Burnett Books。(电影《模仿游戏》改编的传记蓝本)。

Tyldum, M. (导演). (2014). 《模仿游戏》[电影]。黑熊影业。

Garland, A. (导演). (2014). 《机械姬》[电影]。DNA Films。

理解马文·明斯基的哲学

《心智社会》(The Society of Mind, 1985),马文·明斯基著:这本书比《感知器》通俗得多。他没有使用数学,而是使用了“代理人”(agents)的比喻——即微小的、无意识的过程协同工作,创造出智能头脑。它解释了他对意识如何产生的看法。

《情感机器》(The Emotion Machine, 2006),马文·明斯基著:他的后期作品,论证了情感并非独立于思考,而实际上是我们大脑用来解决不同类型问题的不同“思考方式”。

理解未来主义伦理及“病态”面

《奇点临近》,雷·库兹韦尔著:如果你对人体冷冻和永生感兴趣,库兹韦尔(明斯基的同代人)详细解释了这种“技术进步”的观点。

《成为机器》,马克·奥康奈尔著:这是一本关于超人类主义运动、人体冷冻和解决死亡探索的优秀新闻调研。它捕捉到了你提到的那种“才华横溢但潜在病态”的能量。

揭秘“隐藏层”与数学

“到底什么是神经网络?” (But what is a neural network?),3Blue1Brown (YouTube):这被广泛认为是关于“隐藏层”和“反向传播”如何运作的最佳视觉化解释,不需要数学学位即可看懂。它视觉化了你描述的“空间扭曲”过程。

《智能架构师》,马丁·福特著:访谈了现代 AI 先驱(包括那些“解决”了明斯基 XOR 问题的人),讨论了技术历史以及 AI 开发中伦理上的“手段与目的”之争。