Created on

Updated on

Marvin Minsky(IV): Backpropagation Algorithm and Graphics Processing Units (GPUs)

马文·明斯基 (IV):反向传播算法与图形处理器 (GPU)

Preface: Rise of GPU and how NVIDIA came to dominate the industry, with a little bit of intro to backpropagation algorithm. Co-written with Gemini.

If you are like me, someone who’s into arts and film and not at all tech, you probably didn’t hear about NVIDIA until maybe 2022, and you were probably like, who’s this Taiwanese called Jensen Huang that’s always wearing a leather jacket, and why the hell is the GPU industry so important and popping? If you were also like me, in that you’d like to procrastinate in doing everything, like all great artists do, like I’m writing and researching this instead of working on a screenplay, you probably didn’t spend time researching him despite your curiosity, so let me break it down for you. I’m learning this as I write. Gemini says, the popularity and eventual dominance of the Graphics Processing Unit (GPU) by NVIDIA resulted from a multi-decade transition from niche gaming hardware to the essential engine for artificial intelligence and scientific research. While no single person "invented" the concept, the technology evolved through several key milestones. In the 1970s-80s, arcade systems and early consoles used specialized graphics circuits to offload rendering tasks from the CPU. NEC μPD7220 (1982) was the first graphics display processor for personal computers integrated onto a single chip. 3Dlabs Glint Gamma (1997), A UK-based company, 3Dlabs, introduced the workstation-class "Glint Gamma," which they referred to as a "geometry processor unit" (GPU). Under CEO Jensen Huang, NVIDIA coined and popularized the acronym "GPU" (Graphics Processing Unit). They marketed the GeForce 256 as the "world's first GPU" because it was the first consumer-grade single-chip processor to handle complex transform and lighting (T&L) operations previously managed by the CPU.

The GPU's rise was driven by its unique ability to perform parallel processing—the capacity to execute thousands of simple mathematical operations simultaneously. The gaming revolution in the 1990s created demand for realistic 3D graphics in video games that required rapid manipulation of pixels, a task perfectly suited for parallel architecture. Beyond gaming, GPUs became essential for non-gaming tasks, such as Windows Vista’s graphical interface in 2006. GPUs were also found to be exceptionally efficient at the massive matrix and vector calculations required to train deep neural networks. This turned GPUs from "niche gaming parts" into essential hardware for every major data center. Jensen Huang co-founded NVIDIA in 1993, the year of the rooster, the year I was born. NVIDIA is as old as I am, I see. The company was conceived in 1993 at a Denny’s restaurant in San Jose, California. Jensen Huang, then an engineer at LSI Logic, met with Chris Malachowsky and Curtis Priem, both from Sun Microsystems, to discuss the future of computing. They believed the next wave of computing would be accelerated computing, specifically dedicated to solving problems that general-purpose processors (CPUs) couldn't handle efficiently. They chose video games as their first target market because gaming had a massive demand for high-performance graphics and offered a path to high-volume sales that could fund their research into more complex computational problems.

The early years were fraught with technical and financial peril. Their first chip, the NV1 (released in 1995), used a mathematical technique called "quadrilateral primitives" instead of the "triangles" that were becoming the industry standard. When Microsoft announced that its DirectX software would standardize on triangles, the NV1 became obsolete almost overnight. By 1996, the company was weeks away from running out of money. Huang had to lay off roughly half the staff and put all the company's remaining resources into a "hail mary" project: the RIVA 128. The RIVA 128 was a success, selling one million units in its first four months and saving the company. This set the stage for their major breakthrough in 1999 with the launch of the GeForce 256, which NVIDIA marketed as the "world's first GPU". NVIDIA's eventual dominance over competitors like 3Dfx and ATI was driven by Jensen Huang's unique management and technical strategies. While the rest of the industry released new chips every 18 months, Huang pushed NVIDIA to release new products every six months, effectively outrunning the competition. In 2007, the company released CUDA, a software layer that allowed its gaming chips to be used for general-purpose mathematical research. This was a massive financial risk that didn't pay off for nearly a decade, but it eventually made NVIDIA the default hardware for the AI revolution.

The transition from 2007 to the present is the era where NVIDIA shifted from being a "gaming company" to becoming the backbone of the global economy. This transformation was driven by a long-term bet on software called CUDA, which perfectly positioned them for the AI explosion. Jensen Huang believed that "accelerated computing" would eventually be needed for every industry, not just games. His realization that AI would be the defining future of the company crystallized around 2012 with the success of AlexNet. The world changed in 2012 with AlexNet, a neural network that won the ImageNet computer vision competition by a massive margin. AlexNet was trained using two NVIDIA GTX 580 GPUs. This proved to the scientific world that NVIDIA’s parallel processing was thousands of times more efficient than CPUs for training neural networks. Suddenly, every AI researcher in the world needed NVIDIA hardware.

They created specialized software (like cuDNN) for AI developers, ensuring that if you wanted to build AI, it worked best—and sometimes only—on NVIDIA. They began selling entire racks of servers (the DGX systems) rather than just individual cards. In 2020, NVIDIA acquired Mellanox for $7 billion. Mellanox specialized in high-speed data center networking, allowing NVIDIA to connect thousands of GPUs together into a single "giant GPU". NVIDIA became a household name when the world realized that the generative AI revolution (ChatGPT, Midjourney, etc.) was physically impossible without their chips. As of 2025, NVIDIA controls approximately 92% of the discrete GPU market and a similar share of the data center AI market. In 2024, NVIDIA’s market cap crossed $3 trillion, briefly making it the most valuable company in the world. While competitors like Intel and AMD viewed the GPU as a peripheral for video games, NVIDIA viewed it as a universal computer. By spending 15 years building the software (CUDA) and networking (Mellanox) to support that vision, they were the only ones ready when the "AI moment" finally arrived.

And that solved the Minsky’s second proposed problem in one of the two impossibles in his first book, Perceptrons, that is he proved that as problems become more complex, the number of neurons and the size of the "weights" (coefficients) needed can grow exponentially, becoming physically impossible for computers of that era to handle. With GPU, it is now possible to run those calculations. However, this doesn’t solve problem #1 yet, which was, let me remind you, even though they knew solving the XOR problem was impossible on a 2D plane, with hidden layers, it was possible to solve, yet there was no known mathematical way to "teach" the hidden layers to a machine. This was later also solved, by this algorithm called the Backpropagation.

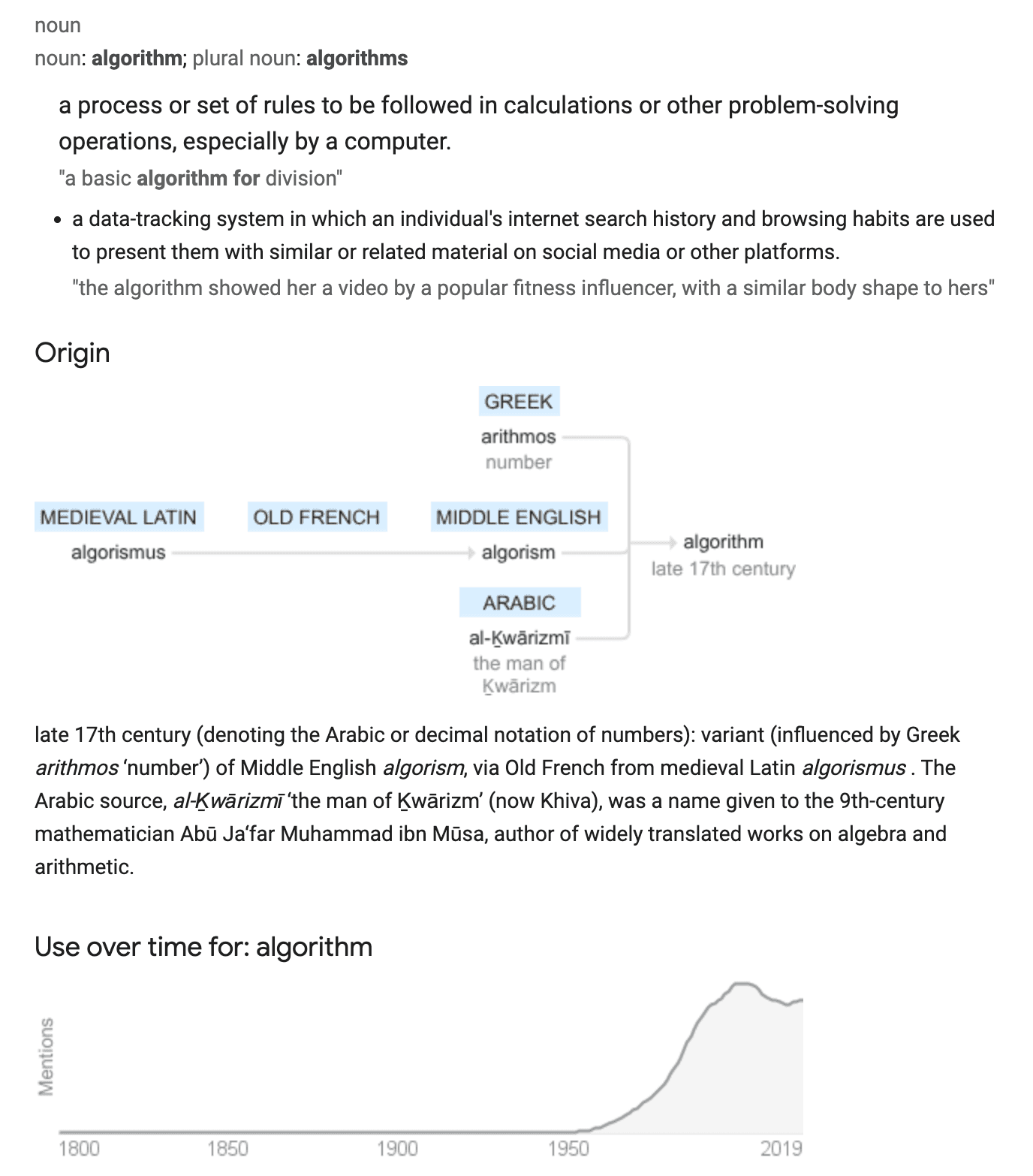

But first, let me explain what an algorithm is. In today’s context, when we talk about “algorithms” in tech, we are talking about an algorithm we can teach to the machine. Since the late 17th century (denoting the Arabic or decimal notation of numbers), it meant a variant (influenced by Greek arithmos ‘number’) of Middle English algorism, via Old French from medieval Latin algorismus . The Arabic source, al-Ḵwārizmī ‘the man of Ḵwārizm’ (now Khiva), was a name given to the 9th-century mathematician Abū Ja‘far Muhammad ibn Mūsa, author of widely translated works on algebra and arithmetic. But now, this word means, “a process or set of rules to be followed in calculations or other problem-solving operations, especially by a computer”, or “a data-tracking system in which an individual's internet search history and browsing habits are used to present them with similar or related material on social media or other platform”. It doesn’t just mean a process of calculations anymore, it means a process of calculations you can teach to a machine, not just humans.

The first time someone was able to teach an “algorithm” to a machine was Turning, as mentioned in the previous post, and the movie The Imitation Game. However, it was the second breakthrough that taught machines how to “figure out” a formula by itself. It was in 1959, Arthur Samuel developed one of the world's first successful examples of a "learning" algorithm by programming a computer to play checkers. This program was a landmark in AI because it demonstrated that a machine could achieve master-level performance through experience rather than just following rigid, pre-coded instructions. Samuel's program utilized a success-based reward system. It functioned by evaluating the state of the board and adjusting its strategy based on the outcome of its moves. The algorithm had to decide how much "credit" or "blame" to give to specific moves and strategies for a win or loss. It assigned "weights" to different board features by correlating them with eventual success. If the existing strategy (the "ingredients") was inadequate, the algorithm could test products of preexisting terms to invent new ways to evaluate the game board. Most notably, the program could play against itself, essentially creating a training loop that allowed it to learn at a rate much faster than playing against humans alone. Samuel’s work laid the groundwork for many concepts we see in modern AI. It proved that machines do not always need to be explicitly "programmed" for every scenario; they can be given a framework to learn from data.

In 1986, Backpropagation algorithm emerged. This algorithm provided the mathematical breakthrough needed to "teach" deep networks. The Backpropagation algorithm is the fundamental mathematical method used to train multi-layer neural networks by enabling them to learn from their errors. It effectively solved the "learning problem" that Minsky and Papert identified in 1969, which had previously limited AI to simple, single-layer tasks. The process involves a continuous loop of testing and adjustment. Data is fed into the network, passing through various "hidden layers" where each neuron applies a weight to the information before reaching an output. The network's output is compared against the correct answer to determine the "error" or "loss". The algorithm calculates how much each specific weight in the network contributed to that error. It "propagates" this error signal backward from the output layer through the hidden layers. Using calculus (specifically gradient descent), the algorithm tweaks every weight just enough to make the next prediction more accurate. This way, the algorithm can teach itself to be closer and closer to the expected result by adjusting the coefficients. This way, we have successfully given the machine instructions on how to figure out the math.

Teaching algorithms reached a "Big Bang" moment when massive datasets and increased compute power aligned. Unlike traditional sequential computers, modern GPUs (Graphics Processing Units) allow algorithms like backpropagation to run on millions of "agents" simultaneously. A GPU achieves massive parallelism by utilizing a "throughput-oriented" architecture designed to perform thousands of simultaneous operations, which is the physical realization of what Minsky called parallel computation. While a standard CPU (Central Processing Unit) is like a single scholar solving complex problems one by one (serial), a GPU is like a stadium full of students solving millions of simple math problems at the same time. More on parallel computation in the next post. ☀️

References & Recommended Reading

1. The NVIDIA Origin Story: From Gaming to "Universal Computer"

Hruska, J. (2014). "The History of the GPU: 1990s and Beyond." ExtremeTech.

Why read: A deep dive into the 3D graphics war. It covers the RIVA 128 (the "Hail Mary" project) and the GeForce 256—the chip that birthed the term "GPU."

CNBC Tech Reports (2023). "How NVIDIA Grew From Gaming to AI Giant."

Why read: Perfect for non-techies. It documents Jensen Huang’s pivotal "bet the company" moments, from the near-bankruptcy in 1996 to the launch of CUDA in 2007.

NVIDIA Press Archive (1999). "NVIDIA GeForce 256: The World’s First GPU."

Why read: Historical context on why "Transform & Lighting" (T&L) moving to a single chip was the first step toward the parallel processing power we use for AI today.

2. The 2012 "Big Bang": AlexNet and the Death of "Impossible"

Krizhevsky, A., Sutskever, I., & Hinton, G. E. (2012). "ImageNet Classification with Deep Convolutional Neural Networks."

Why read: This is the most important paper in modern AI. It describes how two NVIDIA GTX 580 GPUs solved the "impossible" complexity Minsky warned about in Perceptrons.

Viso Suite (2024). "AlexNet: Revolutionizing Deep Learning in Image Classification."

Why read: A clear breakdown of how AlexNet used ReLU (for speed) and GPUs (for scale) to crush the competition, proving that "brute force" math was the path forward.

3. Solving the "Math Problem": Backpropagation & Arthur Samuel

Samuel, A. L. (1959). "Some Studies in Machine Learning Using the Game of Checkers." IBM Journal.

Why read: The primary source for the first "learning" machine. It explains the "weights" and "scoring polynomials" you mentioned—the ancestors of modern neural network parameters.

Rumelhart, D. E., Hinton, G. E., & Williams, R. J. (1986). "Learning representations by back-propagating errors." Nature.

Why read: The math that saved AI. It describes how to "teach" hidden layers using calculus (gradient descent), finally solving Minsky’s XOR problem.

Thinkstack AI (2025). "What is the XOR Problem? Geometric and Representational Intuition."

Why read: A modern, visual explanation of why Minsky thought AI was "stuck" in 2D and how hidden layers allow us to "fold" the math into 3D to solve it.

4. The 2026 Landscape: Vertical Integration & The "Giant GPU"

Dell’Oro Group (Dec 2025). "Data Center Infrastructure in 2026: The Rise of Vera Rubin."

Why read: Outlines NVIDIA’s latest shift—from selling chips to selling "racks" of servers. It explains how the Mellanox acquisition turned thousands of small GPUs into one "giant GPU."

TechPolicy Press (2025). "NVIDIA’s Strategic Blueprint: The Generative Industrial Revolution."

Why read: Discusses Jensen Huang’s 2026 vision of "Sovereign AI" and why nations, not just companies, are now buying GPUs as if they were oil or gold.

🧬 Key "Artist-Friendly" Definitions

Parallel Computing (GPU): Like a stadium of 10,000 students each solving one simple addition problem at the same time.

Serial Computing (CPU): Like one genius professor solving a complex equation line by line.

Backpropagation: The "Review Session" where the machine looks at its wrong answer, sees which "neurons" caused the mistake, and nudges them to do better next time.

CUDA: The "Translator" that allows scientists to speak to a gaming chip and tell it to do physics or biology instead of just drawing 3D monsters.

前言:GPU 的崛起与 NVIDIA 如何统治行业,以及反向传播算法的简要介绍。由我与 Gemini 协作编写。

如果你和我一样,是一个热爱艺术和电影、对科技一窍不通的人,你可能直到 2022 年左右才听说过 NVIDIA(英伟达)。你当时可能会想:这个总穿皮夹克的、叫黄仁勋的台湾人是谁?为什么 GPU 行业突然变得这么重要、这么火爆?如果你也像我一样,喜欢把每件事都拖到最后一刻才做(就像所有伟大的艺术家一样——比如我现在就在写这个调研,而不是去写剧本),你可能虽然好奇,但并没花时间去研究他。那么,让我来为你拆解一下,我也是边写边学的。

Gemini 说,NVIDIA 的图形处理器(GPU)之所以能流行并最终统治市场,是因为它经历了几十年的转型:从非主流的游戏硬件,变成了人工智能和科学研究的核心引擎。虽然没有哪个人敢说自己“发明”了这个概念,但这项技术通过几个关键里程碑不断进化。在 20 世纪 70-80 年代,街机系统和早期游戏机使用专门的图形电路来分担 CPU 的渲染任务。1982 年的 NEC μPD7220 是第一款集成在单芯片上的个人电脑图形显示处理器。1997 年,一家名为 3Dlabs 的英国公司推出了工作站级别的“Glint Gamma”,他们称之为“几何处理单元”(GPU)。在首席执行官黄仁勋的领导下,NVIDIA 创造并推广了“GPU”(图形处理器)这个缩写。他们将 GeForce 256 营销为“全球首款 GPU”,因为它是第一款能处理复杂“变换与光照”(T&L)操作(以前由 CPU 负责)的消费级单芯片处理器。

GPU 的崛起是由其独特的“并行处理”能力驱动的——即同时执行数千个简单数学运算的能力。90 年代的游戏革命创造了对逼真 3D 图形的需求,这需要快速处理大量像素,而这种任务非常适合并行架构。除了游戏,GPU 在非游戏任务中也变得不可或缺,比如 2006 年 Windows Vista 的图形界面。人们还发现,GPU 在处理训练深度神经网络所需的超大规模矩阵和向量计算时异常高效。这让 GPU 从“小众的游戏零件”变成了每个大型数据中心必备的核心硬件。

黄仁勋在 1993 年共同创立了 NVIDIA,那年是鸡年,也是我出生的那一年。看来 NVIDIA 和我一样大。这家公司诞生于加州圣何塞的一家 Denny's 餐厅。当时在 LSI Logic 担任工程师的黄仁勋,与来自 Sun Microsystems 的 Chris Malachowsky 和 Curtis Priem 会面,讨论计算机的未来。他们相信下一波计算浪潮将是“加速计算”,专门解决通用处理器(CPU)无法高效处理的问题。他们选择视频游戏作为第一个目标市场,因为游戏对高性能图形有巨大需求,且能带来大量销量,从而支撑他们研发更复杂的计算问题。

早年间,公司充满了技术和财务危机。他们的第一款芯片 NV1(1995 年发布)使用了一种叫“四边形原语”的数学技术,而不是当时正成为行业标准的“三角形”。当微软宣布其 DirectX 软件将统一使用三角形标准时,NV1 几乎一夜之间就被淘汰了。到 1996 年,公司的资金只够维持几周。黄仁勋不得不解雇了大约一半的员工,并将公司剩余的所有资源投入到一个“孤注一掷”的项目中:RIVA 128。RIVA 128 获得了成功,前四个月就卖出了 100 万颗,救活了公司。这为 1999 年推出 GeForce 256 打下了基础,NVIDIA 将其宣传为“全球首款 GPU”。

NVIDIA 最终战胜 3Dfx 和 ATI 等竞争对手,归功于黄仁勋独特的管理和技术战略。当行业其他公司每 18 个月发布一次新芯片时,黄仁勋推动 NVIDIA 每 6 个月就发布一次新产品,有效地跑赢了竞争对手。2007 年,公司发布了 CUDA,这是一个软件层,允许其游戏芯片用于通用数学研究。这是一次巨大的财务冒险,近十年都没有回报,但它最终让 NVIDIA 成为了 AI 革命的默认硬件。

从 2007 年至今,是 NVIDIA 从一家“游戏公司”转变为全球经济支柱的时代。这一转变是由对名为 CUDA 的软件的长期赌注驱动的,这让它们在 AI 爆发时占据了绝佳位置。黄仁勋相信,“加速计算”最终将是每个行业都需要的,而不只是游戏。他在 2012 年左右通过 AlexNet 的成功,明确意识到 AI 将定义公司的未来。2012 年,AlexNet 彻底改变了世界,这个神经网络以巨大优势赢得了 ImageNet 计算机视觉大赛。AlexNet 是使用两块 NVIDIA GTX 580 GPU 训练出来的。这向科学界证明,在训练神经网络方面,NVIDIA 的并行处理效率比 CPU 高出数千倍。突然之间,全世界的 AI 研究者都需要 NVIDIA 的硬件了。

他们为 AI 开发者创建了专门的软件(如 cuDNN),确保如果你想构建 AI,它在 NVIDIA 上的运行效果最好(有时甚至是唯一选择)。他们开始销售整机柜的服务器(DGX 系统),而不仅仅是单张显卡。2020 年,NVIDIA 以 70 亿美元收购了 Mellanox。Mellanox 专精于高速数据中心网络,这让 NVIDIA 能够将数千个 GPU 连接在一起,形成一个“巨型 GPU”。当全世界意识到生成式 AI 革命(ChatGPT、Midjourney 等)离开英伟达芯片在物理上根本无法实现时,NVIDIA 成为了一个家喻户晓的名字。截至 2025 年,NVIDIA 控制了约 92% 的独立 GPU 市场和类似份额的数据中心 AI 市场。2024 年,NVIDIA 的市值突破了 3 万亿美元,曾一度成为全球市值最高的公司。当英特尔和 AMD 等竞争对手还把 GPU 看作游戏的周边外设时,NVIDIA 把它看作一台通用计算机。通过花费 15 年时间构建支撑这一愿景的软件(CUDA)和网络(Mellanox),当“AI 时刻”最终到来时,只有他们准备好了。

这也解决了明斯基在其第一本书《感知器》中提出的两个“不可能”中的第二个问题。明斯基证明了随着问题变得越来越复杂,所需的神经元数量和“权重”(系数)的大小会呈指数级增长,这超出了那个时代计算机的处理极限。有了 GPU,运行这些计算现在成为了可能。然而,这还没有解决第一个问题:提醒你一下,尽管他们知道在 2D 平面上解决 XOR(异或)问题是不可能的,但通过“隐藏层”可以解决,只是当时还没有已知的数学方法来“教”机器如何处理隐藏层。这个问题后来也被解决了,靠的是一种叫“反向传播”(Backpropagation)的算法。

但首先,让我解释一下什么是“算法”。在今天的科技语境下,我们谈论的算法是可以教给机器的算法。从 17 世纪末开始(指阿拉伯数字表示法),这个词源自中古英语 algorism,通过古法语源自中世纪拉丁语 algorismus。其阿拉伯语语源为 al-Ḵwārizmī,意为“花剌子模的人”,是赋予 9 世纪数学家阿布·贾法尔·穆罕默德·本·穆萨的名字,他撰写了关于代数和算术的广泛传播的著作。但现在,这个词的意思是“计算或其他解决问题操作中遵循的一过程或一组规则,尤其是由计算机执行的”,或者“一种数据追踪系统,利用个人的互联网搜索记录和浏览习惯在社交媒体或其他平台上向其推送相似或相关内容”。它不再仅仅意味着一个计算过程,它意味着你可以教给机器(而不仅仅是人类)的计算过程。

第一次有人能把一种“算法”教给机器是图灵,就像之前的文章和电影《模仿游戏》中提到的那样。然而,是第二次突破教会了机器如何自己“想出”公式。1959 年,亚瑟·塞缪尔(Arthur Samuel)通过编写一个下跳棋的程序,开发了世界上第一个成功的“学习”算法案例。这个程序是 AI 史上的里程碑,因为它证明了机器可以通过经验达到大师级水平,而不仅仅是遵循死板的预设指令。塞缪尔的程序利用了基于成功的奖励系统。它通过评估棋盘状态,并根据移动结果调整策略来运行。算法必须决定给特定的移动和策略多少“功劳”或“惩罚”。它通过将不同的棋盘特征与最终的胜利联系起来,为它们分配“权重”。如果现有的策略(“配方”)不够好,算法可以测试现有术语的组合,从而发明评估棋盘的新方法。最值得注意的是,程序可以自己跟自己下棋,本质上创建了一个训练循环,使其学习速度比单纯跟人类对战快得多。塞缪尔的工作为现代 AI 的许多概念奠定了基础。它证明了机器并不总是需要针对每种情况进行显式“编程”;可以给它们一个框架,让它们从数据中学习。

1986 年,反向传播算法(Backpropagation)出现了。这种算法提供了“教授”深度网络所需的数学突破。反向传播算法是训练多层神经网络的基础数学方法,它使网络能够从错误中学习。它有效地解决了明斯基和帕珀特在 1969 年提出的“学习问题”,此前这一问题将 AI 限制在简单的单层任务中。这个过程涉及一个持续的“测试与调整”循环。数据被输入网络,通过各种“隐藏层”,每个神经元在信息到达输出端之前都会对其施加一个权重。将网络的输出与正确答案进行比较,以确定“误差”或“损失”。算法会计算网络中的每个特定权重对该误差贡献了多少。它将这个误差信号从输出层通过隐藏层“反向传播”。利用微积分(具体说是梯度下降法),算法微调每个权重,使下一次预测更准确。通过这种方式,算法可以通过调整系数,教自己越来越接近预期结果。就这样,我们成功地给了机器关于“如何自己算明白这道数学题”的说明书。

当海量数据集与增强的计算能力相结合时,教学算法迎来了“大爆炸时刻”。与传统的顺序计算机不同,现代 GPU 允许反向传播等算法在数百万个“代理”上同时运行。GPU 通过利用“吞吐量导向”的架构实现了大规模并行,这种架构旨在同时执行数千个操作,这正是明斯基所说的“并行计算”的物理实现。如果标准的 CPU(中央处理器)像一个孤独的学者,一个接一个地解决复杂的难题(串行);那么 GPU 就像一个坐满了学生的体育场,同时在解决数百万个简单的数学题。关于并行计算的更多内容,请看下一篇。☀️

References & Recommended Reading

1. NVIDIA 的起源故事:从游戏到“通用计算机”

Hruska, J. (2014). "The History of the GPU: 1990s and Beyond." ExtremeTech.

推荐理由: 深度挖掘 3D 图形大战。涵盖了 RIVA 128(“孤注一掷”项目)和 GeForce 256——诞生了“GPU”这个词的芯片。

CNBC 科技报告 (2023). "How NVIDIA Grew From Gaming to AI Giant."

推荐理由: 非常适合非技术人员。记录了黄仁勋的关键“赌命”时刻,从 1996 年的几近破产到 2007 年 CUDA 的发布。

NVIDIA 新闻档案 (1999). "NVIDIA GeForce 256: The World’s First GPU."

推荐理由: 历史背景。解释了为什么将“变换与光照”(T&L)移至单芯片,是迈向今天 AI 所用并行处理能力的第一步。

2. 2012 年的“大爆炸”:AlexNet 与“不可能”的终结

Krizhevsky, A., Sutskever, I., & Hinton, G. E. (2012). "ImageNet Classification with Deep Convolutional Neural Networks."

推荐理由: 现代 AI 最重要的论文。描述了两块 NVIDIA GTX 580 GPU 如何解决了明斯基在《感知器》中警告过的“不可能”的复杂性。

Viso Suite (2024). "AlexNet: Revolutionizing Deep Learning in Image Classification."

推荐理由: 清晰解析了 AlexNet 如何通过 ReLU(提速)和 GPU(规模)击败竞争对手,证明了“暴力数学”才是出路。

3. 解决“数学问题”:反向传播与亚瑟·塞缪尔

Samuel, A. L. (1959). "Some Studies in Machine Learning Using the Game of Checkers." IBM Journal.

推荐理由: 第一台“学习”机器的第一手资料。解释了你提到的“权重”和“评分多项式”——现代神经网络参数的祖先。

Rumelhart, D. E., Hinton, G. E., & Williams, R. J. (1986). "Learning representations by back-propagating errors." Nature.

推荐理由: 拯救了 AI 的数学。描述了如何利用微积分(梯度下降)来“教授”隐藏层,最终解决了明斯基的 XOR 问题。

Thinkstack AI (2025). "What is the XOR Problem? Geometric and Representational Intuition."

推荐理由: 现代且直观的解释。说明了为什么明斯基认为 AI 被“困”在 2D 空间,以及隐藏层如何让我们将数学“折叠”进 3D 空间来解决它。

4. 2026 年的图景:垂直整合与“巨型 GPU”

Dell’Oro Group (2025 年 12 月). "Data Center Infrastructure in 2026: The Rise of Vera Rubin."

推荐理由: 概述了 NVIDIA 的最新转变——从卖芯片到卖“整机柜”服务器。解释了收购 Mellanox 如何将数千个小 GPU 变成一个“巨型 GPU”。

TechPolicy Press (2025). "NVIDIA’s Strategic Blueprint: The Generative Industrial Revolution."

推荐理由: 讨论了黄仁勋 2026 年关于“主权 AI”的愿景,以及为什么现在不仅是公司,连国家都在像买石油或黄金一样购买 GPU。

🧬 Key "Artist-Friendly" Definitions

并行计算 (GPU): 就像一个坐满一万名学生的体育场,每个人同时在做一道简单的加法题。

串行计算 (CPU): 就像一个天才教授,一行一行地解一道复杂的方程。

反向传播: 就像一个“复习环节”,机器看着自己的错误答案,找出是哪些“神经元”导致了错误,并督促它们下次做得更好。

CUDA: 一个“翻译官”,让科学家能和游戏芯片对话,告诉它去处理物理或生物问题,而不仅仅是画 3D 小怪兽。