Filmmaking in the Age of AI (38): Previsualization

One of Jurassic Park (1993)'s great achievements

Preface: Co-written with Claude.

Before a single camera rolls on a major VFX film, a version of that film already exists. It's rough, it's blocky, the characters look like crash test dummies and the environments look like architecture software. But the camera moves. The action plays out. The cuts are timed. The sequences that would otherwise cost a million dollars a day to figure out on set have already been figured out on a laptop. This is previsualization. Understanding it, along with its siblings techvis and postvis, means understanding how a modern film actually gets made before the world ever sees it. It's essential to understand that on VFX-heavy projects, previsualization is not optional. It's a must.

Modern previs is a fully animated 3D rough cut of a film's most complex sequences. The assets are deliberately low resolution: the characters are simple geometry, the environments are blocked-out shapes, the lighting is flat and functional. The point is not visual quality. The point is decision-making. In previs, the director works with a previs team (animators, a previs supervisor, sometimes a layout artist) to block out sequences shot by shot. Where is the camera? What lens? How does it move? Where are the characters in space relative to each other and the environment? How long does each shot last? What is the editorial rhythm of the sequence? Every one of those questions answered in previs is a question that doesn't need to be answered on set. On set, answering those questions costs time (yes, a lot of time), and time on a film set costs money, often hundreds of thousands of dollars a day. A director who walks into a complex action sequence without previs is making those decisions under maximum pressure with maximum cost attached. A director who walks in with locked previs is executing a plan, not discovering one.

Previs also serves as the communication document between the director and every other department. The production designer knows from previs what environments need to be built and to what extent. The VFX supervisor knows what needs to be practical and what needs to be digital. The stunt coordinator knows the choreography of action sequences and can design around the camera positions. The DP can prep their lighting approach knowing what the camera is doing. Everyone is looking at the same document. The previs doesn't get followed exactly. It almost never does. But the decisions made in previs give the production a baseline — a starting point — that keeps the shoot from drifting into expensive improvisation.

Previsualization didn't start with computers. It started with drawings. One of the first films to use digital previs extensively was Jurassic Park (1993). I was going to start with this film and all the breakthroughs it made, but I decided to walk through the pipeline first, so here it goes. Before previs, there was storyboarding. Sketching a film shot by shot before production begins has existed since the 1930s. Disney's animation department formalized it as a production tool, and it migrated into live-action filmmaking as a way to plan complex sequences that couldn't be improvised on set. Hitchcock famously storyboarded his films in granular detail before shooting. Kurosawa sketched his battle sequences by hand. The storyboard was the original previs: a way to make decisions about coverage, blocking, and editing before committing to the cost of production.

The 2D animatic came next. Take your storyboard panels, scan them, cut them together in an editing timeline with rough timing and temporary sound. Now you have a moving version of your sequence that plays in real time. Directors could watch their sequences unfold, adjust timing, make editorial decisions, and hand something to the department heads that communicated not just what individual shots looked like but how they flowed together. Animatics were standard practice by the 1980s and remain a valid tool today for sequences that don't require full 3D previs.

The shift to digital 3D previs happened gradually through the late 1980s and early 1990s as workstations became powerful enough to run rudimentary real-time 3D. The first productions to use anything resembling modern digital previs were doing so with tools that were barely adequate: rough polygonal environments, no textures, placeholder characters. But the core value was there. You could move a virtual camera through a three-dimensional space and record what it saw.

Jurassic Park in 1993 was an early turning point. Spielberg's team prevized the dinosaur sequences digitally to work out how the CG animals would move through the live-action environments, where the cameras needed to be, and what the cuts would look like before any footage existed. This wasn't just planning — it was proof of concept. The previs told the production what was actually achievable and what the final film could look like.

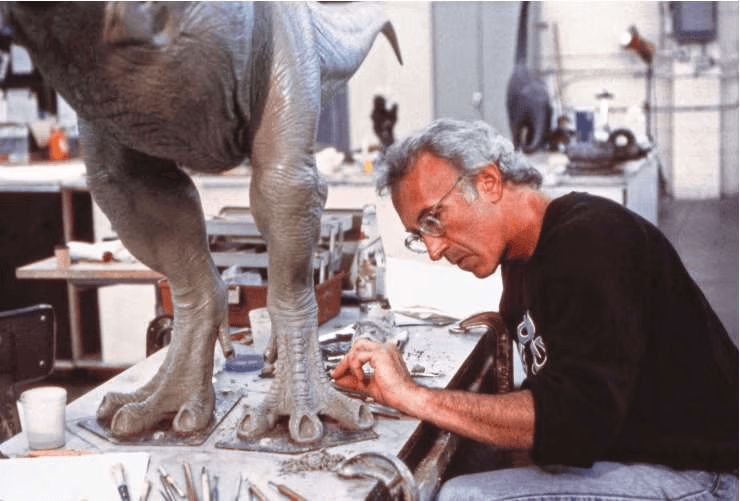

Spielberg originally hired Phil Tippett (who has a studio here in Berkeley: https://www.tippett.com) to create the dinosaurs using go-motion, an evolution of stop-motion that Tippett had refined on the Star Wars films. In go-motion, rods attached to the model were moved during the exposure, which introduced motion blur and gave the animation a physical weight that traditional stop-motion lacked. Tippett's team began building and animating dinosaur models. That was the plan. The film was going to be made the way ambitious creature films had always been made: practically, physically, by artists manipulating real objects in front of a camera. The image above shows the stop-motion work Tippett made back in the day, and the final shots stayed fairly close to that stop-motion previs.

Then two animators at ILM, Steve Williams and Mark Dippé, built a digital test without being asked to. They modeled a T-Rex skeleton and animated it walking, running, and moving through a virtual space. They showed it to Dennis Muren, who showed it to Spielberg. The test ran for about thirty seconds. When it finished, Spielberg turned to Tippett and said the digital dinosaurs were going to replace the go-motion work. Tippett watched the test and said, "I think I'm extinct." A version of that line made it into the film. He clearly wasn't extinct, though, especially considering how well his studio is doing.

What the ILM test demonstrated wasn't just that CG dinosaurs were possible. It was that they could move. Go-motion, however sophisticated, produced images of physical objects. The CG dinosaurs existed in a virtual three-dimensional space that the camera could move around freely, that could be lit from any direction, and that could interact with a live-action environment without the constraints of physical photography. The creative possibilities were different in kind, not just degree. Does this make sense? Think of it this way: previously, you drew storyboards and hoped that whatever you shot on set came close enough to them that the CG added afterward would somehow work. There's a lot of uncertainty there. Going from storyboards or stop-motion to post is quite a leap of faith. With digital previs, however, you can see how the cameras, the live-action actors, and the CG characters move together in a virtual 3D scene (today, often built in a game engine) before anything is shot.

CG in 1993 couldn't convincingly handle close detail under scrutiny — skin texture, surface imperfection, the specific way an animal's mass settles when it stops moving. Stan Winston's practical animatronics and full-scale puppet constructions handled those shots.

The CG handled the shots that required full-body motion at a scale and speed no practical construction could match: the T-Rex pursuing the Jeep in the rain, the Gallimimus stampede, the Brachiosaurus standing at full height against the sky. The film used roughly six minutes of digital dinosaur footage in total, which is not a lot of CG at all. This shows once again that a story doesn't need a lot of CG to be convincing. The question is how to use it most effectively.

This was the specific value of digital previs that Jurassic Park proved: on a production combining live-action photography with digital elements that didn't exist yet, previs gave the director a way to see the finished shot before committing to how it would be built. You could look at a previs shot of the T-Rex attacking the car and decide whether the camera should be lower, whether the animal was too close, whether the cut should come earlier. Those decisions, made in previs, locked the approach before anyone spent a day shooting on location in Hawaii or a week rendering at ILM. The cost of a wrong decision in previs was a conversation. The cost of the same wrong decision on set or in post was measured in days and money.

The production that formalized previs as a department with its own infrastructure and crew was Star Wars: The Phantom Menace in 1999. George Lucas built a dedicated visualization group at ILM and used digital previs to plan virtually the entire film — action sequences, environments, creature interactions, everything. Lucas was essentially directing the film twice: once in previs and once on set. The previs became the blueprint that every department worked from. The podrace sequence, the Coruscant cityscapes, the lightsaber battles — all of it was worked out in three dimensions before a camera was pointed at Liam Neeson. After The Phantom Menace, dedicated previs companies began to emerge. The most significant was The Third Floor, founded in 2004, which became the dominant previs vendor in Hollywood and worked on films ranging from Avatar to the Marvel Cinematic Universe to the Star Wars sequels. What had been an in-house capability at a handful of major studios became an industry.

James Cameron pushed previs into new territory with Avatar in 2009. Cameron built a virtual production environment where he could operate a handheld camera in the real world and see its output translated in real time into the CG world of Pandora — with the characters, environments, and lighting all visible on a monitor as if the film had already been shot. He was directing previs the way a director directs an actor — intuitively, physically, in real time. This approach — the virtual camera — collapsed the distance between previs and production and pointed toward where filmmaking was going.

Techvis (technical visualization) is previs's more engineering-minded sibling. Where previs is primarily a creative tool for the director, techvis is a technical tool for the departments that have to execute the physical reality of what the director wants. Previs answers the question of what a sequence looks like. Techvis answers the question of how it gets built and shot in the real world. A techvis document for a complex action sequence might include the exact dimensions of the required camera crane, the position and travel path of the dolly track, the height of the greenscreen relative to the camera position at every point in the move, the sightlines that determine where practical set elements end and visual effects begin, and the positions of stunt rigs relative to camera to ensure they fall outside the frame. It's an engineering diagram more than a film.

The DP uses techvis to plan physical camera rigs and lens choices. The special effects coordinator uses it to position practical effects elements, such as pyrotechnics and mechanical rigs, in relation to the camera and the actors. The VFX supervisor uses it to confirm that what the director wants in previs is achievable within the practical constraints of the shoot: that the greenscreen is large enough, that the tracking markers will be visible, that the reference capture can happen in the time available.

Techvis emerged as previs became more sophisticated and productions discovered a gap between what a sequence looked like in the virtual environment and what was physically achievable on stage or on location. The virtual camera can go anywhere. A real crane cannot. Techvis bridges that gap by translating creative intent into physical specifications.
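A techvis question like "how tall does the greenscreen need to be at every point in the move?" comes down to simple lens geometry. Here is a minimal sketch in Python. It assumes an ideal pinhole lens, a level camera with no tilt, and a flat screen perpendicular to the lens axis. `CameraSample`, `required_screen_height_m`, the sensor height, and every value in the example move are hypothetical illustrations, not numbers from any real production or techvis tool.

```python
import math
from dataclasses import dataclass


@dataclass
class CameraSample:
    """One hypothetical sample along a camera move.

    Assumes a level camera (no tilt) facing a flat screen that is
    perpendicular to the lens axis.
    """
    distance_to_screen_m: float  # horizontal distance from lens to screen plane
    lens_height_m: float         # lens height above the stage floor
    focal_length_mm: float       # focal length at this point in the move


def vertical_fov_deg(focal_length_mm: float, sensor_height_mm: float) -> float:
    """Vertical angle of view of an ideal pinhole (rectilinear) lens."""
    return math.degrees(2 * math.atan(sensor_height_mm / (2 * focal_length_mm)))


def frame_top_on_screen_m(sample: CameraSample, sensor_height_mm: float) -> float:
    """Height at which the top edge of frame lands on the screen plane.

    By similar triangles the visible height at distance d is
    d * sensor_height / focal_length (equal to 2 * d * tan(fov / 2)),
    and a level camera sees half of it above the lens.
    """
    visible_height_m = (
        sample.distance_to_screen_m * sensor_height_mm / sample.focal_length_mm
    )
    return sample.lens_height_m + visible_height_m / 2


def required_screen_height_m(
    move: list[CameraSample], sensor_height_mm: float, margin_m: float = 0.0
) -> float:
    """Highest frame-top over every sample in the move, plus a safety margin."""
    return max(frame_top_on_screen_m(s, sensor_height_mm) for s in move) + margin_m


# Hypothetical values for illustration only.
SENSOR_HEIGHT_MM = 24.0  # height of a 36 x 24 mm full-frame gate
EXAMPLE_MOVE = [
    CameraSample(distance_to_screen_m=10.0, lens_height_m=1.5, focal_length_mm=24.0),
    CameraSample(distance_to_screen_m=8.0, lens_height_m=3.0, focal_length_mm=24.0),  # crane up, dolly in
    CameraSample(distance_to_screen_m=6.0, lens_height_m=3.0, focal_length_mm=35.0),  # zoom in
]

if __name__ == "__main__":
    fov = vertical_fov_deg(24.0, SENSOR_HEIGHT_MM)
    print(f"Vertical FOV at 24 mm: {fov:.1f} degrees")
    need = required_screen_height_m(EXAMPLE_MOVE, SENSOR_HEIGHT_MM, margin_m=0.5)
    print(f"Screen top must reach at least {need:.2f} m")
```

With these made-up numbers, the worst case is the middle of the move, where the crane has risen but the lens is still wide. That sample puts the top of frame at 7.0 m, so the sketch asks for a 7.5 m screen once a 0.5 m margin is added. The point of taking the maximum over the whole move is the same point techvis makes: the screen has to cover the frame at every moment, not just at the start. A real rig would also have to account for camera tilt, lens distortion, and any extra margin VFX wants around the frame, which this sketch ignores.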

Postvis — post-visualization — operates at the other end of production. Where previs happens before the shoot, postvis happens after it, during the editing and post-production phase. When footage comes back from the shoot, the edit contains gaps — shots where VFX work will eventually live but doesn't exist yet. Those gaps might be held by a black frame, a previs shot, or a piece of reference footage. They are placeholders. The film can't be screened, evaluated, or finished with placeholders in it. Postvis fills those gaps with rough, quickly assembled digital shots that approximate the final result well enough for the edit to function as a complete film. Postvis is faster and cheaper than final VFX but more refined than previs. The characters might have basic textures. The environments might have rough lighting. The compositing might be rough. The goal is legibility — making it clear enough what the finished shot will look like that the director and editor can make cutting decisions, that the studio can screen the film and give notes, and that the sound department has something close to the final image to design against.

Postvis has a complicated relationship with the final VFX work. On one hand it's essential — without it the film can't be evaluated or completed. On the other hand, directors and editors sometimes become attached to postvis solutions and carry creative decisions made under the constraints of rough, fast work into the final VFX. A cut that was built around a postvis shot may not work as well with the final version. An editorial choice that seemed to work with rough postvis characters may not hold when the finished animation reveals something different about the performance. The VFX supervisor and the editor have to stay alert to this, treating postvis as a scaffold to be removed rather than a foundation to build on. Postvis has also blurred into what used to be called temp VFX — the broader category of rough work assembled during post to allow the film to function. The distinction has become less meaningful as postvis tools have improved and the line between rough and finished has moved. What gets called postvis on one production might be called temp on another. The underlying function is the same: keeping the film alive and evaluable while the final work is being built.

On a modern studio VFX film, previs, techvis, and postvis form a continuous visualization track that runs alongside the film from development through delivery. Previs plans the sequences before the shoot. Techvis translates the plan into physical specifications during prep. Postvis maintains the film's coherence during post while final VFX are completed. The director who understands all three is a director who never loses control of the film. Previs means you walk onto set with a plan. Techvis means that plan is physically executable. Postvis means you can see and evaluate what you're building before it's finished. Each one is a tool for maintaining creative authority over work that, without them, would be happening too far from the director's hands to influence. The director who treats previs as an optional step, who doesn't engage with techvis, and who doesn't pay attention to postvis until the final shots arrive is ceding control of their film to the people who fill those gaps. Next, let's move on to virtual production. We'll look at how all these kinds of visualization work together, and why virtual production was essential on Avatar (2009). 🫶🏼